The Data is In: Nobody Wants an AI Boss

Jeff Whatcott · December 2, 2025

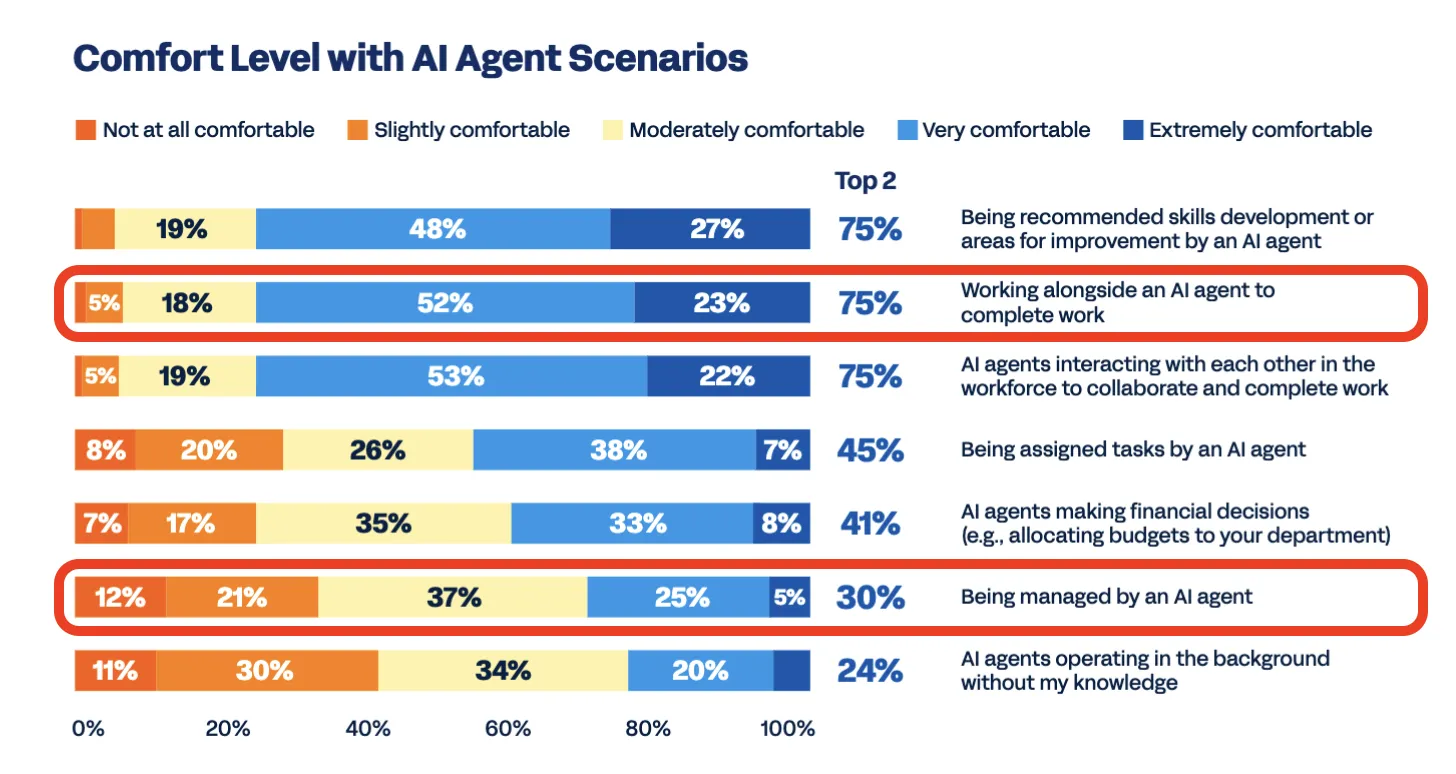

Seventy-five percent of employees are comfortable working alongside AI agents. But change one variable, and that support collapses.

A new global report from Workday reveals the exact boundary where adoption turns into revolt. When asked if they are comfortable being managed by AI, the number plummets to 30%.

Source: Workday (2025), “AI Agents in the Workplace: A Global Study.”

Admittedly, “being managed” is an ambiguous phrase. Did respondents imagine a robot supervisor firing them, or just an algorithm sorting their inbox? The report doesn’t explicitly define it. But the data offers a clue: workers were noticeably more comfortable with AI “assigning tasks” (45%) than with AI “managing” them (30%).

This gap reveals that the resistance isn’t about the mechanics of work. It is about the locus of control. The more AI moves from a tool that enables action to an authority that restricts agency, the more humans revolt.

This signal is the most important variable in AI strategy right now. Organizations that treat these use cases as identical, assuming that because people liked the coding assistant they’ll accept the algorithmic manager, are walking into a trap.

The collapse in trust stems from a cognitive error known as “technosolutionism.” This is the belief that better technology automatically solves organizational problems. Leaders assume efficiency is the universal goal. But behavioral science shows that humans are wired for loss aversion. We fear the loss of autonomy far more than we value the gain in efficiency.

I experienced this firsthand in the late 1990s while running the diamonds division of a luxury dot-com. I was paired with Keith, a legendary diamond buyer who had forgotten more about diamonds than I would ever know. To help scale his genius, I built a dynamic pricing model that used wholesale index data to set retail prices.

I loved the model’s elegance. Keith hated it.

He didn’t just fear becoming irrelevant. He feared the model’s opacity. It didn’t account for the “hot spots” he sensed through his deep industry network—trends that hadn’t hit the data yet. To him, the model was a constraint that forced him to leave money on the table.

We eventually found a rhythm. He learned to trust the model for the baseline, and I learned to build overrides for his intuition. But that initial friction was the same dynamic we see today: the resistance to a system that prioritizes mathematical optimization over human judgment.

We can map this by dividing AI deployments into two psychological territories.

The Psychological Territories of AI Adoption

When AI functions as a tool you hold in your hand, it acts as a lever. It amplifies human intent. This aligns with the behavioral principle of augmentation, using technology to free humans for higher-value work. This is the domain of IT automation, forecasting, and content drafting. It’s the zone where 75% of workers are comfortable working with AI.

When AI functions as a manager (allocating time, judging performance, or deciding pay), the research shows it is perceived as a leash. It restricts, or threatens to restrict, human intent. It triggers deep-seated fears of losing control to a “black box” that optimizes for metrics without understanding context. This is the domain of algorithmic hiring and automated performance reviews. It’s the zone where only 30% of workers are comfortable with AI.

The difference lies in the relationship between the human and the machine. One gives people superpowers; the other threatens to take away their voice and agency. We’re often told that the solution is to “put a human in the loop” to make sure the AI isn’t making mistakes that hurt people. It sounds great, but it’s not so simple.

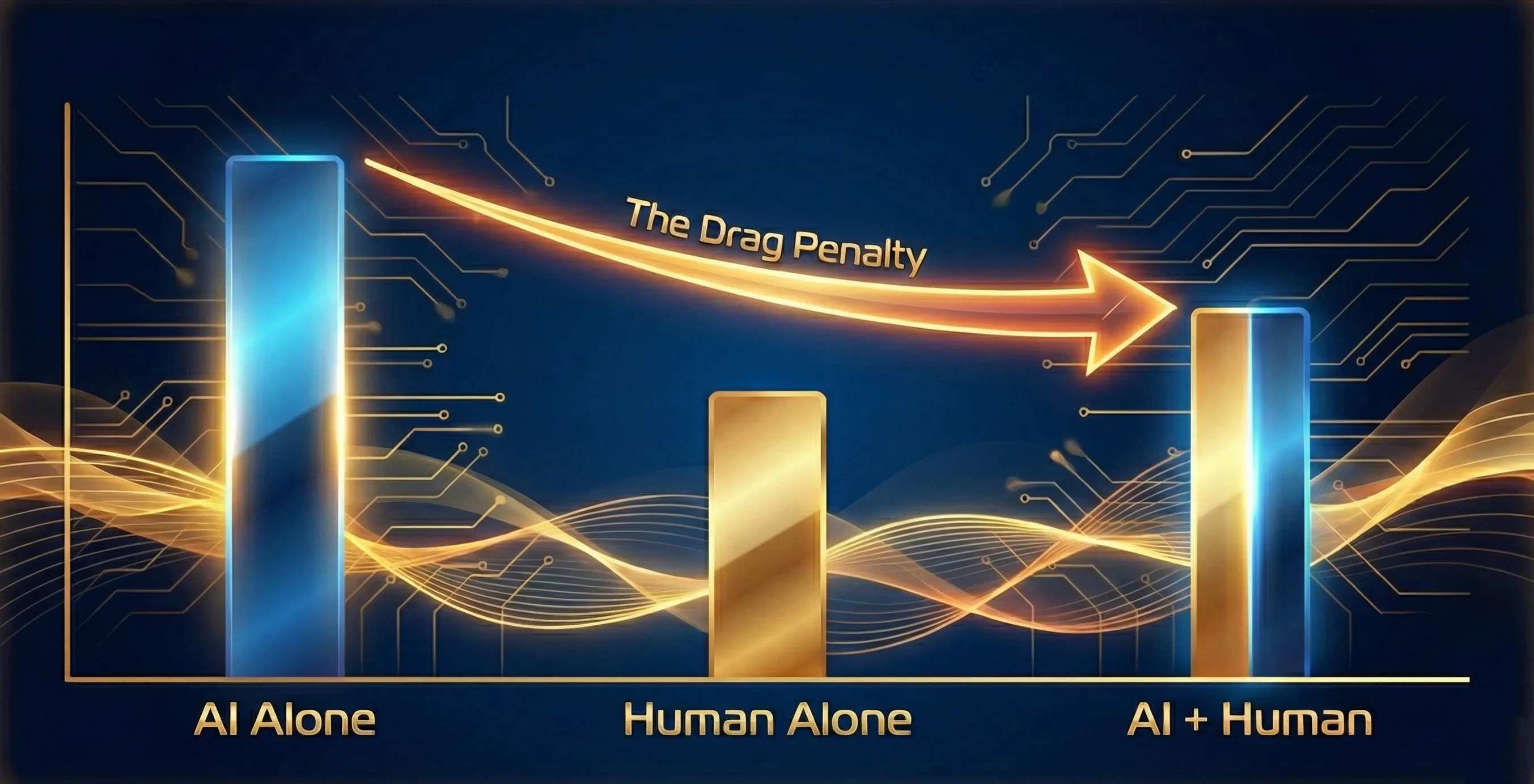

Recent research has surfaced a deeply counterintuitive truth: putting a human in the loop doesn’t guarantee better outcomes. A major meta-analysis in Nature Human Behaviour analyzed 106 studies and found that the critical variable is the human’s capability relative to the AI. When humans were more skilled than the AI at a task but perhaps less able to do it consistently at scale, human-AI collaboration created better results than either could deliver on their own. But for tasks where the AI was fundamentally more capable than humans, adding human oversight actually degraded performance compared with the AI working alone. A low-capability human overriding a high-performing AI doesn’t add helpful judgment. It adds drag.

The Drag Penalty: When Less Capable Humans Interfere with More Capable AI

We now have two different maps of the same territory. The Workday opinion data tells us where workers would prefer that we draw the seam between human cognition and AI cognitive instruments. The Nature research tells us what kind of person should be in the loop at that seam. Designing the boundary is necessary, but it isn’t sufficient: the system works only when the human in the loop is equipped for the specific role required at the seam.

Designing for this reality requires a counterintuitive approach. Behavioral scientists Chokshi, Grenny, and Hale, writing in the Harvard Business Review, suggest that the goal shouldn’t always be a seamless experience. In fact, for high-stakes work, “seamless” might be the opposite of what we want.

When interfaces are too smooth, humans tend to switch off critical thinking and start rubber-stamping the AI’s instant, plausible-sounding output, even when it is factually wrong. The researchers found that adding “intentional friction,” such as explicit validation steps, can improve accuracy by requiring a qualified human to consciously scrutinize the AI’s work where the stakes warrant that focused attention.

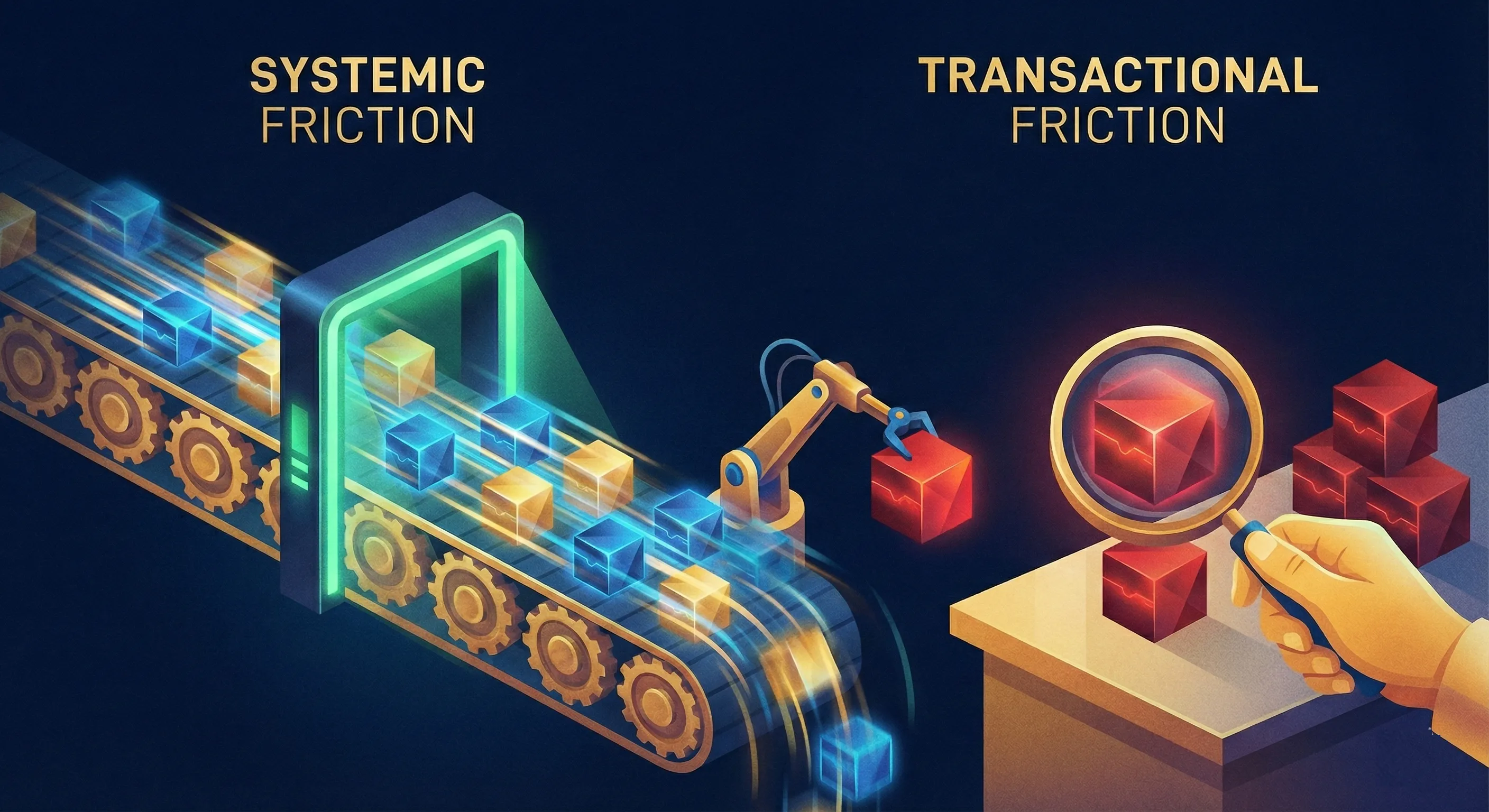

The magic, then, happens when we allocate friction strategically, where the stakes call for it, instead of slowing everything down by compulsively double-checking every output.

We can think of this as a spectrum of friction.

Systemic Friction is for the 80% of high-volume, low-stakes work (drafting code, summarizing meetings). Here, we build technical guardrails into the AI system itself so the human doesn’t have to review every output and occasional errors are manageable. Systemic friction means spending a few more API calls and a few more tokens to produce a “good enough” result.

Transactional Friction is for the 20% of high-stakes decisions (hiring, firing, legal liability). Here, we deliberately insert a qualified human “speed bump” into the workflow so the human can fully assume the role that their ultimate accountability requires.

The Spectrum of AI Adoption Friction

This validates a core principle of our recent research and work: The seam is the strategy.

The “seam” is the visible, designed, and governed boundary where the AI hands off to the qualified human. Correctly structuring work on both sides of the seam is the difference between a high-performance team and a revolt. For low-stakes tasks, you rely on well-structured Systemic Friction (guardrails) and let the AI execute. This captures the leverage advantage. But for high-stakes tasks, you rely on Transactional Friction: instruct the AI to explain its reasoning, and prompt the human to click “Approve” before a decision is final. This restores uniquely human agency and accountability and prevents the perceived AI leash effect. A well-designed seam is not seamless, by design.
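For readers who build these workflows, the seam can be sketched as a simple routing rule. The Python below is a minimal, hypothetical sketch, not a description of any product or of our own tooling: the names `Stakes`, `Proposal`, `Decision`, `route`, and the `passes_guardrails` check are illustrative assumptions. Low-stakes work passes through an automated check (Systemic Friction) and executes without per-item review; high-stakes work must carry the AI’s reasoning and waits for an explicit human approval (Transactional Friction).

```python
from dataclasses import dataclass
from enum import Enum
from typing import Callable, Optional


class Stakes(Enum):
    LOW = "low"    # high-volume work, e.g. drafting code or summarizing meetings
    HIGH = "high"  # e.g. hiring, firing, or legal liability


@dataclass
class Proposal:
    """Something the AI proposes to do (hypothetical structure)."""
    task: str
    stakes: Stakes
    output: str
    reasoning: str = ""  # the AI's explanation, required for high-stakes work


@dataclass
class Decision:
    task: str
    output: str
    approved_by: Optional[str]  # None means it executed behind systemic guardrails


def passes_guardrails(p: Proposal) -> bool:
    # Hypothetical placeholder for Systemic Friction: a real system might run
    # validators, tests, or an extra model pass here instead of a human review.
    return bool(p.output.strip())


def route(
    p: Proposal,
    reviewer: Callable[[Proposal], bool],
    reviewer_name: str,
) -> Optional[Decision]:
    """Send low-stakes work through guardrails and high-stakes work to a human."""
    if p.stakes is Stakes.LOW:
        # Systemic Friction: no per-item human review; failed checks are rejected.
        return Decision(p.task, p.output, None) if passes_guardrails(p) else None

    # Transactional Friction: the AI must explain itself, and a qualified human
    # must explicitly approve before the decision becomes final.
    if not p.reasoning.strip():
        raise ValueError(f"high-stakes task {p.task!r} needs the AI's reasoning")
    return Decision(p.task, p.output, reviewer_name) if reviewer(p) else None


if __name__ == "__main__":
    summary = Proposal("summarize standup", Stakes.LOW, "Team shipped the login fix.")
    shortlist = Proposal(
        "shortlist candidates",
        Stakes.HIGH,
        "Advance candidate B.",
        reasoning="B meets 4 of 5 listed requirements.",
    )

    def clicks_approve(p: Proposal) -> bool:
        # Stand-in for a human who reads the reasoning and clicks "Approve".
        print(f"Review {p.task!r}: {p.output} (why: {p.reasoning})")
        return True

    print(route(summary, clicks_approve, "hiring manager"))    # executes, no review
    print(route(shortlist, clicks_approve, "hiring manager"))  # reviewed, approved
```

The design choice worth noting is that only high-stakes items ever reach `reviewer`, which keeps scarce human attention where accountability sits. Consistent with the meta-analysis above, whoever fills the `reviewer` role should be someone whose judgment on that task exceeds the AI’s.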

Workday’s report argues this responsibility requires a new kind of leadership role—a “Chief Work Officer.” Unsurprisingly, for a company that sells enterprise workforce management software, the solution is a new C-suite buyer. But that seems like the wrong framing. Designing the seam between humans and AI isn’t a new specialty C-level role. It’s a fundamental aspect of management in the AI age. Every manager who deploys AI tools in their team’s workflow is making seam decisions, whether they realize it or not. The question is whether they’re making those decisions consciously, with a clear framework, or blindly, hoping for the best.

If you design for leverage, giving humans better tools to execute their own intent, you’ll find a workforce ready to adopt AI at speed. If you design for control, giving AI authority over human outcomes while still holding humans accountable, you’ll be fighting biology and psychology to make your system work. It won’t work, and you’ll be left with a workforce that is resentful, demoralized, or both.

The technology is ready. The workforce is willing. The only thing missing is the architecture to put the seam in the right place, put the right person on overwatch, and design the environment so they can focus.

References

- Workday (2025). “AI Agents in the Workplace: A Global Study.”
- Vaccaro, M., Almaatouq, A., & Malone, T. (2024). “When combinations of humans and AI are useful: A systematic review and meta-analysis.” Nature Human Behaviour.
- Chokshi, N., Grenny, J., & Hale, J. (2025). “How Behavioral Science Can Improve the Return on AI Investments.” Harvard Business Review.
