The AI Liability Squeeze

Timothy Robinson · February 18, 2026

Something critically important happened to your business on January 1st that you may not know about.

Several of the largest US-based insurance companies, including AIG, WR Berkley, and Great American, built major exclusions into their core business insurance products, including General Liability (GL), Errors and Omissions (E&O), and Directors and Officers (D&O) policies, allowing them to decline coverage for damages incurred through the use of AI. (Jump to “What Can You Do About It?”)

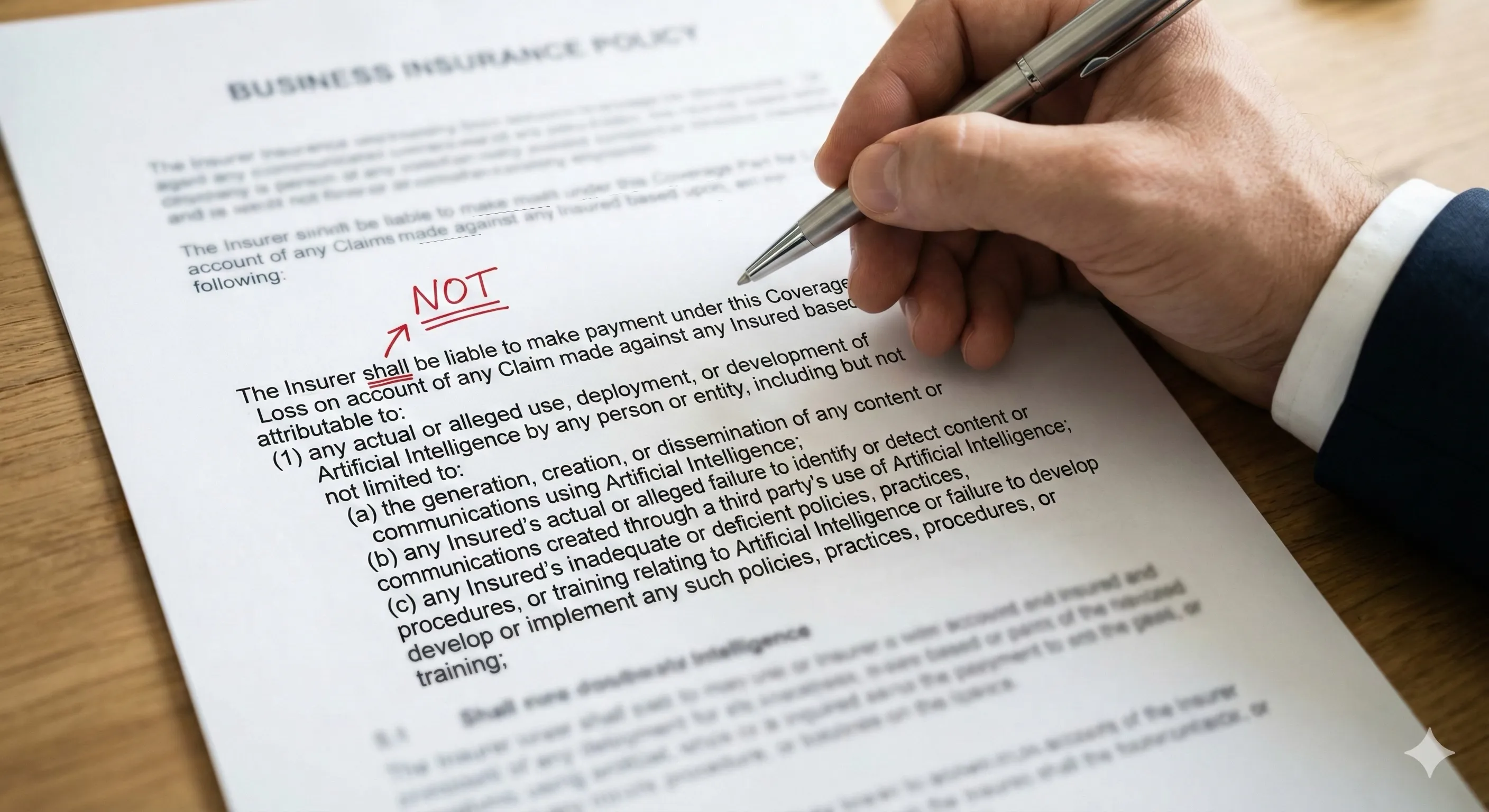

For example, the WR Berkley exclusion, effective on all renewals starting January 1, 2026, states, in part…

“The Insurer shall not be liable to make payment under this Coverage Part for Loss on account of any Claim made against any Insured based upon, arising out of, or attributable to:

“(1) any actual or alleged use, deployment, or development of Artificial Intelligence by any person or entity, including but not limited to:

“(a) the generation, creation, or dissemination of any content or communications using Artificial Intelligence;

“(b) any Insured’s actual or alleged failure to identify or detect content or communications created through a third party’s use of Artificial Intelligence;

“(c) any Insured’s inadequate or deficient policies, practices, procedures, or training relating to Artificial Intelligence or failure to develop or implement any such policies, practices, procedures, or training;…”

This new language, characterized by many in the industry as a new “absolute” AI exclusion, is likely to have huge legal and financial ramifications for companies just like yours.

An Insurance Industry Sea Change

So why is this happening? And why now?

It is happening because in 2025 more than 600 cases involving AI hallucinations or fabrications were brought to court in this country. And, while insurance companies can absorb an adverse ruling or two, what they cannot absorb is a wave of adverse rulings based on new and poorly understood technologies.

At the end of 2025, the insurance industry collectively sought guidance from Verisk ISO, a key insurance forms and policies provider. Verisk ISO’s Core Lines and Emerging Issues team subsequently published a series of underwriting tools, including CG 40 47, CG 40 48, and CG 35 08[^1], that allow US-based insurance carriers to routinely exclude business processes involving generative AI (GAI) from coverage with respect to both “bodily injury, property damage” and “personal and advertising injury.” Presumably these exclusions will stay in place until the data on those 600 cases starts coming in with enough signal to build actuarial tables for this new kind of risk.

Of course, the AI platforms themselves (OpenAI’s ChatGPT, Anthropic’s Claude, Google’s Gemini, etc.) all have all-caps disclaimers of liability that any and all users agree to. For example, ChatGPT’s terms say,

OUR SERVICES ARE PROVIDED ‘AS IS.’… WE DO NOT WARRANT THAT THE SERVICES WILL BE UNINTERRUPTED, ACCURATE OR ERROR FREE… YOU ACCEPT AND AGREE THAT ANY USE OF OUTPUTS FROM OUR SERVICE IS AT YOUR SOLE RISK.

Claude’s terms of use read,

THE SERVICES AND OUTPUTS ARE PROVIDED “AS IS” AND “AS AVAILABLE” WITHOUT WARRANTY OF ANY KIND… ANTHROPIC DOES NOT WARRANT, AND DISCLAIMS THAT, THE SERVICES OR OUTPUTS ARE ACCURATE, COMPLETE OR ERROR-FREE.

The other platforms all have similar language that disclaims any platform liability as a term of use. And no courts have, as yet, shown any interest in questioning the legitimacy of those disclaimers or pursuing liability for LLM errors with the LLM platforms themselves.

The Legal and Regulatory Outlook is Murky at Best

In the meantime, despite the deluge of AI hallucination suit filings mentioned above, the US legal landscape is still muddled. Most of the high-profile wrongful death cases involving suicide, like Montoya v. Character Technologies (2025) and Raine v. OpenAI (2025), are still in the early stages of discovery or have been settled privately (as with Montoya v. Character Technologies [2024]). The most prominent settled case mentioned in blogs and Substacks, Moffatt v. Air Canada, clearly established Air Canada’s direct responsibility for the error made by a chatbot on its website, but that ruling was from a Canadian tribunal, not a US court. Another prominent case, Wolf River v. Google (2025), is still being litigated. Several early rulings suggest that the court is open to holding Google responsible for demonstrated financial harms attributed to an error made by its Gemini-powered AI Overview. Ironically, though, such a ruling would be an example of holding a platform responsible for errors made by its LLM.

While wrongful death and defamation cases grab headlines, the quieter but potentially more costly threat lies in the many algorithmic discrimination cases that have been brought. In Mobley v. Workday (2023), the federal court ruled against all motions by Workday’s attorneys to dismiss the case. It based those rulings on the theory that an AI vendor can be treated as an “agent” of its employer‑clients under US anti‑discrimination law. The plaintiff alleges that Workday’s AI‑powered screening tools systematically rejected candidates based on race, age, and disability, and the court held that if those allegations prove true, the vendor itself could face direct liability. The framing again signals that US courts are willing to treat algorithmic decision‑makers as part of the employer’s legal apparatus, not as merely neutral tools in the background. But, again, this seems to be an example of the court holding the platform, or AI provider, liable for errors made by the AI, rather than the employers using Workday tools.

Nevertheless, other rulings show that courts and regulators are willing to hold US companies liable for harms attributable to their own use of AI tools. In FTC v. Rite Aid (2023), the FTC took enforcement action against Rite Aid, not the AI vendor, for deploying facial recognition software against potential shoplifters. The software produced thousands of false positive alerts that disproportionately affected customers in majority non-white neighborhoods. Similarly, the FTC during the Biden administration ruled against DoNotPay, a company that marketed “the world’s first robot lawyer,” finding that it never tested whether its AI outputs were comparable to a human lawyer’s work and never hired attorneys to verify the quality of the outputs. The FTC under the current administration then confirmed and finalized that finding in February of this year and assigned a penalty of $193,000.

States Are Struggling to Fill the Federal Vacuum

At the state level, Colorado’s AI Act (SB 24-205), signed into law in May 2024 and effective June 30, 2026 (delayed from its original February 1 start date by SB 25B-004), creates explicit obligations for both “developers” and “deployers” of high-risk AI systems: those that make, or are a substantial factor in making, “consequential decisions” affecting areas like employment, lending, housing, healthcare, insurance, and education. Developers must provide deployers with detailed documentation on training data, known discrimination risks, and intended uses. Deployers, in turn, must implement a risk management policy and program of their own, complete impact assessments at least annually, notify consumers before a consequential decision is made, and provide an opportunity to appeal adverse decisions with human review where technically feasible. Notably, the law establishes two protections: a rebuttable presumption of reasonable care for developers and deployers who comply with its requirements, and a separate affirmative defense for those who discover and remedy violations through internal review, red teaming, or user feedback and who otherwise follow a recognized AI risk management framework (such as NIST’s AI RMF or ISO/IEC 42001). Enforcement lies exclusively with the Colorado Attorney General, with no private right of action. However, the law faces potential federal challenges following Executive Order 14365 in December 2025, which cited it as potentially “onerous” and directed the FTC to review its impact on AI model outputs.

But Colorado is not alone. Texas enacted the Responsible AI Governance Act (TRAIGA), effective January 1, 2026, which takes a fundamentally different approach by focusing on intent, holding developers liable only if they specifically intended to manipulate behavior or discriminate. Illinois amended its Human Rights Act with HB 3773, effective January 1, 2026, to treat AI-driven employment discrimination as a civil rights violation, regardless of intent. Perhaps most significantly for liability insurance, California passed AB 316, which explicitly prohibits “a defendant who developed, modified, or used artificial intelligence, as defined, from asserting a defense that the artificial intelligence autonomously caused the harm to the plaintiff.” Furthermore, a new wave of “chatbot safety” laws in California (like SB 243) and pending legislation in states like Washington and Oregon are introducing regulatory requirements on social media and chatbot companion companies that could empower individuals, not just Attorneys General, to sue directly for harms arising from the use of AI.

Elsewhere, judges are sanctioning lawyers for unverified AI outputs in their legal filings. In a growing line of US cases, courts have discovered “false case citation hallucinations generated by artificial intelligence”[^4] in briefs and motions, imposed monetary penalties, and in some instances, even issued public reprimands. One recent decision contrasted two attorneys who signed the same AI‑research-tainted filing: one lawyer who quickly owned the error and tightened supervision received a lighter response, while another lawyer who blamed a subordinate and implemented a blanket “zero‑tolerance AI policy” was personally fined $4,000 and ordered to circulate the sanctions order and her new AI policy to the state bars where she practices and to append those documents to all current cases where she is representing clients.

Across more than a hundred documented incidents, the pattern is consistent: courts are not banning AI, but they are insisting that professionals retain a non‑delegable duty to verify whatever AI produces.

De-Facto AI Use Regulation

Whereas federal and state legislative remedies and government regulatory frameworks (like the EU AI Act) are slow moving, limited by jurisdiction, and require the building and deployment of enforcement architecture, changes in liability coverage and subsequent civil liability rulings have immediate and universal implications, effectively translating vague governance concerns into substantial financial liabilities for companies like yours.

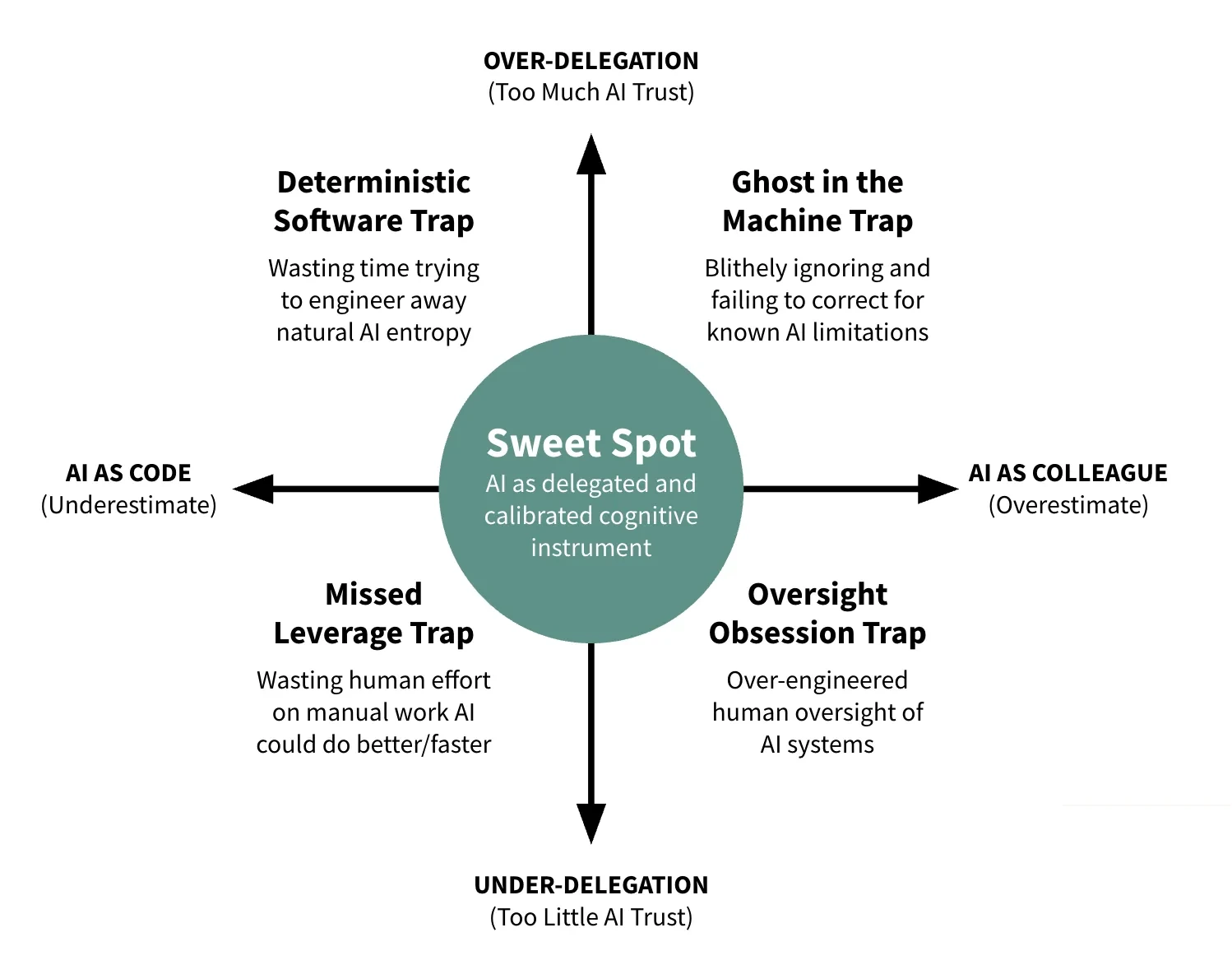

If it wasn’t clear before, it is certainly clear now: the main question you need to answer is not “What can AI do for your company?” but “What should AI do for your company given proper governance constraints, and how do you build those constraints?”

What Can You Do About It?

Here are some steps you can take right now (the sooner the better).

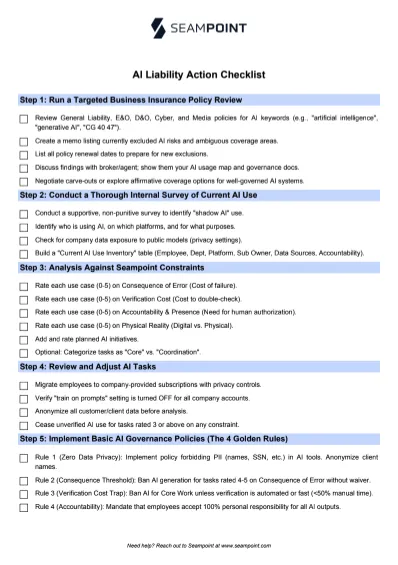

Step 1: Run a Targeted Business Insurance Policy Review Looking for Changes to AI Liability

Have your internal or external counsel or your insurance broker review all General Liability, Errors & Omissions, Directors & Officers, Cyber, and Media policy language, looking specifically for any mention of “technology risk,” “artificial intelligence,” “algorithmic,” “automated decision,” or “generative AI,” or any mention of ISO-style endorsements like “CG 40 47,” “CG 40 48,” or “CG 35 08.”

Create a short memo listing any AI risks that are currently excluded or where coverage may be ambiguous. Don’t neglect to ALSO list all policy renewal dates so you’re ready to review any new policy inclusions.
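If your policies are available as plain text, a first pass over them can be automated before counsel or your broker does the real review. The sketch below is only an illustration: the function name `scan_policy` is hypothetical, the term list is copied from the step above, and it checks one line at a time, so a phrase split across a line break would be missed.

```python
import re

# Search terms copied from the policy review step above.
AI_TERMS = [
    "technology risk",
    "artificial intelligence",
    "algorithmic",
    "automated decision",
    "generative AI",
]
# ISO-style endorsement numbers named in this article.
ENDORSEMENTS = ["CG 40 47", "CG 40 48", "CG 35 08"]


def scan_policy(text: str) -> list[tuple[int, str, str]]:
    """Return (line_number, matched_term, line_text) for every hit."""
    patterns = []
    for term in AI_TERMS + ENDORSEMENTS:
        # Tolerate irregular spacing inside a term, e.g. "CG  40 47".
        regex = r"\s+".join(re.escape(part) for part in term.split())
        patterns.append((term, re.compile(regex, re.IGNORECASE)))

    hits = []
    for number, line in enumerate(text.splitlines(), start=1):
        for term, pattern in patterns:
            if pattern.search(line):
                hits.append((number, term, line.strip()))
    return hits
```

A hit only tells you where to look. Whether a clause actually excludes coverage is a legal reading, not a keyword match.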

If you DO find any exclusions, work with your broker or with the insurance agent directly to call attention to these policies, show them your internal AI usage map (see Steps 2 and 3 below) AND your internal AI governance documents (see Step 5 below) and ask which exclusions could be narrowed in light of those documents, including carve-outs for low consequence applications or for fully verified outputs.

Ask if you can qualify for any affirmative coverage for well-governed AI systems. Negotiate terms for a separate policy, if necessary. Or, search for insurance agencies that are currently offering coverage for well-governed AI workflows and explore shifting your carrier as needed.

Step 2: Conduct a Thorough Internal Survey of Current AI Use

There is almost no question that your employees are already using AI to help them with their work tasks. This is called “shadow AI,” and it could be exposing your entire organization to liability you don’t know about. If you think you don’t have this exposure because you have a “No AI” policy, keep in mind that mere prohibition doesn’t stop use; it just drives it underground, where it cannot be governed.

Have an officer of the company conduct a thorough survey of current AI use. Try hard to make your inquiries seem supportive and constructive, not punitive in any way, to increase the likelihood of compliance. Try to identify who is using AI, on which platforms, and for what purposes. Try to prioritize ongoing workflow usage over one-off usage.

Pay particular attention to any company data that is being exposed to public models without the appropriate privacy settings! Also note any AI uses that produce customer-facing documents, as this is one of the riskiest uses of all.

Build a “Current AI Use Inventory” table with the following columns:

- Employee
- Department/Supervisor
- AI Platform (e.g., MS Copilot, ChatGPT, Salesforce AI, Windsurf Coding Assistant, etc.)
- Subscription Owner (e.g., free public use, personal subscription, company subscription, etc.)
- Data Sources (i.e., are they using any company data or documents to ground the AI outputs?)
- Accountability (i.e., if something goes wrong, who is responsible?)
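A spreadsheet is all you need for this table, but if you would rather keep the inventory as structured data, the columns above map directly onto a small record type. This is a minimal sketch: the class name `AIUseRecord`, the field names, and the helper `inventory_to_csv` are illustrative choices, not part of any Seampoint tooling.

```python
import csv
import io
from dataclasses import asdict, dataclass, fields


@dataclass
class AIUseRecord:
    """One row of the "Current AI Use Inventory" table."""
    employee: str
    department_supervisor: str
    ai_platform: str
    subscription_owner: str
    data_sources: str
    accountability: str


def inventory_to_csv(records: list[AIUseRecord]) -> str:
    """Serialize the inventory to CSV text with a header row."""
    buffer = io.StringIO()
    writer = csv.DictWriter(
        buffer,
        fieldnames=[f.name for f in fields(AIUseRecord)],
        lineterminator="\n",
    )
    writer.writeheader()
    for record in records:
        writer.writerow(asdict(record))
    return buffer.getvalue()
```

The resulting CSV opens in any spreadsheet tool, so the person running the survey can keep working in whatever format they prefer.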

Step 3: Run an Analysis of All Current and Planned Use of AI Against Seampoint’s Four Constraints

Add four additional columns to your “Current AI Use Inventory” table (see Step 2 above) where you will rate each current use of AI tools on a scale from 0 to 5 across each of the following Seampoint governance constraints:

- Consequence of Error. What are the costs when AI is wrong? A poorly worded internal email is likely benign. Payment processing errors are serious but reversible. Erroneous engineering calculations for a building project can cause catastrophic failure. The level of consequence determines how much AI risk is inherent in the proposed activity. A “0” in this column indicates no consequence of error and a “5” means drastic consequences such as risk of physical harm or significant reputational damage to the company.
- Verification Cost. How costly is it to double check the AI’s output? AI-generated software code can be verified through affordable automated unit tests, whereas AI-generated medical diagnoses could require a highly paid physician to re-examine the entire case, which is potentially more expensive than just having the physician do the initial diagnosis themselves. A “0” in this column indicates that AI outputs are easy to verify (such as a Subject Matter Expert reviewing outputs while researching their own field) and a “5” indicates that verification costs exceed the efficiencies gained by using AI in the first place.
- Accountability and Presence. Does the task require human authorization or authentic human presence? As discussed above, a lawyer can’t delegate responsibility for their own legal counsel. Other tasks, such as counseling, leadership, and negotiation, require authentic presence because the human relationship is the point. A “0” in this column indicates that there are no accountability or human presence requirements for this task, whereas a “5” indicates that human authorization is legally required or that building human relationships is the entire point of the work task and, therefore, AI should ONLY have an advisory role.
- Physical Reality. Does the task require manipulating atoms in unstructured environments? Software scales infinitely, but physical intervention in unstructured physical environments is constrained by safety and physics despite recent gains in robotics for highly structured environments (like the Amazon shipping floor or the Ford auto frame construction floor). A “0” in this column indicates that the work task is purely digital and a “5” indicates that the work task is purely physical and can’t be done by robotics. Such tasks likely won’t be listed on your “Current AI Use Inventory” table in the first place, but if they are, make sure that AI is only being used far in the background, such as for simulation-based training, not for execution of the actual task itself.

Important: Add additional rows for any planned AI initiatives in your organization and fill out all the columns including Accountability and the Four Constraints ratings.

For extra credit, you could add another column to the right that simply classifies each internal AI task as either a “Core” work task or a “Coordination” work task. Coordination work tasks are the work we do to coordinate our core work with others in the organization, including emails and meetings with bosses, colleagues, and external partners or vendors. There are fairly well established tools and protocols for coordination work, unlike core work tasks, which tend to rely on highly specialized applications. Still, if your coordination work has high consequence and you are using AI regularly, it’s better to list it than not.

Step 4: Review and Adjust Your Current AI Use Accordingly

Based on the above analysis, take steps to correct the obvious issues. This could include…

- Getting employees to use company-provided subscriptions rather than personal subscriptions or free versions that lack the privacy controls.
- Verifying that all company-provided subscriptions have the “train on prompts” setting turned off.
- Taking steps to anonymize any customer or client data before using AI to analyze it.
- Ceasing the use of unverified AI outputs for any work tasks rating a 3 or above across any of the four constraints or for the production of any customer-facing materials.
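The last item in that list is a rule you can apply mechanically once the Step 3 ratings are filled in. Here is one way it might look in code, assuming each inventory row carries its four ratings plus two yes/no flags. The names `RatedUse` and `needs_correction` are made up for this example.

```python
from dataclasses import dataclass

CONSTRAINTS = (
    "consequence_of_error",
    "verification_cost",
    "accountability_presence",
    "physical_reality",
)


@dataclass
class RatedUse:
    """An inventory row with its Four Constraints ratings (0 to 5 each)."""
    task: str
    consequence_of_error: int
    verification_cost: int
    accountability_presence: int
    physical_reality: int
    customer_facing: bool = False
    outputs_verified: bool = False

    def __post_init__(self) -> None:
        for name in CONSTRAINTS:
            rating = getattr(self, name)
            if not 0 <= rating <= 5:
                raise ValueError(f"{name} must be between 0 and 5, got {rating}")


def needs_correction(use: RatedUse) -> bool:
    """Flag unverified output on any task rated 3+ or any customer-facing task."""
    if use.outputs_verified:
        return False
    highest = max(getattr(use, name) for name in CONSTRAINTS)
    return highest >= 3 or use.customer_facing
```

For instance, `needs_correction(RatedUse("Contract summary", 3, 2, 1, 0))` returns `True`, while the same row with `outputs_verified=True` returns `False`.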

Step 5: Put Some Basic AI Governance Policies in Place

Your governance policy doesn’t need to be a 50-page legal document. In fact, if it is, no one will read it. Instead, you need a set of operational constraints that map to the reality of how AI actually fails.

Based on the Seampoint “Distillation of Work” framework, here are some recommended basic AI Governance policies that you should implement immediately to take to your insurers and help protect your balance sheet.

Note: Be sure to have your internal or external counsel review these or any other policies you draft before publishing them to your employees or approaching your insurance company or brokers with them.

Rule 1: The “Zero Data” Privacy Policy

- Policy: “No personally identifiable information (PII) regarding employees or customers, including full names, social security numbers, driver’s license or passport numbers, financial account numbers, email addresses, phone numbers, or home addresses, may be entered into any AI tool. Furthermore, all client or customer company names must be anonymized (e.g., ‘Client A’) before analyzing any sensitive information such as sales data or strategic plans.”
- Why: Feeding sensitive data into public models violates privacy laws, exposes trade secrets, and can inadvertently train the model to leak your proprietary information to competitors.
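To make the anonymization half of this rule concrete, here is a rough sketch of a pre-processing pass that masks a few easily patterned identifiers and swaps known client names for neutral labels. It is deliberately narrow and should not be treated as a compliance tool: the patterns only cover email addresses and US-style social security and phone numbers, the helper name `anonymize` is hypothetical, and personal names, street addresses, and account numbers would need separate handling.

```python
import re
import string

# Illustrative patterns only; real PII detection needs far broader coverage.
PATTERNS = {
    "EMAIL": re.compile(r"[A-Za-z0-9._%+-]+@[A-Za-z0-9.-]+\.[A-Za-z]{2,}"),
    "SSN": re.compile(r"\b\d{3}-\d{2}-\d{4}\b"),
    "PHONE": re.compile(r"\b\d{3}[-. ]\d{3}[-. ]\d{4}\b"),
}


def anonymize(text: str, client_names: list[str]) -> str:
    """Mask patterned identifiers, then relabel clients as Client A, B, ..."""
    for label, pattern in PATTERNS.items():
        text = pattern.sub(f"[{label}]", text)
    # zip stops after 26 names; a longer client list needs another scheme.
    for letter, name in zip(string.ascii_uppercase, client_names):
        text = re.sub(re.escape(name), f"Client {letter}", text, flags=re.IGNORECASE)
    return text
```

Keep the mapping from “Client A” back to the real name outside the AI tool so that results can be re-identified internally.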

Rule 2: The “Consequence of Error” Threshold Policy

Use the “0 to 5” consequence scale you built in Step 3 to draw a hard line.

- Policy: “No AI generation allowed for any task rated 4 or 5 on Consequence of Error without a signed human-in-the-loop waiver.”
- Why: If an error causes physical harm, financial ruin, or massive reputational damage, you cannot insure away that risk. These tasks must remain Human-Only or Assisted (where AI generates options, but the human is the sole decider).

Rule 3: The “Verification Cost” Trap Policy

- Policy: “AI may not be used for Core Work unless there is a deterministic, automated way to verify the output (e.g., code that compiles, data that matches a source record) OR the employee can demonstrate that verification takes less than 50% of the time required to perform the task manually.”
- Why: This prevents your workforce from drowning in the “slop” of checking bad AI outputs, and it protects you from the liability of unchecked hallucinations slipping into customer-facing products.
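Rules 2 and 3 are both threshold tests, so they can be written down as a single yes/no gate. The following is a sketch under assumptions: the function name `ai_use_allowed` and its parameters are illustrative, and the time estimates are whatever the employee can reasonably demonstrate.

```python
def ai_use_allowed(
    consequence_rating: int,
    has_signed_waiver: bool,
    is_core_work: bool,
    auto_verifiable: bool,
    verify_minutes: float,
    manual_minutes: float,
) -> bool:
    """Apply the Rule 2 threshold, then the Rule 3 verification test."""
    # Rule 2: tasks rated 4 or 5 need a signed human-in-the-loop waiver.
    if consequence_rating >= 4 and not has_signed_waiver:
        return False

    # Rule 3 applies to Core Work only.
    if is_core_work:
        # Verification must take under 50% of the manual task time.
        cheap_to_verify = manual_minutes > 0 and verify_minutes < 0.5 * manual_minutes
        return auto_verifiable or cheap_to_verify

    return True
```

A core task rated 2 that takes 60 minutes by hand and 20 minutes to check passes. The same task with a 40-minute check and no automated verification does not.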

Rule 4: Accountability is Non-Delegable

- Policy: “Any employee who deploys AI output, whether in an internal memo, an advertising campaign, or a customer email, accepts 100% personal responsibility for its accuracy. ‘The AI made a mistake’ is not a valid defense in this company.”
- Why: You must legally and operationally anchor the liability to a human. When employees know they cannot blame the bot, their usage behavior changes instantly from reckless speed to careful augmentation.

Next Steps

With these first steps behind you, it’s time for your organization to take both the threats and opportunities posed by AI seriously. Never before has a business tool been available to your employees and operations that not only processes data, but can help analyze the implications of data, recognize patterns, and recommend courses of action. This is what we call The Great Refactor. You and your team need to begin thinking seriously about which business tasks are better suited to human capabilities and limitations, software capabilities and limitations, and AI capabilities and limitations.

Strategically pick governance-safe pilots that rate low across the four constraints where you can demonstrate the value of AI-assisted workflows to your employee base and begin to develop core competencies. Using the Four Constraint ratings as a guide, formalize your Verification SOPs for key AI workflows and outputs.

Draft plans for company-wide AI Fluency training. Along with basic orientation to the available tools, consider building training around AI best practices like…

- Grounding
  - Principle: Give AI the documents it needs to “ground” outputs in known facts
  - Why: Retrieval-augmented generation (RAG) reduces hallucinations by 50-90%
- Regenerating
  - Principle: Re-run the same prompt from different angles or platforms and compare outputs
  - Why: Verifiable patterns will tend to emerge from multiple approaches to the same question
- Verification
  - Principle: Citations are leads, not proof; ask for them but double check
  - Why: AI-generated quotes and facts are hallucinated at predictable rates and currently, some 18-55% of AI citations are fabricated
- Flagging Uncertainty
  - Principle: Remind the LLM to say “I don’t know” and ask clarifying questions
  - Why: LLMs rarely admit uncertainty or ask questions, so you must explicitly invite both
- Human Decision Making
  - Principle: AI drafts, humans decide what to do
  - Why: Accountability for adverse outcomes cannot be delegated to AI
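The “Regenerating” practice above can be illustrated with a small comparison helper. This sketch assumes you have already collected several answers to the same question by hand or from different platforms. The function name `agreement` is hypothetical, and exact-match comparison only makes sense for short factual answers, not long prose.

```python
from collections import Counter


def normalize(answer: str) -> str:
    """Lowercase and collapse whitespace so trivial differences don't count."""
    return " ".join(answer.lower().split())


def agreement(answers: list[str]) -> tuple[str, float]:
    """Return the most common normalized answer and the share of runs giving it."""
    if not answers:
        raise ValueError("need at least one answer to compare")
    counts = Counter(normalize(a) for a in answers)
    top_answer, top_count = counts.most_common(1)[0]
    return top_answer, top_count / len(answers)
```

A low share is a signal to verify by hand. A high share is not proof, since models can repeat the same error, so the “Verification” practice still applies.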

Research how other companies in your vertical are using AI. Continue to prompt your executive team to think about and discuss how the availability of abundant and cheap knowledge production paired with verification procedures could reshape how you generate value in the marketplace.

Conclusion: The Window is Closing

Given the legal and financial liabilities discussed in this article, you might be tempted to ask whether the safest path forward is simply to cease using AI entirely. The answer is unequivocally no.

Stopping AI use is not a viable strategy; it is a guarantee of obsolescence. The availability of abundant and cheap knowledge production, when paired with robust verification procedures, will reshape how value is generated in the marketplace. AI is a business tool that can process data, analyze implications, recognize patterns, and recommend courses of action. You don’t want to be left behind. And, realistically, you can’t avoid it, because your employees are already using AI in unsupervised ways every day (the “shadow AI” described in Step 2).

The choice, therefore, is not between using AI and not using AI. The choice is between ungoverned, risky use and responsible, well-governed use. By implementing the governance policies and constraints outlined above, your organization can strategically pick “governance-safe pilots,” build AI fluency, and integrate verifiable AI capabilities into your core operations and business model. The companies that thrive in 2026 and beyond will be the ones that figure out how to use AI responsibly.

The “wild west” era of free AI experimentation ended on January 1, 2026. The insurance industry has signaled that it will no longer subsidize the learning curve.

Need Help With This? Navigating this new liability landscape can be complex. If you need assistance with your AI Use Inventory, Governance Constraints Analysis, or setting up these policies, the team at Seampoint can help. Reach out to us at https://seampoint.com/contact/ today.

[Download the “AI_Liability_Action_Checklist” PDF](https://drive.google.com/file/d/1XS9ocRkIrMz1FkmjOp-zveb7ee-JP9p2/view)
