AI 2026: Moving Beyond the Hype Cycle

Alan Berrey · December 28, 2025

For most people, the past two years with AI have been nothing short of astonishing. Suddenly, anyone could ask a computer to write, summarize, code, analyze, or brainstorm. Tasks that once took hours collapsed into minutes. The leap in capability felt revolutionary and discontinuous. This was not incremental software improvement. It was something fundamentally new. But now, the shine is wearing off.

People are discovering that AI gets things wrong. Not small things, but obvious things. It hallucinates facts. It invents sources. It confidently delivers answers that are verifiably false. Users are learning, sometimes the hard way, that AI can help, but only so much. And only if you are paying attention.

That experience is triggering a new set of questions.

- Was the AI industry overhyped?
- Will AI really deliver trillions of dollars in value?
- Will millions of people actually be replaced?

These doubts are not coming from skeptics on the sidelines. They are coming from people who use AI every day.

We Have Seen This Movie Before

In my lifetime, banking has gone through a complete interface transformation.

We moved from teller-dominated interactions to ATMs, then to online banking, then to mobile apps. Entire categories of transactions vanished from the physical branch.

And yet, the number of bank tellers employed today is roughly the same as it was decades ago. The work changed, but the role did not disappear. Routine transactions were absorbed by machines. What remained was higher-value human work: resolving problems, building trust, handling exceptions, and advising customers when things went wrong.

This pattern matters because it mirrors what we are starting to see with AI more broadly.

The Agent Moment That Wasn’t

A recent New Yorker article by Cal Newport points to a telling moment in 2025. AI agents, long promised as the next great leap, failed to meet expectations. These systems were supposed to act independently in the world, booking travel, managing workflows, and replacing large swaths of human effort. Instead, they stalled.

They got stuck. They made compounding errors. They struggled with basic judgment and coordination. Newport’s argument is not that AI is useless, but that the gap between promise and practice is real and persistent.

This gap is not accidental. It is structural.

The Friction We Are Running Into

We are now encountering the friction that is built into AI adoption. Not friction caused by slow organizations or resistant employees, but friction rooted in what AI fundamentally is and is not.

We will not fully realize the benefits of AI until we fully understand its limitations.

There are four things AI is not good at.

First, AI is not accountable

AI experiences zero consequences. When it makes a mistake, nothing happens to the model. There is no reputational loss, no legal exposure, no moral weight, and no corrective memory shaped by lived experience. The cost of error is always absorbed by a human or an institution. This single fact forces a hard boundary on delegation. Any task where failure carries legal, financial, safety, or ethical consequences must retain a human owner. AI can advise, draft, or assist, but it cannot be allowed to act autonomously where accountability matters, because accountability cannot be transferred.

Second, AI has limited wisdom

AI does not possess judgment. It does not understand context in the way humans do, and it does not recognize when a situation falls outside its training. When it encounters novelty, ambiguity, or conflicting signals, it does not pause or escalate. It guesses. Often the guess sounds plausible. Sometimes it is catastrophically wrong. Human judgment is not just pattern recognition; it is the ability to know when the pattern no longer applies. That capability remains stubbornly human, and it is precisely what is required in high-stakes, edge-case-heavy work.

Third, AI has no personal authenticity

AI cannot genuinely represent a person or an organization in moments that require trust, moral authority, or human presence. It can simulate warmth, but it cannot stand behind a promise. It cannot bear responsibility in a negotiation, deliver difficult news with lived empathy, or build long-term trust through shared experience. In many roles, the human is not a delivery mechanism for information. The human is the product. When authenticity is the value being delivered, automation hits a hard limit.

Fourth, AI is a statistical machine

AI does not know what is true. It predicts what is likely. Most of the time, this distinction does not matter. Some of the time, it matters enormously. The probabilistic nature of AI means that error is not a bug to be eliminated, but a permanent feature to be managed. As tasks become more complex and multi-step, small errors compound. This forces verification back into the workflow, often at significant cost. If verifying the output requires a human to re-derive the answer, the economic logic of delegation collapses.
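To make the compounding and verification points concrete, here is a minimal sketch in Python. It assumes, as a simplification, that each step of a task succeeds independently with the same probability p, so an n-step task comes out error-free with probability p to the power n (real errors are often correlated, so treat this as an illustration, not a model of any particular system). The function names, the 95% per-step accuracy, and the task timings are all hypothetical numbers chosen for illustration, not measurements.

```python
def chain_success_probability(per_step_accuracy: float, steps: int) -> float:
    """Chance that every step of a multi-step task is correct.

    Hypothetical simplification: steps fail independently and share one accuracy,
    so the result is per_step_accuracy ** steps.
    """
    if not 0.0 <= per_step_accuracy <= 1.0:
        raise ValueError("per_step_accuracy must be between 0 and 1")
    if steps < 0:
        raise ValueError("steps must be non-negative")
    return per_step_accuracy ** steps


def delegation_savings(manual_minutes: float, ai_minutes: float, verify_minutes: float) -> float:
    """Minutes saved by delegating a task to AI once verification time is counted.

    A negative result means delegation costs more than doing the work by hand.
    """
    return manual_minutes - (ai_minutes + verify_minutes)


if __name__ == "__main__":
    # Hypothetical per-step accuracy of 95%, for illustration only.
    for steps in (1, 5, 10, 20):
        p = chain_success_probability(0.95, steps)
        print(f"{steps:>2} steps: {p:.0%} chance of an error-free result")

    # Hypothetical timings: verifying means re-deriving the answer,
    # which takes as long as doing the task manually.
    print(delegation_savings(manual_minutes=60, ai_minutes=5, verify_minutes=60))
```

Under these assumptions, a 95% per-step accuracy leaves roughly a 60% chance of an error-free result at ten steps and about 36% at twenty. The second function captures the economic point: when checking the output takes as long as producing it by hand, the savings turn negative.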

AI Limitations Create Friction

Because of these intrinsic limitations, AI introduces four important points of friction into any real deployment.

Accountability friction. Someone must remain responsible for outcomes, which limits how far authority can be delegated.

Judgment friction. Novel or ambiguous situations require human intervention, preventing full automation of complex work.

Authenticity friction. Roles that depend on trust, presence, or moral agency cannot be handed off to machines.

Verification friction. Outputs must be checked, audited, and bounded, often erasing the efficiency gains of automation.

These frictions are not edge cases or temporary obstacles. They are foundational constraints. They determine where AI can safely operate, where it must remain supervised, and where it cannot be deployed at all. Any serious discussion of AI’s economic impact that ignores these frictions will consistently overestimate both speed and scale.

Understanding these limits is not pessimism. It is the prerequisite for using AI well.

The Real Work Ahead

The cooling of the AI hype cycle is not a signal to slow down. It is a signal to grow up.

The past two years proved that AI is powerful. The next phase will determine whether it becomes truly valuable. That outcome will not be decided by bigger models or better demos. It will be decided by whether people and organizations learn to work with AI as it actually is, not as they wish it to be.

For individuals, this means developing a more mature mental model of AI. Treat it neither as an oracle nor as a toy. Understand where it accelerates thinking and where it quietly introduces risk. Learn when to rely on it and, just as importantly, when to stop it. The most valuable skill in an AI-rich world will not be prompting. It will be judgment.

For companies, the call to action is sharper. Stop asking where AI can replace people and start asking where friction lives. Map accountability. Identify where judgment is required. Recognize where authenticity is the product. Measure verification cost honestly. These frictions are not signs of failure. They are the terrain on which durable advantage is built.

Organizations that ignore friction will remain stuck in pilots, chasing incremental efficiency and wondering why scale never arrives. Organizations that design for friction will concentrate human effort where it matters most and deploy AI where it actually works. That is how value compounds.

The history of technology tells us this clearly. Transformations do not happen when machines take over human work. They happen when machines remove the parts of work that should never have required human effort in the first place, allowing people to focus on judgment, responsibility, and connection.

AI will deliver enormous value. But only for those willing to see its limits clearly, design around them deliberately, and lead through friction instead of pretending it does not exist.

More to read

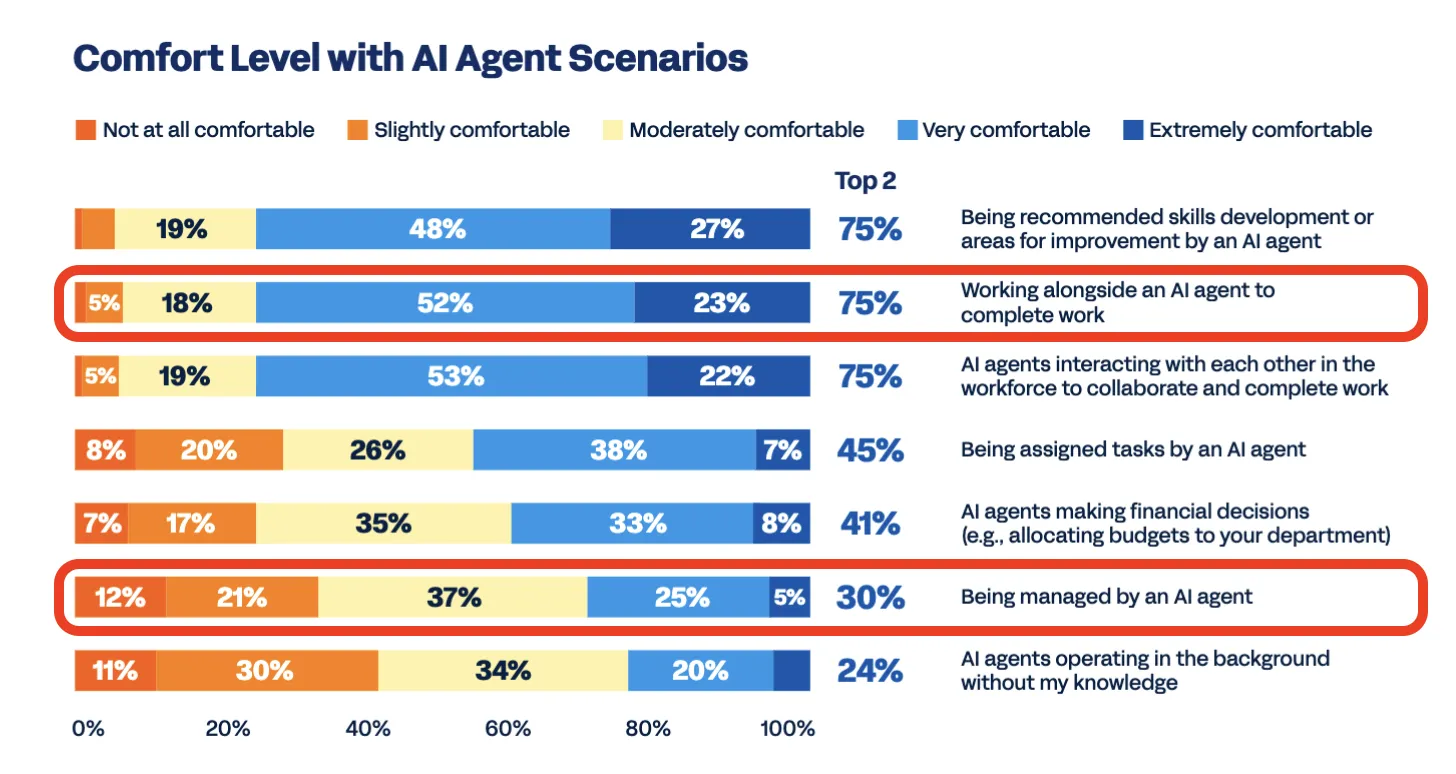

The Data is In: Nobody Wants an AI Boss

New research reveals the exact boundary where AI adoption turns into employee revolt. The revolt is about human agency, not the technology.

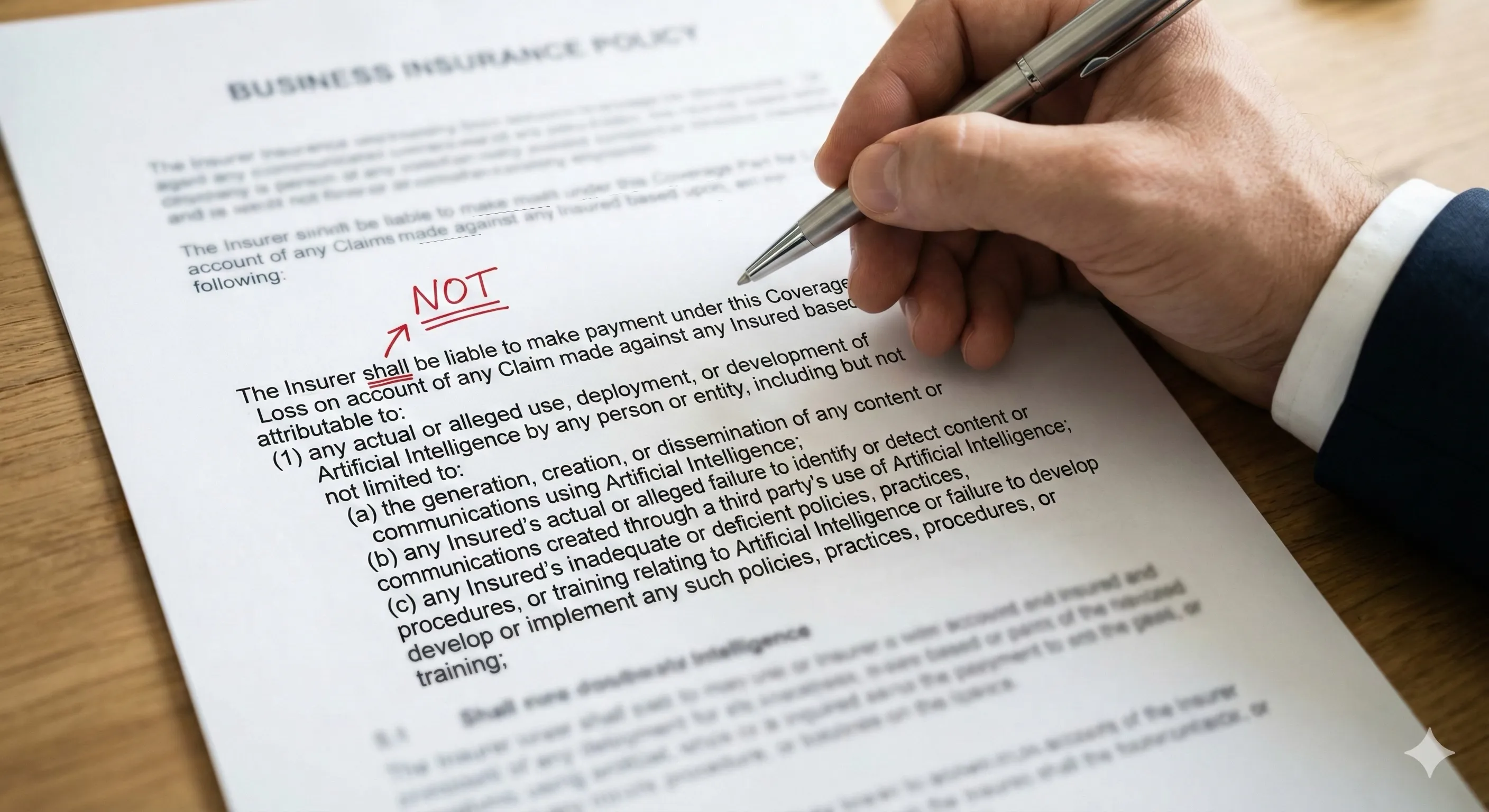

The AI Liability Squeeze

How the Insurance Industry Just Single-Handedly Redefined “Deployable AI” and What You Can Do About It

AI Transformation: Strategy vs. Operations

AI transformation looks different from the boardroom than from the shop floor. Bridging the gap between strategic vision and operational reality.

Where does AI belong in your processes?

Let us map the AI opportunities in your organization today.

Start a conversation