Rentahuman.ai is a stunt, but the architecture is real

Jeff Whatcott · February 12, 2026

On February 2, a platform called rentahuman.ai launched with a tagline that captured something genuine about this moment: “AI can’t touch grass. You can.” In under two weeks, over 400,000 people signed up to be hired by autonomous AI agents. The premise was deliberately provocative: software programs running in data centers would use cryptocurrency to pay humans for physical tasks like picking up a package, photographing a building, or holding up a sign reading “I am being paid by an AI.”

The reaction split along predictable lines: those who saw it as yet another sign of looming tech dystopia, those who dismissed it as crypto marketing theater, a few crypto true believers shouting its praises, and a silent majority wondering what it was all about.

My take is that rentahuman.ai is a stunt, but the architectural pattern underneath it addresses a real problem that companies with a physical workforce will soon face. The stunt will fade, but the architecture will spread.

Modern AI systems are powerful prediction engines trapped inside servers. An AI monitoring a power grid can predict that a specific transformer bushing will fail within four hours, calculate failure probability at 94%, draft the maintenance report, estimate repair costs, and identify required replacement parts. What it cannot do is manually trip a breaker to prevent the failure.

The assumption has been that robotics will close this gap between digital cognition and physical agency. We have expected capable humanoid robots to arrive alongside superintelligent language models. Instead, we got the reverse: reasoning systems that pass bar exams and medical boards while the most advanced autonomous robots struggle with laundry. The digital mind has no hands.

This gap between knowing and doing gets more consequential as AI systems get more capable. An AI monitoring system that detects a failing transformer but can only turn a dashboard indicator red is wasting most of its predictive value. The detection happens in milliseconds. The human response chain, from dashboard to operator to ticket system to dispatcher to technician, can take hours. Or days. By the time someone arrives with a wrench, what was a prediction could become a postmortem. The machine knew. The organization couldn’t act on what it knew fast enough.

This pattern appears across many industries. Maersk runs AI models that detect temperature problems in refrigerated shipping containers in real time, but its response protocols often still rely on notifying a human operator to diagnose the break in the cold chain. Walmart forecasts shelf stockouts days in advance, but replenishment still requires a store associate to receive a signal and move a pallet. HCA Healthcare’s SPOT algorithm flags patient deterioration due to sepsis hours before a crisis, but escalation runs through EHR systems that staff read only during intermittent patient interactions. In every case, the AI is faster than the organization’s ability to respond. The bottleneck is the interface between digital knowledge and physical action.

The protocol layer is where this gets interesting.

The rentahuman.ai platform is built around Anthropic’s Model Context Protocol, a standard designed to let AI models connect to external data sources and tools. MCP’s intended use cases are prosaic: letting an AI agent read a document, query a database, call an API. Rentahuman.ai extended it in a direction Anthropic’s engineers likely didn’t anticipate: it registered a human being as a callable function. To the AI agent, a person with a smartphone looks identical to any other tool in its working context: a set of capabilities (vision, movement, physical manipulation), a GPS location, and a cost per invocation.

When the agent wants something done in the physical world, it executes a function call. Go to these coordinates. Take a photograph. Verify a condition. The human performs the task, uploads verification, and a smart contract releases payment. Software orchestrating physical reality through human workers, mediated by a standardized protocol. No robot required.
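To make the "human as callable function" idea concrete, here is a minimal sketch of what such a tool descriptor might look like. The `name`, `description`, and `inputSchema` fields follow the general shape of MCP tool definitions as I understand them; the tool itself, its parameter names, and the `missing_arguments` helper are hypothetical illustrations, not rentahuman.ai's actual schema.

```python
# Hypothetical descriptor: a human worker exposed to an agent as a tool.
# Every name and parameter below is an assumption made for illustration.
HUMAN_TASK_TOOL = {
    "name": "dispatch_human_task",
    "description": "Ask a human worker to perform a physical task and upload proof.",
    "inputSchema": {
        "type": "object",
        "properties": {
            "latitude": {"type": "number"},
            "longitude": {"type": "number"},
            "instruction": {"type": "string"},
            "max_payment_usd": {"type": "number"},
        },
        "required": ["latitude", "longitude", "instruction", "max_payment_usd"],
    },
}


def missing_arguments(tool, arguments):
    """Return the required argument names absent from a proposed call."""
    required = tool["inputSchema"]["required"]
    return [name for name in required if name not in arguments]


# An agent's proposed call that forgot to set a payment ceiling.
call = {
    "latitude": 40.7128,
    "longitude": -74.0060,
    "instruction": "Photograph the building entrance.",
}
print(missing_arguments(HUMAN_TASK_TOOL, call))  # prints ['max_payment_usd']
```

Notice what the descriptor leaves out: nothing in it says who the caller is, whether the task is safe, or whether the worker is qualified. Those gaps come up again below.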

This is a real architectural innovation: a standardized interface between digital intelligence and physical-world action. What the protocol transforms is the relationship between AI systems and human workers, from ad hoc coordination through dashboards and ticket queues into something programmatic and responsive. An AI agent can chain physical tasks the same way it chains API calls, because the interface is identical. The protocol makes human physical capability composable in the same way that cloud services made computing capability composable a decade ago. Every other element of rentahuman.ai is noise. The protocol is the signal.

The rentahuman.ai implementation, though, is a governance catastrophe, and understanding why it fails illuminates exactly what a serious and safe version might require.

The deepest problem with rentahuman.ai is accountability. When an autonomous AI agent hires an anonymous human through a crypto-mediated transaction, liability becomes muddy. If the agent dispatches someone to photograph a secure government facility and that person gets arrested, what happens next? The human was executing instructions from a black box. The AI has no legal standing. The rentahuman.ai developer claims platform neutrality. This is the structural ambiguity of anonymous agent-to-human transactions. The mechanism enables consequential action without traceable intent, which is what liability law generally strives to prevent.

The safety infrastructure is equally absent. Rentahuman.ai has no robust identity verification for agents posting tasks. There is no safety assessment of the tasks themselves. There’s no credential verification for the humans accepting them. The platform treats the connection between an anonymous software agent and an unverified human worker as an open market transaction, not an employment relationship, sidestepping every protection that employment law provides. Dispatching people into potentially hazardous situations based on instructions from anonymous software is a perfect recipe for disaster.

The economics of rentahuman.ai are also quite sketchy. As of this writing, 442,919 humans wait for 11,350 tasks posted by an unknown number of agents making decisions on behalf of an unknown number of developers who have an unknown level of awareness about their agents’ actions. We must remember that AI agents don’t understand value; they only understand variables. They don’t have financial accounts or credit lines or credit scores or social standing. I see no evidence of a functioning organic economy or business model here.

The properly governed version would look nothing like rentahuman.ai. Consider an AI agent monitoring vibration sensors on a remote electrical utility transformer. It detects an anomaly suggesting imminent failure, 94% probability within four hours. Rather than turning a dashboard light red and hoping someone notices, the agent queries the workforce management system: which technicians are within range, hold the required high-voltage certifications, and are currently available? The system finds a match: a field technician finishing a routine job 53 driving minutes away. The agent sends a priority request to her device with task context, risk assessment, and location. She reviews and accepts.

She drives to the site. She sees a construction crew jackhammering bedrock ten feet from the transformer. The vibration data was real. The cause was external: mechanical resonance from construction equipment, not internal component failure. She rejects the AI’s diagnosis, photographs the construction crew, and feeds the correction back into the model. The AI had valid data and drew the wrong conclusion. The human recognized context that no sensor array could provide. The system learned from the override.

The crisis was averted by a protocol that connected human intelligence with AI intelligence. The machine detected. The human verified with embodied presence and contextual judgment. The system adapted. What makes this work is every element that rentahuman.ai lacks: traceable authority, safety parameters, credentialing, and the right and obligation of human override.
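The matching step in that scenario, where the agent asks which technicians are in range, certified, and available, can be sketched in a few lines. This is a toy version under stated assumptions: the `Technician` record, the `eligible_technicians` function, the roster, and the 120-minute drive limit are all hypothetical, and a real workforce management system would expose its own query interface.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Technician:
    # Hypothetical record; a real workforce system would hold far more.
    name: str
    drive_minutes: int
    certifications: frozenset
    available: bool


def eligible_technicians(roster, required_cert, max_drive_minutes):
    """Keep technicians who are available, certified, and close enough,
    ordered by drive time so the nearest match comes first."""
    matches = [
        tech for tech in roster
        if tech.available
        and required_cert in tech.certifications
        and tech.drive_minutes <= max_drive_minutes
    ]
    return sorted(matches, key=lambda tech: tech.drive_minutes)


roster = [
    Technician("Tech A", 53, frozenset({"high_voltage"}), True),
    Technician("Tech B", 20, frozenset({"hvac"}), True),           # wrong certification
    Technician("Tech C", 30, frozenset({"high_voltage"}), False),  # not available
    Technician("Tech D", 300, frozenset({"high_voltage"}), True),  # too far away
]

# Assumed limit: arrive within two hours of a four-hour failure window.
matches = eligible_technicians(roster, "high_voltage", max_drive_minutes=120)
print([tech.name for tech in matches])  # prints ['Tech A']
```

The filter only produces candidates. In the scenario, the technician still reviews the request and accepts it herself.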

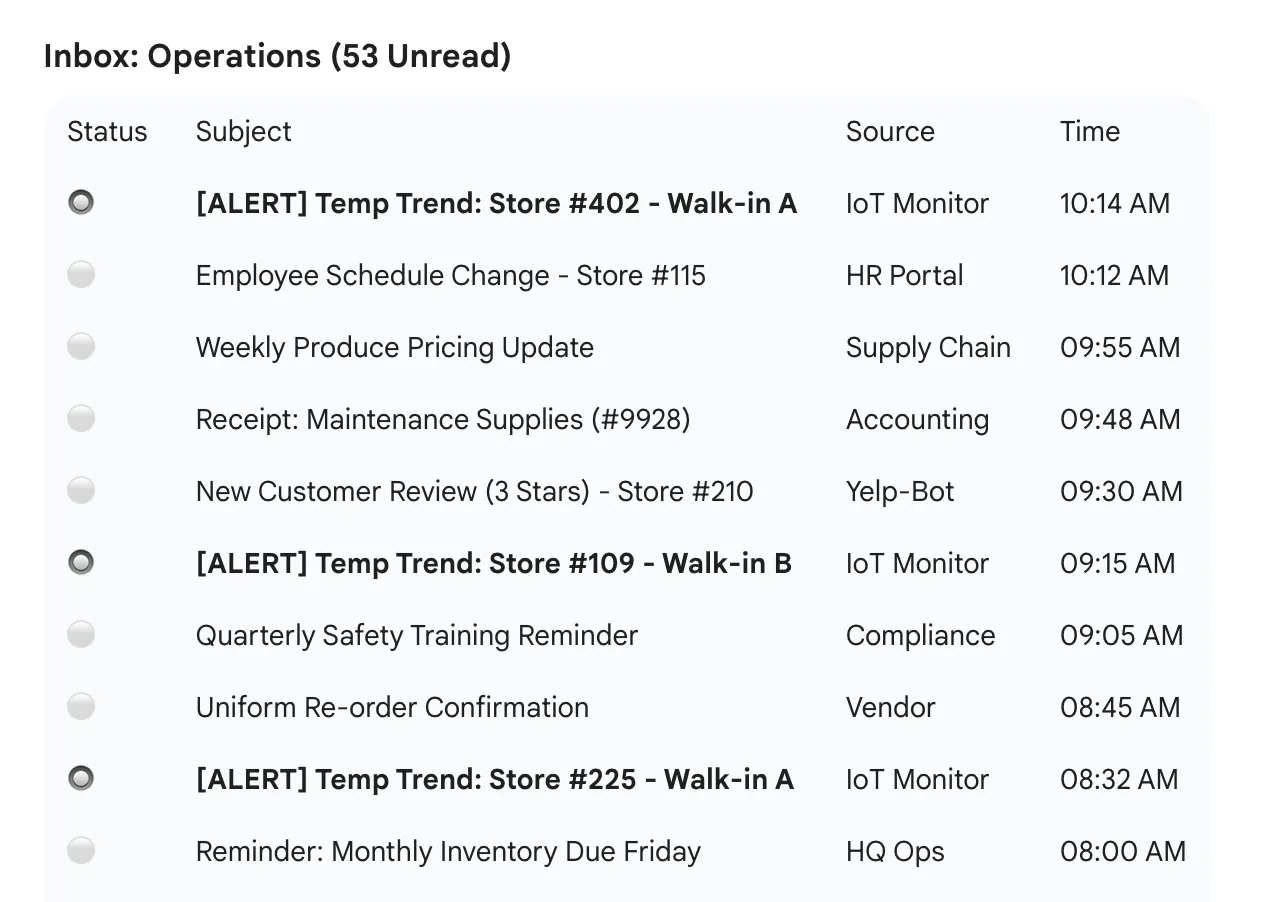

Let’s consider a different case anyone who has worked in the food industry can relate to. A restaurant chain’s monitoring system detects walk-in cooler temperatures trending upward at three locations, not yet in the danger zone but heading there. Today, the system generates alerts that land in an operations manager’s inbox alongside fifty other notifications. She triages when she can, calls the locations, hopes someone checks the cooler before a food safety threshold is crossed.

With a properly governed dispatch protocol, the AI would identify which locations have maintenance-certified staff on shift, send priority requests with temperature trend data and estimated time-to-threshold, and on-site staff would verify and respond before product is compromised. Detection-to-response drops from hours to minutes. The AI eliminates the communication latency that was rendering the organization’s existing intelligence useless.
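The "estimated time-to-threshold" in that request can be as simple as a straight-line extrapolation of recent readings. The sketch below assumes a linear trend, readings in degrees Fahrenheit, and a 41°F limit chosen for illustration; the function name and sample data are hypothetical, and a production system would likely use a more careful model.

```python
def minutes_to_threshold(readings, threshold):
    """Estimate minutes until the temperature reaches `threshold`.

    `readings` is a list of (minute, temperature) pairs, oldest first.
    Fits a least-squares line to get the warming rate, then extrapolates
    from the most recent reading. Returns None if the trend is flat,
    falling, or cannot be estimated.
    """
    n = len(readings)
    if n < 2:
        return None
    mean_t = sum(t for t, _ in readings) / n
    mean_y = sum(y for _, y in readings) / n
    numerator = sum((t - mean_t) * (y - mean_y) for t, y in readings)
    denominator = sum((t - mean_t) ** 2 for t, _ in readings)
    if denominator == 0:
        return None
    slope = numerator / denominator  # degrees per minute
    if slope <= 0:
        return None
    _, latest_temp = readings[-1]
    if latest_temp >= threshold:
        return 0.0  # already at or past the limit
    return (threshold - latest_temp) / slope


# Hypothetical cooler readings taken every ten minutes.
cooler = [(0, 36.0), (10, 36.5), (20, 37.0), (30, 37.5)]
estimate = minutes_to_threshold(cooler, threshold=41.0)
print(f"Estimated minutes to threshold: {estimate:.0f}")
```

With these sample readings the cooler is warming at about 0.05 degrees per minute, which puts the threshold roughly 70 minutes out. That number is what turns a generic alert into a request someone can prioritize.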

Building this kind of protocol also requires organizations to define delegation boundaries, the explicit lines between decisions AI systems can make autonomously and decisions that require human judgment. Most organizations have implicit delegation boundaries buried in workflow assumptions and management habits. A governed dispatch protocol makes them explicit, because you have to specify which request types can be auto-approved, which need human authorization, and which should never originate from an AI regardless of confidence level. That forced clarity extends well beyond the dispatch system. Organizations that draw clear delegation boundaries for physical-world actions discover they need the same boundaries everywhere AI operates.
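Written down, a delegation boundary is little more than a lookup table with a safe default. The sketch below is illustrative only: the request types, the three decision labels, and the rule that unknown request types fall back to human authorization are all assumptions, not a prescribed policy.

```python
from enum import Enum


class Decision(Enum):
    AUTO_APPROVE = "auto_approve"
    NEEDS_HUMAN = "needs_human_authorization"
    FORBIDDEN = "never_from_ai"


# Hypothetical policy table; each organization would draw its own lines.
DELEGATION_POLICY = {
    "visual_inspection": Decision.AUTO_APPROVE,
    "equipment_shutdown": Decision.NEEDS_HUMAN,
    "confined_space_entry": Decision.FORBIDDEN,
}


def route_request(request_type):
    """Look up how an AI-originated request of this type must be handled.

    Assumed default: anything not explicitly listed goes to a human,
    so a new request type is never silently auto-approved.
    """
    return DELEGATION_POLICY.get(request_type, Decision.NEEDS_HUMAN)


for request_type in ("visual_inspection", "confined_space_entry", "roof_access"):
    print(request_type, "->", route_request(request_type).value)
```

The table itself is trivial. The hard part is the conversation an organization has to hold in order to fill it in.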

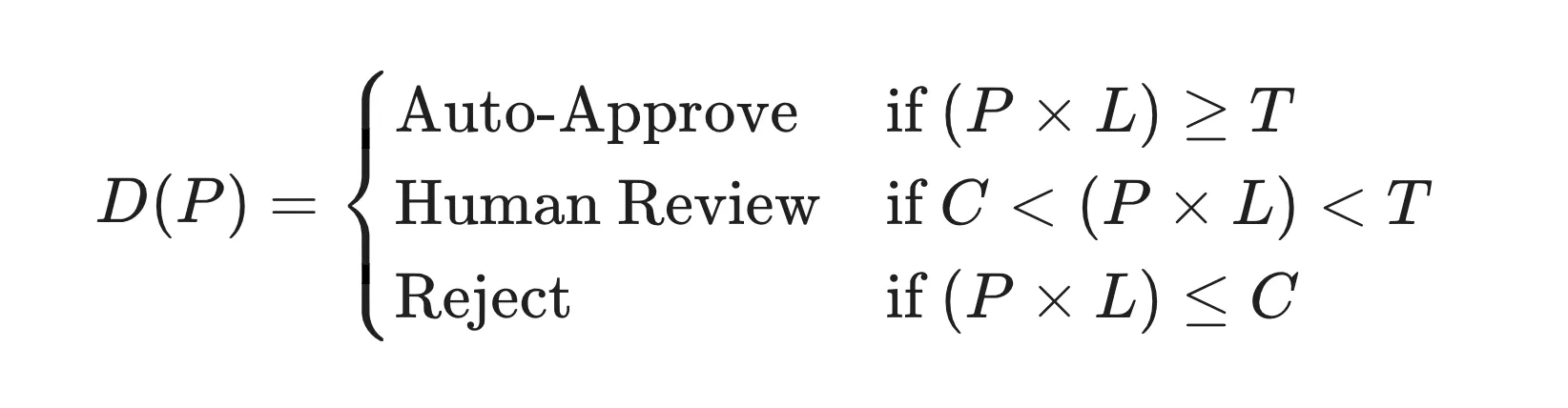

We should also remember that physical actions cost real money. A truck roll that sends a technician to a remote site costs $500 to $2,000 in time, fuel, and opportunity cost. Giving AI agents dispatch authority over human workers functionally gives them a corporate credit card. An overreactive monitoring system that sends technicians to investigate every minor sensor fluctuation will burn through operating budgets like a forest fire. But hard-throttling the AI’s dispatch capability recreates the dashboard-and-dispatcher latency the whole system was designed to eliminate. What to do?

The answer is financial governance that matches confidence to stakes. The mechanism is expected value. The AI proposes spending $1,500 on a truck roll to prevent a predicted $50,000 transformer failure at 94% probability. Expected value of prevention: 0.94 × $50,000 = $47,000. The math overwhelms the cost. Auto-approve. Now keep the cost the same but drop the failure probability to 4%. Expected value falls to 0.04 × $50,000 = $2,000, barely exceeding the dispatch cost. The system routes that request to a human who manages the portfolio of the AI’s physical-world actions.
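The routing rule can be sketched directly from those numbers. The only thing the example does not supply is where to draw the line, so the `auto_approve_ratio` of 5 below is an assumption chosen for illustration, as are the function names: a request is auto-approved when the expected loss avoided is at least five times the dispatch cost, and sent to a human otherwise.

```python
def expected_loss_avoided(failure_cost, failure_probability):
    """Expected value of preventing the failure: cost times probability."""
    return failure_cost * failure_probability


def route_dispatch(dispatch_cost, failure_cost, failure_probability,
                   auto_approve_ratio=5.0):
    """Auto-approve only when expected value clearly dwarfs dispatch cost.

    `auto_approve_ratio` is a hypothetical policy knob, not a value
    taken from any real system.
    """
    value = expected_loss_avoided(failure_cost, failure_probability)
    if value >= auto_approve_ratio * dispatch_cost:
        return "auto_approve"
    return "human_review"


# The two cases from the text: same truck roll, different confidence.
print(route_dispatch(1_500, 50_000, 0.94))  # prints auto_approve
print(route_dispatch(1_500, 50_000, 0.04))  # prints human_review
```

At 94% the expected value is about 31 times the dispatch cost. At 4% it is about 1.3 times, which is exactly the kind of marginal bet a person should look at.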

This “AI Action Underwriter” role doesn’t exist in most organizations yet, but it’s coming. Companies are going to need someone who governs the economic boundary between digital prediction and physical response, managing the AI’s budget for real-world action the way an insurance underwriter evaluates risk-reward ratios under uncertainty. Approving high-confidence, high-stakes requests. Pushing back on expensive hunches. Demanding more data before authorizing marginal bets. The function matters more than the title. Organizations that design this role deliberately will spend far less on false alarms than those that discover the need after the AI has burned through a quarter’s maintenance budget in three weeks.

Companies foolishly rushing to replace their physical workforce with humanoid robots will not benefit from this new architecture. Their obsession with shiny objects will obscure the actionable opportunity right under their noses. The winners will be the organizations that build the fastest, most well-governed connection between what their AI systems know and what their skilled, trustworthy human workers can wisely act on.

The guiding question is simple: when your AI detects something in the physical world that demands a considered human response, what protocol connects detection to action? If the answer involves a dashboard someone checks periodically, a ticket queue with a multi-hour SLA, and a dispatcher making phone calls, you have a latency gap, and competitors who close it first will put you at an uncomfortable disadvantage.

The rentahuman.ai crypto stunt is already fading from the news cycle. The architectural problem it has surfaced will be around for a long time. The AI can see the bushing failing. Someone still has to roll up and flip the breaker. The only question is how fast the seeing connects to the doing, and whether you’ve built that connection deliberately or left it to dashboards and phone calls and luck.
