We've Crossed the AGI Humility Threshold

Jeff Whatcott · December 17, 2025

Karpathy, Dettmers, and Sutskever

Ilya Sutskever and Andrej Karpathy have both walked back the “scaling to AGI” thesis in recent weeks. Not hedged it. Not qualified it. Walked it back. Sutskever first, then Karpathy:

“From 2020 to 2025, it was the age of scaling. Now the scale is so big. Is the belief really, ‘Oh, it’s so big, but if you had 100x more, everything would be so different?’ I don’t think that’s true. So it’s back to the age of research again, just with big computers.”

“The industry is making too big of a jump and is trying to pretend like this is amazing, and it’s not. It’s slop.”

These aren’t two random AI skeptics. Sutskever co-founded OpenAI and led the technical team that built GPT. Karpathy was a founding member of OpenAI’s research team and led Tesla’s Autopilot vision effort for five years. They built the scaling paradigm that has dominated the race to artificial general intelligence (AGI) in recent years. When the original architects start resetting expectations, it’s time to pay attention.

Sutskever is saying that adding more GPUs is never going to deliver what elite Silicon Valley circles have treated as imminent since 2022, the same year he led a party chant of “feel the AGI!” He essentially says that aggressively scaling pre-training and reinforcement learning has unexpectedly made AI models narrow: the opposite of the “general” in artificial general intelligence. He offers a fun analogy: two students study competitive programming. The first practices for 10,000 hours and memorizes every technique. The second practices for 100 hours and still does well. Which has the better career? The second. “The models are much more like the first student, but even more.”

Karpathy reports the same phenomenon through recent experience with a novel vibe-coding project that needed more architectural judgment than pattern completion. He discovered that the frontier coding models were unhelpful because “they’re not very good at code that has never been written before, which is what we’re trying to achieve when we’re building these models.”

The Collision with Physical Reality

Why is this happening now? Why has the curve flattened?

While Sutskever and Karpathy observe the symptoms, AI researcher Tim Dettmers recently diagnosed the disease: Computation is physical.

For the last few years, we have treated scaling laws as abstract mathematical guarantees. Dettmers argues that we have now crashed into the physical limits of hardware. He points out that while transistors (computation) get smaller and cheaper, moving data to them (memory and bandwidth) does not scale at the same rate.

We have entered a phase where “linear progress needs exponential resources.” In the early days of GPT, linear investment yielded exponential capability jumps. Now, the equation has inverted. We are paying exponential costs in energy, memory movement, and data center complexity just to eke out linear, incremental gains.
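To make “linear progress needs exponential resources” concrete, here is a toy calculation. It assumes, purely for illustration, that a capability score grows with the logarithm of compute, so each additional point costs ten times more than the last. The functional form, the `points_per_decade` parameter, and the function names are assumptions for this sketch, not Dettmers’ own model or a measured scaling law.

```python
def compute_needed(target_score: float,
                   points_per_decade: float = 1.0,
                   base_compute: float = 1.0) -> float:
    """Compute required to reach target_score.

    ASSUMPTION (illustrative only):
        score = points_per_decade * log10(compute / base_compute)
    Inverting that relation gives the exponential cost below.
    """
    return base_compute * 10 ** (target_score / points_per_decade)


def cost_table(max_score: int) -> list[tuple[int, float]]:
    """Pair each whole-number score with the compute it needs under the assumption."""
    return [(score, compute_needed(score)) for score in range(max_score + 1)]


if __name__ == "__main__":
    # Each +1 step in score multiplies the required compute by 10.
    for score, compute in cost_table(5):
        print(f"score {score}: {compute:,.0f}x baseline compute")
```

Under this assumed curve, the gains come in equal steps while the bill grows by a constant multiple each time, which is the inversion described above.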

This explains the shift in tone from the pioneers. The idea space of AGI has collided with the physical reality of computation. Throwing more compute at the problem isn’t just yielding diminishing returns; it is hitting a hard ceiling where the models become memory-bound and economically unviable before they become superintelligent.

From “Feel the AGI” to “If the Ideas Are Correct”

This collision has fundamentally altered the language Sutskever and Karpathy use as they discuss the future. Here’s Sutskever talking about his approach at Safe Superintelligence, his new company:

“If the ideas turn out to be correct—these ideas that we discussed around understanding generalization—then I think we will have something worthy. Will they turn out to be correct? We are doing research.”

When Karpathy discusses timelines, his tone is equally tentative:

“I feel like the problems are tractable, they’re surmountable, but they’re still difficult. If I just average it out, it just feels like a decade to me.”

What was once the confidence of inevitability has become “If the ideas turn out to be correct” and “It just feels like.” This is the language of faith, hope, and unknown unknowns. It is the language that relies on a deus ex machina, whether a secret Q* algorithm or a quantum computing breakthrough, to save the curve. It is not the language of clear lines of sight, scaling laws, extrapolated curves, and convergence. If they could speak in such language, they absolutely would. They don’t, because their integrity and the physical reality of the hardware will not let them.

The Era of Careful, Pragmatic Adoption

This is a substantial reset that may pop the AI platform valuation bubble. But the inference chips will continue humming because they’re already delivering value. Call it merely useful AI if you want; it’s useful enough that early adopters are paying surprising sums for it.

This marks the end of the “Feel the AGI” party and the beginning of a sober morning after. Dettmers describes this next phase not as the “Singularity,” but as “Economic Diffusion.” We are transitioning from a period of exponential expectations to one of careful, pragmatic adoption.

The competitive advantage isn’t in model training runs anymore; those are increasingly commoditized. The advantage is in the implementation. It’s in the integration architecture: how you design boundaries between human judgment and machine processing, how you make verification cheap enough to enable delegation, how you govern high-stakes decisions where errors compound. Organizations that master this diffusion now will extract substantially more value from current AI systems and will be positioned to absorb capability jumps if and when they occur.
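One way to picture such a boundary is as an explicit routing rule. The sketch below is a minimal illustration, not a recommended policy: the `Task` fields, the cost units, the `high_stakes_threshold` default, and the three routes are all hypothetical names and values chosen for this example.

```python
from dataclasses import dataclass
from enum import Enum


class Route(Enum):
    AI_AUTONOMOUS = "ai_autonomous"
    AI_WITH_REVIEW = "ai_with_human_review"
    HUMAN_ONLY = "human_only"


@dataclass(frozen=True)
class Task:
    name: str
    error_cost: float         # estimated cost if a wrong output ships (hypothetical units)
    verification_cost: float  # cost for a human to check the AI's output
    human_cost: float         # cost for a human to do the task from scratch


def route(task: Task, high_stakes_threshold: float = 10_000.0) -> Route:
    """Assign a task to a route using simple, assumed cost comparisons."""
    # Governance rule: high-stakes decisions stay with humans.
    if task.error_cost >= high_stakes_threshold:
        return Route.HUMAN_ONLY
    # If a mistake costs no more than checking for it, skip the check.
    if task.error_cost <= task.verification_cost:
        return Route.AI_AUTONOMOUS
    # Delegation pays off only when checking is cheaper than doing.
    if task.verification_cost < task.human_cost:
        return Route.AI_WITH_REVIEW
    return Route.HUMAN_ONLY


if __name__ == "__main__":
    examples = [
        Task("tag support tickets", error_cost=5, verification_cost=10, human_cost=20),
        Task("draft contract clause", error_cost=2_000, verification_cost=50, human_cost=400),
        Task("approve loan", error_cost=50_000, verification_cost=100, human_cost=300),
    ]
    for task in examples:
        print(f"{task.name}: {route(task).value}")
```

The middle branch is where cheap verification matters: lowering `verification_cost` moves more tasks from human-only work into delegated work with review.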

Some Assembly Required

Sutskever and Karpathy’s reset also revealed something unexpected: they’re saying that even if AGI arrives, it will require the same pragmatic adoption patterns as today’s AI.

Sutskever’s vision of superintelligence is not an oracle that arrives with answers. He describes:

“a superintelligent 15-year-old that’s very eager to go. They don’t know very much at all, a great student, very eager. You go and be a programmer, you go and be a doctor, go and learn.”

The deployment itself, he suggests, will require care:

“it will involve some kind of a learning trial-and-error period. It’s a process, as opposed to you dropping the finished thing.”

This is a different picture of AGI than what was discussed just months ago. Not a god in a box. Not an alien superintelligence that arrives with solutions to all our problems. Dettmers goes further, calling the “Oxford-style” philosophical idea of a self-improving superintelligence a “fantasy” because it ignores the resource constraints of the physical world.

We are left with a capable learner that still requires onboarding, supervision, governance, and integration into organizational reality. Useful? Yes. But some assembly required.

The Hitchhiker’s Guide to the Galaxy offered a parable for this. Deep Thought, the great computer, computed for 7.5 million years and produced the Answer to the Ultimate Question of Life, the Universe, and Everything. The answer was 42. It was technically correct, but utterly useless without interpretation, context, and judgment about what to do with it.

Even a system that “has the answer” may not have the right question and may not understand why the answer matters in your specific context. Sutskever’s “continual learner” framing suggests any future AGI will be more like a capable new hire than an oracle. It will learn fast—something current AI cannot do—but will still need clear boundaries defining what it should handle autonomously versus where humans provide judgment.

Designing for the Messy Reality

We are living through a moment of uncharacteristic humility from the people who built the AI paradigm, backed by the hard physics of computation. It is now clear that the next three years will not look like the last three. Organizations have a window to properly architect boundaries between human and AI work without worrying that imminent AGI will make it all moot.

The question for executives is not whether to adopt AI. The models are useful and will only get more useful. The question is whether you’re designing for the messy reality the AI pioneers describe—or for a frictionless automation future that even the people building these systems don’t expect.

The confidence gap Sutskever and Karpathy have revealed, and the physical walls Dettmers has identified, tell us which scenario to design for first. Build robust boundaries between human and AI work. Develop governance for high-stakes decisions. Extract maximum value from the AI that exists.

That architecture works whether AGI arrives in five years, fifty, or never. So do that.

More to read

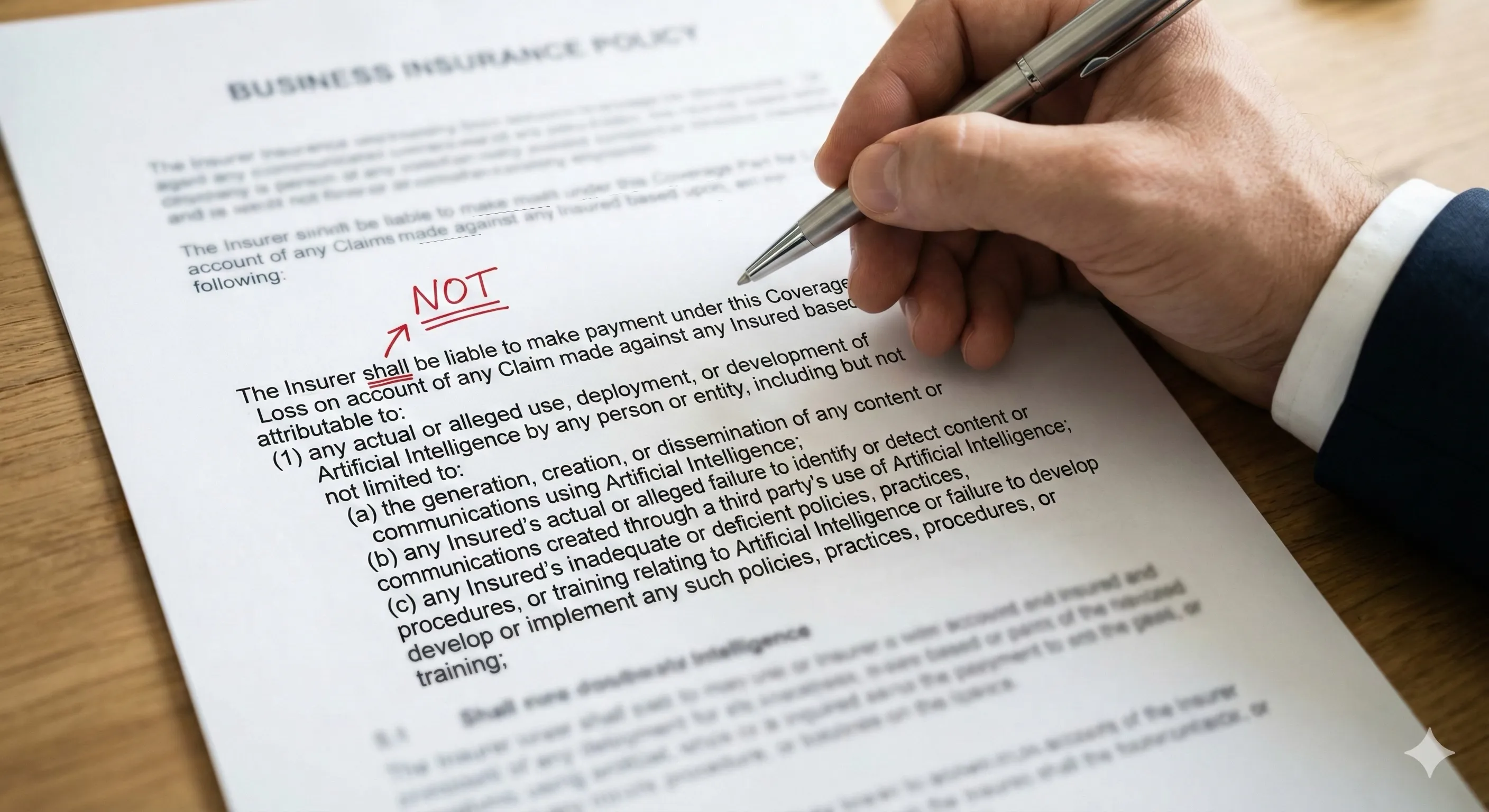

The AI Liability Squeeze

How the Insurance Industry Just Single-Handedly Redefined “Deployable AI” and What You Can Do About It

8 Hours with AI and College Football Bias

A BYU fan tests five AI systems on college football rankings. The experiment reveals how bias shapes AI outputs—even when objectivity is the goal.

The Four Actors in Hybrid AI Architecture

A framework for hybrid AI architecture: how Humans, Hardware, Software, and AI Agents must each be assigned to the right tasks for AI to succeed.

Where does AI belong in your processes?

Let us map the AI opportunities in your organization today.

Start a conversation