The Four Actors in Hybrid AI Architecture

Timothy Robinson · March 20, 2026

On January 12th of this year, Anthropic, the company behind the popular chatbot Claude.ai, fired a shot across the bow of the American business community with the release of a new desktop app for Pro subscribers called Cowork. Cowork came about when the engineers at Anthropic realized that more and more people were using Anthropic’s “Code” tool, and not the Claude chatbot itself, to do things that they used to use the chatbot to do: vacation planning, email cleanup, even writing documents. Code was simply better at it. And Anthropic’s leadership realized that more people would do the same if they weren’t put off by the name, “Code,” and by the command-line interface.

Cowork has several key advantages over chatbots like Claude, Grok, or ChatGPT. The most significant is that, like “Code,” it was built to be “agentic.” That means if you give it a task, it gets to work with persistent, autonomous execution. You can also give it access to the files on your computer and have it do work there, rather than having to upload them. That seems trivial, but combined with its agentic structure it means that you can ask Cowork to build things for you and it can assemble the tools it needs on the fly to get the job done (like terminal access, Python or SQL libraries, or references to other files). All without having to chat about it. And it can write code, too, if deterministic software would be a better approach to accomplishing your task.

It actually spawns many copies of itself to do different things (sometimes called “skills”) like breaking a job down into tasks or making a plan or reading a PDF or writing a script for a repeated function or opening a spreadsheet and launching a bit of code to do the math to analyze it. It’s called “orchestration” and it represents a step-change in the way work can be refactored. Engineers have been doing it for over a year now, but Cowork allowed product managers and financial analysts, and everyone else, to catch up.

About a month later, OpenAI shipped their own desktop app for the latest ChatGPT engine (called Codex). And about a week after that both companies launched upgraded versions of their most capable models (Claude’s Opus 4.6 and ChatGPT’s Codex 5.3), literally within a few hours of each other, pouring fuel on the fire. Right around the same time, news stories started making the rounds that the engineers at these companies weren’t writing code anymore. The models are better at it now. The engineers just tell the models what to build, go for a run, and come back to make edits and corrections before moving on to the next section. Boris Cherny, head of Claude Code, famously said, “I have not edited a single line of code…by hand since November…” Anthropic’s head of product, Scott White, described the shift as “almost transitioning into vibe working.”

By the end of the following week all this had triggered a $285 billion selloff across enterprise stocks for traditional software companies like Salesforce, Atlassian, and ServiceNow as investors suddenly grasped what practitioners had been discovering for months: the old boundaries between “thinking” and “executing” in software are beginning to dissolve, and a new hybrid AI architecture is taking shape.

Hybrid Systems: Deterministic Software Meets Probabilistic AI

What these teams are building, whether they use the word or not, are hybrid systems. Systems where probabilistic intelligence (AI) and deterministic execution (software) work together in a carefully structured partnership. The Large Language Model (like ChatGPT) handles what software cannot: ambiguity, semantic inference, unstructured input, natural language interpretation. Traditional software code handles what LLMs cannot: exact arithmetic, reproducible logic, security enforcement, auditable state management. And hovering over both is a third presence that neither tool can replace: a human being who decides what should happen, who is accountable when it goes wrong, and who understands the stakes in a way that no statistical model or rule engine ever will.

The pattern shows up in small moments and large ones. A developer prompts Claude to read an angry customer email and propose a structured response: {"Action": "Refund", "Amount": "$50"}. Deterministic code then validates that proposal against hardcoded business rules: is the item within the 30-day return window? Is the amount within the auto-approval threshold? The deterministic code, not the AI, executes the API call. The AI proposes. The code validates. The human designed the guardrails and reviews the exceptions. Three actors, each performing the operation their architecture is designed for.
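The propose, validate, execute split can be sketched in a few lines of Python. This is a minimal illustration, not a production design: the `Order` record, the `validate_refund` function, and the $50 auto-approval limit are assumptions made up for the example. The AI’s proposal arrives as plain data, and only the deterministic checks decide whether it runs automatically or goes to a person.

```python
from dataclasses import dataclass
from datetime import date
from decimal import Decimal, InvalidOperation

RETURN_WINDOW_DAYS = 30                 # the 30-day window from the example
AUTO_APPROVE_LIMIT = Decimal("50.00")   # assumed auto-approval threshold


@dataclass(frozen=True)
class Order:
    """Hypothetical order record pulled from a system of record."""
    order_id: str
    purchased_on: date
    amount_paid: Decimal


def validate_refund(proposal: dict, order: Order, today: date) -> tuple[str, str]:
    """Check an AI-drafted proposal against hard rules.

    Returns (decision, reason), where decision is "execute" or "review".
    Anything the rules cannot approve is routed to a human.
    """
    if proposal.get("Action") != "Refund":
        return "review", "unrecognized action"
    try:
        amount = Decimal(str(proposal["Amount"]).lstrip("$"))
    except (KeyError, InvalidOperation):
        return "review", "amount missing or unparseable"
    if amount <= 0 or amount > order.amount_paid:
        return "review", "amount outside what the customer paid"
    if (today - order.purchased_on).days > RETURN_WINDOW_DAYS:
        return "review", "outside the return window"
    if amount > AUTO_APPROVE_LIMIT:
        return "review", "above the auto-approval threshold"
    return "execute", "within all guardrails"
```

Note that the function never trusts the model’s output: a malformed amount or an unknown action is treated as an exception for a human, not as an error to be guessed around.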

Or a financial analyst asks ChatGPT’s Codex engine to pull quarterly data from six different internal business systems, each using different formats and naming conventions, and synthesize a comparison. The AI handles the semantic translation that would have required weeks of custom parsing: it understands that “Rev,” “Revenue,” and “Total Sales” all refer to the same concept across different source systems. Deterministic code runs the actual calculations and checks the numbers against known constraints. The analyst reviews the output, applies contextual judgment about market conditions, and presents the findings. The AI read. The code computed. The human judged.

Seampoint recently built a Purchase Order processing system for a client that uses an LLM API to automatically turn emailed PDF purchase orders from customers (all in radically different formats) into a standardized CSV file that can be fed directly into an ERP system, which prioritizes and batches the manufacturing workflow on the factory floor. The client’s sales team used to spend half their time keying in the values required by the ERP form. The ERP is traditional software; the LLM turns the messy data from the PDF purchase order into regimented columns the ERP can understand. And the humans verify that the values are correct before proceeding.

As I mentioned before, this three-way partnership is not new in theory. Acceldata’s Mahesh Kumar described the same tension in mid-2025, arguing that the modern enterprise sits at the convergence of “two worlds”: deterministic systems built for consistency and control, and probabilistic reasoning systems built for ambiguity and interpretation. Chris King at New Math Data made a similar case, arguing that hybrid approaches combining deterministic code with AI-driven reasoning yield systems that are both reliable and adaptive, with the deterministic layer providing trust, safety, and correctness while the LLM (or AI) layer provides intelligence, adaptability, and reach. What changed in February 2026 is that the tooling made this hybrid architecture easy enough for non-specialists to stumble into. And stumble they did, often without understanding why some of their experiments worked beautifully while others failed.

The failures have a common root: misidentifying which type of “actor” is doing which type of work.

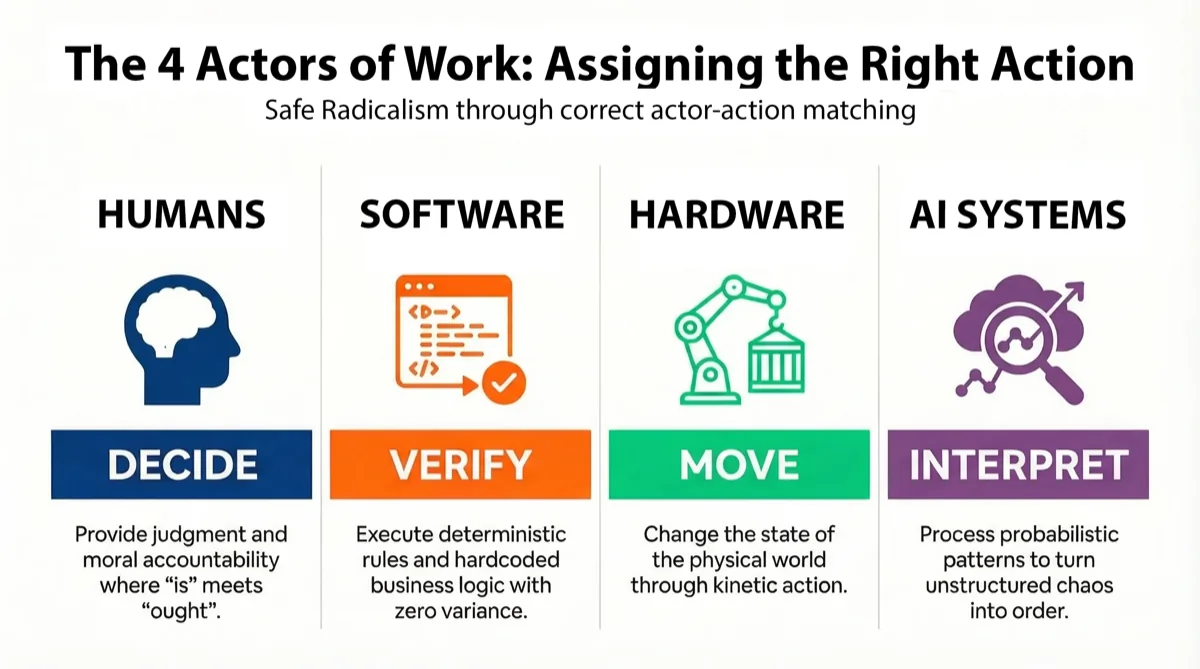

The Four Actors

In the Language of Work human-AI collaboration framework we have developed at Seampoint, work is not just a vague cloud of activity. It is a series of precise assignments: a particular actor performs a particular operation on a particular object. And choosing the right actor (or worker) matters. Four distinct types of workers perform work in every organization. Getting the hybrid architecture right requires understanding all four. Each has capabilities the others cannot replicate and limitations the others do not share.

Humans are the first of the four actors, and the ones we are most familiar with. That said, it has never been more critical to think about our unique capabilities and also our limitations. Humans are uniquely qualified to bring understanding and judgment when faced with operational and even ethical ambiguity. We are the only business actors capable of what can be called true agency: bearing moral and legal accountability and distinguishing “is” from “ought.” We humans bring authentic emotional connections to other humans that build trust and sustain working relationships. We are also uniquely capable of improvising in situations that fall outside any historical dataset: the scenarios nobody anticipated and no training data contains. But human architecture has hard constraints. Our attention degrades after roughly thirty minutes of repetitive monitoring, a phenomenon called vigilance decrement that no training program or incentive structure can override. Our working memory handles about seven items before it starts dropping things. We carry a biological baseline error rate of 2-5% on even simple tasks. We are reconstructive learners, not recorders, meaning we often improvise new ways of expressing even the thoughts and ideas we know well each time we share them, rather than repeating them verbatim. We’re inconsistent. We have good days and bad days, and we don’t have bad days because we lack discipline; we have bad days because we’re biological.

Software systems, on the other hand, are deterministic rule executors. These are databases, APIs, and ERP systems. They are the incorruptible backstop of any workflow. They do exactly what they are told, every single time, with zero performance variance. They don’t make stuff up and they don’t have tired Friday afternoons. They excel at deterministic filtering—checking whether a piece of data perfectly matches an explicit rule. Is this refund under $50? Is this user authorized? Does this input match the schema? But they crash on ambiguity. They process symbols, not meaning. Software can route a message based on a keyword without any understanding of what the message says. When a vendor changes a column header from Part_Num to Part#, Software doesn’t adapt. It throws an exception and halts.
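A small Python sketch shows both sides of that trade. The column names come from the example above; the `load_parts` function and the `SchemaError` class are hypothetical names invented for illustration. The loader accepts a file only when the header matches the rule exactly, which is what makes it trustworthy and also what makes it brittle.

```python
import csv
import io

EXPECTED_COLUMNS = ["Part_Num", "Quantity"]  # hypothetical fixed schema


class SchemaError(ValueError):
    """Raised when the input header does not match the schema exactly."""


def load_parts(csv_text: str) -> list[dict]:
    """Parse a parts file, halting on any header that is not an exact match."""
    reader = csv.DictReader(io.StringIO(csv_text))
    if reader.fieldnames != EXPECTED_COLUMNS:
        # No attempt to guess what the vendor meant: symbols, not meaning.
        raise SchemaError(f"expected {EXPECTED_COLUMNS}, got {reader.fieldnames}")
    return [
        {"Part_Num": row["Part_Num"], "Quantity": int(row["Quantity"])}
        for row in reader
    ]
```

Feeding this function a file whose header reads `Part#,Quantity` raises `SchemaError` before a single row is read. Nothing in the code can notice that `Part#` and `Part_Num` mean the same thing.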

Hardware is a class of mechanical, kinetic physical systems. Things like pumps, switches, valves, motors, cameras, sensors, and even whole mechanical systems like vehicles and manufacturing equipment. It is the only business actor besides humans that can change the state of the physical world (neither Software nor AI Agents can do this alone). Hardware machines perform repetitive kinetic tasks with precision and duration that would destroy human joints and muscles. They can operate in extreme environments including high heat, radiation, or toxic atmospheres where biological workers cannot survive. But they are physics-bound. Unlike Software and AI Agents, which can be instantiated anywhere there’s compute, Hardware must be physically present. And while they are strong, they are often unable to handle unstructured physical spaces. A welding robot that works flawlessly on a flat, pristine factory floor can be completely disabled by an unexpected obstacle like a misplaced bolt or a piece of trash.

AI Agents are the fourth actor and the newest one on the scene. These are chatbots like ChatGPT and generative tools like image and video generators, but also other types of Machine Learning systems, like the convolutional neural networks that power AI vision systems. AI Agents are probabilistic pattern processors that recognize and extend patterns. If humans process contextual meaning, AI Agents process informational entropy. They consume chaos (unstructured text, messy datasets, ambiguous inputs) and synthesize order. They detect correlations across millions of examples that would be invisible to human perception, draft plans and content based on statistical likelihood, and operate at volumes no human team can approach. Like Software, they don’t have a fatigue threshold. Like Hardware, they can operate at scale and process ten thousand documents an hour without degradation. But confabulation (making stuff up, sometimes called “hallucination”) is not just an unfortunate side-effect in these systems; it is a structural feature of how they generate their outputs. They produce likelihoods, not truth; they literally don’t know the difference. And they don’t have any sense of the emotional resonance of the information they process. They treat a cancer diagnosis with the same statistical detachment as a movie recommendation. Plus, they can never be held accountable. They can only recommend. They can draft. They can score. They cannot decide and be held accountable for their decisions. Only Humans can do that.

When people say “AI systems” they are usually referring to only one of these four actors, the AI Agent (what we also call the Prediction Machine), and ignoring the others, even though the broader systems incorporating AI increasingly have Hardware and/or Software components and Human oversight. When they say “technology,” they are sometimes collapsing three architecturally distinct actors into a single word. This imprecision is where business systems break down.

When we say that the “seam,” or the point of transfer of work from one of these actors to another, needs to be intentionally designed in order to be effective, we mean that someone has to think through exactly what type of task is required and whether that type of task plays to the strengths of the particular actor being assigned it.

Assigning the Right Action to the Right Actor

The insight that makes hybrid architectures work so well is that each actor’s weakness is another actor’s strength. And nowhere is this more visible than in how the new tools handle the boundary between probabilistic inference and deterministic execution. Consider what we call the “Thin Software” pattern.

Traditional enterprise software, what we call Thick Code, tries to tame a messy and unstructured world using rigid, deterministic programming logic. When data arrives in an unexpected format, the pipeline breaks. An engineer writes a new custom parser. Weeks pass. The next format variation arrives, and the cycle repeats. Organizations end up spending up to 80% of IT budgets on integration and maintenance, generating zero strategic value. This is the Integration Tax, and it’s been bleeding enterprises dry for decades.

Thin Software inverts the architecture. Instead of writing brittle code to understand data, you write reliable code to carry data to a Prediction Machine that can interpret it. The AI does the reading. The code does the math. When a supplier changes their invoice layout, the AI observes the new format, understands that a “Total” is still a “Total” regardless of where it sits on the page, and extracts the correct data. The pipeline heals itself. Engineering effort shifts from writing parsers to architecting verification.
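Here is a minimal sketch of what “architecting verification” can look like in Python. Everything named here is an assumption for illustration: `interpret` stands in for whatever LLM call a team actually uses (no real API is shown), and the extraction shape with `line_items` and `total` is invented. The point is the division of labor: the interpreter reads, and deterministic arithmetic decides whether the reading can be trusted.

```python
from decimal import Decimal, InvalidOperation
from typing import Callable


def verify(extraction: dict) -> list[str]:
    """Recompute the total from line items and compare it to the stated total."""
    try:
        computed = sum(
            (Decimal(str(item["qty"])) * Decimal(str(item["unit_price"]))
             for item in extraction["line_items"]),
            Decimal("0"),
        )
        stated = Decimal(str(extraction["total"]))
    except (KeyError, TypeError, InvalidOperation) as exc:
        return [f"malformed extraction: {exc!r}"]
    if computed != stated:
        return [f"line items sum to {computed}, but stated total is {stated}"]
    return []


def process_invoice(raw_text: str, interpret: Callable[[str], dict]) -> dict:
    """Carry the raw text to the interpreter, then verify what comes back."""
    extraction = interpret(raw_text)   # probabilistic step (assumed LLM call)
    problems = verify(extraction)      # deterministic step
    return {"data": extraction, "needs_review": bool(problems), "problems": problems}
```

If a layout change causes the interpreter to misread a number, the totals stop adding up and the invoice is flagged for a person instead of flowing downstream. The code does not need to know anything about the layout to catch that.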

This is not an abstract concept. JPMorgan’s COiN platform applies this pattern at scale. Software handles rule-based checks for money laundering, stress testing, and regulatory reporting. Layered on top, AI Agents analyze contracts, extract over 150 attributes from commercial lending and M&A agreements, and flag anomalies for Human review. This system eliminated 360,000 hours of manual labor annually for JPMorgan, work that previously consumed lawyers and loan officers. But COiN does not replace the deterministic compliance framework. It augments it. The AI flags and extracts. The rules verify. Humans validate. Three actors, each doing what their architecture does best.

Walmart runs the same hybrid at a different scale. Software ERP systems execute replenishment orders and update stock levels based on fixed business rules. Probabilistic AI Agents decide what to replenish, when, and where, using temporal fusion transformers that weight weather forecasts, local events, promotional schedules, and online browsing behavior to generate demand predictions no rule-based system could produce. When a heatwave is predicted, water and fans are already en route before stores feel the demand. The rules ensure execution integrity. The AI ensures decision intelligence.

In healthcare, Mayo Clinic maintains deterministic treatment protocols while deploying probabilistic AI to interpret unstructured clinical data that traditional analytics cannot touch. Eighty percent of high-value healthcare data is locked inside clinical notes, pathology reports, and discharge summaries. Traditional software is entirely blind to this information. It can process an ICD-10 code or a blood pressure reading. It cannot read a physician’s narrative assessment of how a patient responded to first-line therapy. The AI Agents read the narrative. The Software enforces the protocol. The Human physician decides.

The implications for operational cost can be staggering. Consider prior authorization, the process by which hospitals seek insurance approval before delivering care. A manual prior authorization costs $10.97 per transaction. An electronic one costs $0.05. The bottleneck has always been semantic: someone has to read the unstructured clinical notes, find the qualitative evidence the payer requires, and match it to specific medical necessity guidelines. Traditional software cannot do this because the evidence lives in free text, not structured fields. A Prediction Machine LLM can. It reads the notes, finds the relevant clinical evidence, and drafts the authorization. Deterministic code (Software) validates the draft against payer requirements. A Human clinician reviews and signs. The three-actor pattern again: interpret, verify, decide.

The critical guardrail in healthcare is knowing where the AI must stop. The AI reads clinical notes and drafts authorizations. It never interfaces directly in any way with insulin pumps or ventilators, which are Hardware, the remaining one of the four actors. These physical machines are connected to actual patients, and errors have real consequences in the real world. They require deterministic, formally verified code before being put into motion. Mixing up which of the four actors should own which task in a clinical setting isn’t an efficiency problem. It’s a safety problem with potentially life-or-death outcomes.

In financial services, the same hybrid pattern now solves a different class of problems. Reconciliation, the continuous process of verifying that internal records match external reality across counterparties and bank statements, has traditionally depended on massive spreadsheet VLOOKUPs and custom fuzzy-matching algorithms. When systems don’t match exactly, trade settlements fail. With the industry moving to next-day settlement cycles, the time available to manually fix data discrepancies has been slashed. An LLM (AI Agent) can do something no Software can: understand that “JPMorgan Chase & Co,” “JPM,” and “JP Morgan” are the same entity, completing database joins on messy data that would choke a deterministic matcher. But the LLM doesn’t execute the trade. Code does.
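A minimal Python sketch of that handoff follows. The alias map is hardcoded as a stand-in for what an LLM might propose, and the master list, record fields, and function names are all hypothetical. The design choice worth noticing is that the AI’s suggestions are filtered against a list of known entities before the deterministic join ever sees them.

```python
# Hypothetical master list of legal entities the firm already recognizes.
KNOWN_ENTITIES = {"JPMorgan Chase & Co", "Goldman Sachs Group Inc"}

# Stand-in for LLM output: raw counterparty name -> proposed canonical name.
proposed_aliases = {
    "JPM": "JPMorgan Chase & Co",
    "JP Morgan": "JPMorgan Chase & Co",
    "Goldmann": "Goldman Sachs Group",  # not in the master list, so rejected
}


def accept_aliases(proposed: dict, known: set) -> dict:
    """Keep only proposals that resolve to an entity we already know."""
    return {raw: canon for raw, canon in proposed.items() if canon in known}


def reconcile(internal: list, external: list, aliases: dict) -> tuple[list, list]:
    """Exact-match internal records to external ones on (entity, amount).

    Simplified for illustration: duplicate records are not counted separately.
    Returns (matched, unmatched); unmatched records go to a human.
    """
    def key(record: dict) -> tuple:
        name = record["counterparty"]
        return (aliases.get(name, name), record["amount_cents"])

    external_keys = {key(record) for record in external}
    matched = [r for r in internal if key(r) in external_keys]
    unmatched = [r for r in internal if key(r) not in external_keys]
    return matched, unmatched
```

The join itself stays an exact match. The AI only widens what counts as the same name, and only when its proposal lands on an entity the firm has already vetted.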

Each of these organizations discovered the same design principle, whether or not they articulated it this way: you achieve the best outcomes when you assign the right action to the right actor. Let AI Agents interpret. Let Software verify. Let Humans decide.

We call this pattern “Safe Radicalism”: radical delegation to AI Agents for speed and flexibility, deterministic filters for consistency, human decision for accountability, and physical machines to move things around in the real world. And the organizations getting results follow it rigorously, even when they don’t call it by that name.

Here’s another critical insight: the object defines the action. It’s only possible to refactor a workflow in a way that leverages the relative capabilities and limitations of each actor when the workflow objective has been crisply defined. JPMorgan’s COiN doesn’t just “read contracts.” It extracts specific attributes from commercial lending agreements and flags compliance anomalies. That objective is what tells the AI which 150 attributes to look for. It’s what tells the deterministic layer which rules to check against and what tells human reviewers which anomalies deserve their attention. The prior authorization example is even more explicit. The objective—obtain payer approval by demonstrating medical necessity—makes the entire actor assignment fall out almost mechanically. The AI must find qualitative evidence in unstructured notes. The code must validate it against the payer’s specific guidelines. The clinician must sign, or intervene when the evidence is ambiguous. Each actor’s job description flows downstream from the workflow’s clearly defined purpose.

This matters because you cannot identify an edge case for review until you have defined the standard case. If the workflow objective for prior authorization is clear, then you know what a clean approval looks like: clinical notes contain the required evidence, it matches the payer’s criteria, the authorization goes through. Now you can see where judgment is needed. What if the notes describe a patient who partially meets the criteria? What if the clinical language is ambiguous about whether first-line therapy has truly “failed”? Those are the moments that require a human physician. But they only become visible once the standard path has been specified. Organizations that skip this step, that say “let’s use AI for customer service” without defining what a successful interaction requires, end up unable to scope any actor’s role. They can’t tell the AI what to optimize for, can’t tell the code what to verify, and can’t tell the human what exceptions to watch for. The refactoring collapses before it begins.

McKinsey’s 2025 survey of AI performance reinforces this architecture. The practice that most distinguished high-performing organizations, the 6% generating meaningful bottom-line impact, was having defined processes to determine how and when model outputs need human validation. Sixty-five percent of high performers reported this practice in place. Among all other respondents, the figure was 23%. High performers also redesigned workflows rather than bolting AI onto existing processes, with 55% reporting fundamental workflow redesign compared to 20% of others.

Mapping the Territory

With this framework in mind, let’s think about the implications for the new “vibe-work” described in the opening paragraphs of this article. The key difference is that now the AI tools themselves (Cowork, Codex, and the others) are not just doing the probabilistic inference; they are also writing the code for the deterministic tasks. So the entity building the Software is changing. It used to be Humans and now, more and more, it’s the AI Agents themselves. But the actual assignment of tasks is still being spread across the four actors, as before.

Take the Seampoint Purchase Order workflow I mentioned earlier. Cursor wrote the code (a collection of Python, JSON, and Regex scripts) using an AI agent (Claude’s Opus 4.6). The system relies upon an API call to ChatGPT’s Codex to extract and parse the data from the messy PDF into the clean columns required by the ERP system: PO_Number* | Company_Name* | Item_Number | Item_Description | Part_Number* | Unit_Metric | Quantity | Adjusted_Qty_LBS | Rounded_Qty* | Estimated_Price | Estimated_Tax | Estimated_Total_Order | Required_By_Date* | Ship_To_Address* | Terms | PO_Issued_By_Name | Notes | Validation_Notes (the values with the asterisks are required). The LLM also passes along a confidence rating.

Then multiple passes of deterministic Software code validate the values, flag any missing values, round the quantity ordered up to the nearest increment of 50, make conversions from kilograms to pounds or from foreign currencies to US Dollars (as necessary), and correlate part numbers and part descriptions with existing product catalogs. The Human salesperson is still required to review the values before entering them into the ERP system, since heavy Hardware will be put in motion at significant cost to process the orders based on the order entry.
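To give a feel for what one of those deterministic passes might look like, here is a simplified Python sketch. It is not the client’s code. The column names come from the list above, but the function names, the set of accepted units, and the choice to convert to pounds before rounding are assumptions made for illustration. Currency conversion and catalog matching are left out.

```python
import math
from decimal import Decimal, InvalidOperation

LBS_PER_KG = Decimal("2.20462")  # standard conversion factor, rounded
INCREMENT = 50                   # order quantities round up to multiples of 50
REQUIRED_FROM_LLM = [
    "PO_Number", "Company_Name", "Part_Number",
    "Required_By_Date", "Ship_To_Address",
]


def round_up(quantity: Decimal, increment: int = INCREMENT) -> int:
    """Round a quantity up to the next multiple of the increment."""
    return math.ceil(quantity / increment) * increment


def postprocess(row: dict) -> dict:
    """Validate one extracted row and fill in the computed columns."""
    out = dict(row)
    notes = [
        f"missing required field: {field}"
        for field in REQUIRED_FROM_LLM
        if not str(row.get(field) or "").strip()
    ]
    try:
        qty = Decimal(str(row["Quantity"]))
        unit = str(row.get("Unit_Metric", "")).strip().upper()
        if qty <= 0:
            raise ValueError("quantity must be positive")
        if unit == "KG":
            qty_lbs = qty * LBS_PER_KG
        elif unit in ("LB", "LBS"):
            qty_lbs = qty
        else:
            raise ValueError(f"unsupported unit: {unit!r}")
        out["Adjusted_Qty_LBS"] = str(qty_lbs.quantize(Decimal("0.01")))
        out["Rounded_Qty"] = round_up(qty_lbs)
    except (KeyError, ValueError, InvalidOperation) as exc:
        notes.append(f"quantity not usable: {exc}")
    out["Validation_Notes"] = "; ".join(notes)
    return out
```

An order for 100 KG becomes 220.46 pounds and rounds up to 250. Anything the code cannot compute with certainty lands in `Validation_Notes` for the salesperson to resolve, rather than being guessed at.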

Despite having used AI to generate the workflow, it is still critical to know which actor is responsible for which task, so that the work being done can be validated, scaled, and troubleshot if and when things go wrong.

The Character Revolution

The question of which actor does what is not only a systems design question. It is increasingly a question about people.

When hybrid systems work well, they change what humans spend their time doing. The coordination overhead that consumes 57-60% of a knowledge worker’s day (the email triage, the status updates, the meeting scheduling, the document search) begins migrating to machines. The routine cognitive tasks that filled experienced workers’ days begin to be delegated to AI co-pilots. What remains concentrates around the work that plays to human strengths: judgment under ambiguity, relationship building, accountability for outcomes.

Joshua Gans, analyzing the 2025 Brynjolfsson, Chandar, and Chen study of AI-exposed occupations, observed something that challenges the simple “AI takes jobs” narrative. While employment for workers aged 22-25 declined 6% in AI-exposed jobs since late 2022, employment for older workers in those same occupations grew 6-9%. If AI were substituting for human labor, companies would not be simultaneously hiring experienced workers in the occupations where they’re cutting entry-level headcount. The data is more consistent with AI complementing human skills than replacing them, particularly the tacit knowledge and contextual judgment that comes from years of experience, information that is not freely available to any AI system.

The pattern makes sense through the Language of Work lens. AI Agents have access to codified knowledge, the kind you get from textbooks and training programs, which is also what entry-level workers have been trained on. What the LLMs lack is the accumulated situational judgment that experienced workers carry. When AI absorbs the routine cognitive tasks that once filled a junior analyst’s day, the premium on what a senior analyst knows from having lived through market cycles, having built client relationships, having made consequential mistakes and learned from them, actually goes up. AI doesn’t replace the experienced human. It makes the experienced human’s irreplaceable qualities more valuable.

This has a painful second-order consequence. Entry-level positions are the mechanism through which workers acquire tacit knowledge. If AI reduces the number of those positions, the pipeline that produces experienced workers narrows at exactly the moment demand for experienced workers increases. Gans flagged this directly: careless businesses are going to run out of workers with experience. You might think they should keep hiring younger workers to build that pipeline, but two problems intervene. When AI is present, the experience may not be the same. And if one company invests in training a young worker, that worker’s skills benefit whichever employer hires them next. The costs are private; the gains are socialized. When that happens, as Gans noted, investment tends not to occur.

But this points to a deeper shift in what it means to be the human in the system. Adam Grant put it precisely in Hidden Potential: “If our cognitive skills are what separate us from animals, our character skills are what elevate us above machines. As more and more cognitive skills get automated, we’re in the midst of a character revolution.” The premium is shifting from what we can compute to who we are when computing isn’t enough. The capacity for genuine empathy when a patient is frightened. The integrity to deliver bad news to a client when it would be easier to soften it. The courage to override an AI recommendation when your gut and your experience tell you the model is wrong, even if you can’t articulate exactly why. These are character skills. They don’t show up on a benchmark. They show up in the quality of the decisions that only humans can make.

A truth the efficiency conversation obscures: many modern jobs are collections of tasks that ended up with humans not because humans find them meaningful or are particularly good at them, but because those tasks couldn’t be automated by the machines of the industrial and digital eras. They ended up with humans by default. Data entry clerks. Dashboard monitors. Script-reading call center agents. These jobs compensate for human weaknesses rather than leveraging human strengths, and we built elaborate structures, rotation schedules, break policies, error-checking protocols, to manage the mismatch.

AI creates an opportunity to change this. As high-delegation tasks migrate to machines, what remains with humans can be deliberately designed around judgment, empathy, creativity, and meaning-making. Not the leftover work that AI can’t do, but work that humans are uniquely suited to do well, and that they find worth doing.

This won’t happen automatically. Left to inertia, organizations will assign humans whatever tasks AI can’t handle, recreating the same accidental job design that produced dehumanizing work in the first place. But with intention, leaders can use this moment to redesign work around human flourishing. Roles built on relationships rather than repetition. Jobs defined by accountability rather than endurance. Work that plays to our irreducible strengths rather than compensating for our limitations.

The other three actors won’t decide whether that happens. We will.

Key Takeaway:

The hybrid AI architecture framework is deceptively simple. AI Agents interpret ambiguity. Software enforces rules. Hardware acts in the physical world. Humans bring judgment, accountability, and the wisdom to know which actor should handle which task. Every hybrid AI system that works follows this pattern. Every one that fails has confused the assignments.
