Stop Panning, Start Mining: AI Discipline

Alan Berrey · February 24, 2026

The California Gold Rush officially began on January 24, 1848, when James Marshall discovered gold at Sutter’s Mill. By 1856, the initial rush had largely subsided. In those eight years, a distinctly American phenomenon was born, one that has since become the ultimate case study in supply chain value extraction.

We’ve all heard the cliché: “In a gold rush, sell boots and shovels.” In the 1850s, supplying the dream was more lucrative than chasing it. While prospectors flocked to the West to pan for treasure, savvy entrepreneurs recognized that a flood of manpower required food, clothing, shelter, and equipment. Panning for gold was hit-or-miss; the need for boots was a certainty.

The economics were staggering. In 1852 San Francisco, a single egg cost the equivalent of $38 today, and a pair of boots could cost $3,800. Samuel Brannan, widely regarded as California’s first millionaire, made his fortune by cornering the market on mining supplies. Levi Strauss and James Folger built empires on denim and coffee. Meanwhile, the average miner’s daily take plummeted from $20 in 1848 to just $6 by 1852.

By 1856, the “surface gold,” consisting of the flakes and nuggets in the riverbeds, was gone. The remaining wealth sat deep underground. The lone prospector was replaced by “hyperscalers,” industrial behemoths with the capital to secure land rights and the machinery to move mountains. The Empire Mine, for example, was one of the richest mines in California. Its miners extracted $30 billion in gold, but they had to dig through two tons of rock for every ounce of gold collected.

The New Gold Rush: November 30, 2022

The launch of ChatGPT marked the start of the AI Gold Rush. The service amassed 100 million users in just 60 days, making it one of the fastest-growing consumer applications in history. Just as in the 19th century, however, value in the AI ecosystem is concentrating among the enablers, the suppliers of boots and shovels. Companies like Nvidia, TSMC, and the cloud hyperscalers have captured disproportionate economic value, while most others have looked on.

For the enterprise “prospector,” the Gold Rush analogy is breaking down. In the 1850s, the surface gold was real. In 2025 and 2026, the “easy gold” of corporate AI has proven surprisingly elusive. Most organizations are finding that their “pans” are coming up empty. According to researchers at MIT in 2025, “95% of enterprise GenAI pilots show zero measurable financial return.” (endnote #1) A PwC report in 2026 finds that “56% of … CEOs say AI has not yet increased revenue or reduced costs.” (endnote #2) Far too often, a board approves the AI budget, the team launches a pilot, and internal dashboards show high engagement, yet the CFO sees zero or negative ROI.

Why Prospectors Are Failing: The Four Hidden Frictions

Why is the “easy gold” so hard to find? Our analysis identifies four structural reasons why AI initiatives come up empty.

Consequence of Error

There is a huge gap between what the technology is capable of performing and what the technology has the authority to perform. Just because an AI model can perform a task does not mean it should. Our research reveals a stark divide in the modern enterprise. While roughly 93% of tasks could be technically performed or accelerated by AI, only 27% are actually safe to deploy under current governance structures. This “Governance Discount” exists because technical exposure does not equal operational readiness.

Far too often, organizations ignore or underestimate the likelihood and consequence of error. They have been conditioned to think that software is deterministic. They believe that once a system is debugged and deployed, it will always produce reliable and correct answers. AI, however, is probabilistic, so errors are a mathematical certainty. The trick for companies, therefore, is to deploy AI where the cost of governing the process is low.

(Relevant article: The Hard Lessons of AI in the Call Center.)

Coordination Tax

The burden of directing and synchronizing action in the world of AI constitutes a tax on the technology. Based on our internal analysis, 31.5% of all work is coordination. This is the portion of tasks that aren’t the “core work” itself but the effort required to align, update, and verify that the work is being performed and performed correctly. When an organization implements AI, it is common for core work requirements to decrease and coordination requirements to increase.

When an organization introduces Agentic AI, this phenomenon can accelerate. The agent handles the low-level technical coordination like calling APIs and moving data. However, for the human, the coordination requirements go up significantly. The promise of agents is “I will go do these 50 things for you.” The reality for the human manager is different: “I now have 50 outputs I need to verify.”

Humans have limitations when coordinating the work of agents. We are biologically incapable of maintaining high-level attention for more than roughly 20 minutes of passive monitoring. As an agent scales the volume of work, the human’s “Coordination Tax” actually spikes. This happens because the cost of verifying a high-speed “agentic swarm” is often greater than the cost of just doing the work manually. If the agent makes a mistake in step 3 of a 50-step process, the coordination effort required to unpick the “hallucination debt” is massive.
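A rough sketch of why long agent chains are expensive to verify. It assumes, purely for illustration, that every step is independently correct with the same probability. The function name and the 99% per-step figure are assumptions, not numbers from the analysis above.

```python
def chain_success_probability(per_step_accuracy: float, steps: int) -> float:
    """Probability that every step in a chain is correct.

    Assumes each step succeeds independently with the same probability,
    which is a simplification; real agent errors are often correlated.
    """
    if not 0.0 <= per_step_accuracy <= 1.0:
        raise ValueError("per_step_accuracy must be between 0 and 1")
    if steps < 0:
        raise ValueError("steps must be non-negative")
    return per_step_accuracy ** steps


# Hypothetical figures: a 50-step process at 99% accuracy per step.
p_clean = chain_success_probability(0.99, 50)
print(f"Chance of an error-free run: {p_clean:.1%}")
```

Under these assumptions, only about six runs in ten finish without an error somewhere in the chain, which is why the human reviewer cannot simply spot-check the final output.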

Accountability and Presence

Doing work is one thing, but taking full responsibility for the work is another. This, it turns out, makes a huge difference. Just because an AI can write a contract does not mean it is authorized to sign it on behalf of the organization. If a machine is not fully authorized to execute a task from start to finish, including the legal and accountability handoffs, it remains a “sidecar” tool. By definition, a tool without authority cannot drive a business process. It can only suggest one.

Take as a case in point the February 2026 launch of Rentahuman.ai. The premise was deliberately provocative. AI agents (which are by definition confined to data centers) would pay humans for physical tasks that the AI could not accomplish on its own. While the service is interesting, it reveals a deep problem. If a hired human is injured or arrested in the act of performing an AI-assigned task, who is responsible? The platform treats the assignment as an open market transaction rather than an employment relationship. This steps around every protection that employment law provides.

(Relevant article: Rentahuman.ai Is a Stunt, but the Architecture Is Real.)

Starting an AI project with the assumption that the solution will have autonomy or authority that it does not possess is a recipe for conflict and disillusionment.

Verification Cost

AI will make mistakes. That is a given. So, whenever AI is deployed, we must ask about the cost of verifying what the AI says. For obvious reasons, the verification requirements are correlated with the implications of an error. If the cost of an error is low, then verification requirements are low. If the cost of an error is high, however, then verification is essential.

Companies that focus on how fast AI can generate work, but ignore how long it takes to verify it, will rarely succeed with production-level services. If a report takes 10 minutes to write by hand but the AI-generated version takes 15 minutes to fact-check, the “gold” has evaporated.
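The report example can be put into a small calculation. This is a minimal sketch that reads the 10 minutes as manual writing time and the 15 minutes as fact-checking time; the function name and the one-minute generation time are assumptions added for illustration.

```python
def net_minutes_saved(manual_minutes: float,
                      ai_generation_minutes: float,
                      verification_minutes: float) -> float:
    """Minutes saved per task by using AI instead of doing the work by hand.

    A negative result means the AI path costs more human time than it saves.
    """
    ai_path_minutes = ai_generation_minutes + verification_minutes
    return manual_minutes - ai_path_minutes


# 10 minutes by hand, 15 minutes to fact-check (figures from the example).
# The 1-minute generation time is an assumption.
saved = net_minutes_saved(manual_minutes=10, ai_generation_minutes=1,
                          verification_minutes=15)
print(f"Net minutes saved per report: {saved}")
```

A negative number here is the signal to stop: the task only becomes worth automating once verification time drops below the manual time.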

Instead of streamlining its hiring, Amazon’s experimental automated screening tool became a cautionary tale in “unverifiable” logic. The algorithm, trained on a decade of resumes submitted primarily by men, taught itself to penalize any CV containing the word “women’s” (such as “women’s chess club captain”) and even downgraded graduates from two specific all-women’s colleges.

Because the system used thousands of nuanced data points to build its “ideal” candidate profile, the cost of verifying its neutrality became prohibitive. Human recruiters couldn’t simply “check” the machine’s work without re-evaluating every single applicant from scratch, which nullified the efficiency gains the AI promised. Ultimately, the high cost of manual verification and the impossibility of purging the machine’s deep-seated gender bias led Amazon to scrap the project, a decision that became public in 2018.

Beware Fool’s Gold

Iron pyrite (FeS2) is frequently called “Fool’s Gold.” It looks like gold, but at the chemical level it is nothing of the sort. Real gold (Au) is malleable; you can hammer it into sheets or pull it into wires without it breaking. Iron pyrite, on the other hand, is brittle. If you strike it, it shatters into jagged, useless shards. AI pilots are similar to Fool’s Gold. They look like gold until they hit the real world. Because they lack the authenticity and authority of real expertise, they shatter when faced with complex human nuances. Successful AI projects are not brittle; they are truly malleable. They can bend, sometimes dramatically, without breaking.

By definition, iron pyrite is one part iron (Fe) and two parts sulfur (S2). As a result, when you strike it with a hammer, it stinks like rotten eggs. The smell is pungent and unmistakable. Similarly, if an AI pilot stinks when struck by regulation, liability, or other constraints, then it is not real gold.

(Relevant article: The AI Liability Squeeze.)

Fool’s Gold is significantly harder and more abrasive than the real thing. Miners in the 19th century who mistook pyrite for gold often ruined their tools trying to process it. In a similar way, “AI Theater” is abrasive to your organization. It wears down your most talented people by forcing them to compensate for failing AI. Real gold moves smoothly through the organization without wearing out staff or stinking up boardrooms.

Finally, a prospector in the 1850s could perform a simple assay called the “streak test” to separate FeS2 from Au. Just scrape the mineral across an unglazed porcelain plate. Real gold leaves a yellow streak, while pyrite leaves a greenish-black streak. The assay for AI is similar. Rub your AI up against the plate of profit and loss. If AI does not move the needle of P&L, then it’s probably just theater.

In 1850, the difference between a millionaire and a bankrupt prospector was the ability to distinguish Au from FeS2. In 2026, the difference between an AI-forward enterprise and a “theater” organization is the ability to distinguish value capture from mere activity. Don’t be fooled by the glitter of a high-engagement pilot; if it doesn’t pass the streak test of the P&L, you’re just hauling rocks.

How to Actually Mine for AI Gold

Water heated to 300°C–500°C deep underground can dissolve gold and carry it through fissures in rock. As the fluid cools, the gold precipitates and settles in the fissures, while the water escapes. The process leaves gold deposited in veins. This naturally occurring hydrothermal process concentrates gold, as well as many other minerals, in seams. This geological reality was foundational to the Gold Rush of the 19th century, and it is analogous to the AI Gold Rush today.

In 1856, the lone prospector failed because they lacked the industrial equipment to go deep. Today, enterprises fail because they are “panning” for efficiency in workflows that are already dry. To move into the top 5% of winners, business leaders should follow four guidelines when searching for AI Gold.

The Strategy of the Seam (Where to Mine)

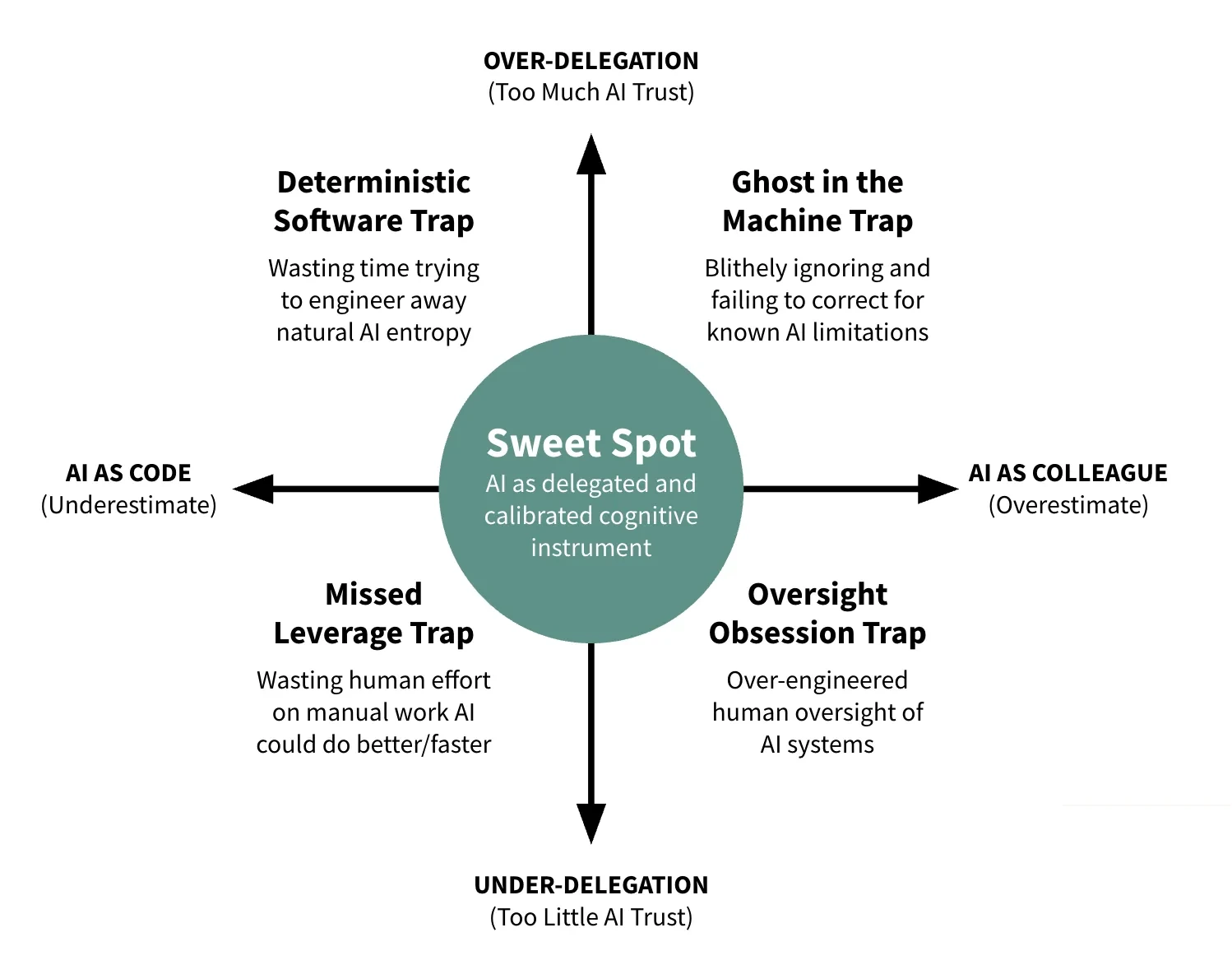

Obviously digging will not create value if there is no gold to be found. Successful projects start by identifying the vein, the seam where value concentrates. With AI the seam is the boundary where a machine’s probabilistic output meets a human’s deterministic authority.

AI models (LLMs) are built on probability. When you ask an AI to perform a task it is not “calculating” a correct answer in the way a spreadsheet does. Instead, it is predicting the most likely sequence of information based on patterns. AI output is always a high-probability guess. Even if it is 99% certain, that 1% “hallucination” is baked into the math.

The probabilistic system has no concept of “truth/error” or “true/false,” only “most likely/least likely.” A corporation, in contrast, is a deterministic system. It operates on binary “yes/no” or “legal/illegal” foundations. When a company signs a contract, pays an invoice, or diagnoses a patient, there is no room for a “high-probability guess.” You cannot “probably” pay an employee or “mostly” follow a safety regulation. These actions require authority, which is the power to make a final, binding decision.

Humans, not LLMs, are held liable in a court of law or lose their jobs for a bad decision. A machine cannot own the consequences of its actions. So, if you treat a probabilistic guess as a deterministic fact without the “seam” of human authority, you create the Authority Trap.

Business leaders should architect their workflows so the machine stays in the probabilistic zone (generating, sorting, suggesting) and only crosses the seam into the deterministic zone (deciding, signing, committing) when a human validates the output.

By finding the seam, you allow the AI to process two tons of rock (probability) so the human only has to verify the one ounce of gold (the decision).
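One way to picture the seam in a workflow is as a gate in code. The sketch below is a minimal illustration, not a prescribed design: the action names, the `ActionRequest` type, and the `may_execute` function are all hypothetical, chosen to mirror the two zones described above.

```python
from dataclasses import dataclass
from typing import Optional

# Hypothetical action names, grouped into the two zones described in the text.
PROBABILISTIC_ACTIONS = {"generate", "sort", "suggest"}
DETERMINISTIC_ACTIONS = {"decide", "sign", "commit"}


@dataclass
class ActionRequest:
    action: str
    payload: str
    approved_by: Optional[str] = None  # name of the validating human, if any


def may_execute(request: ActionRequest) -> bool:
    """Return True if the request is allowed to proceed.

    Probabilistic actions run freely; deterministic actions cross the seam
    only when a human has validated the output.
    """
    if request.action in PROBABILISTIC_ACTIONS:
        return True
    if request.action in DETERMINISTIC_ACTIONS:
        return bool(request.approved_by)
    # Unknown actions are blocked by default.
    return False


draft = ActionRequest("suggest", "Proposed contract terms")
unsigned = ActionRequest("sign", "Contract v3")
signed = ActionRequest("sign", "Contract v3", approved_by="general.counsel")

print(may_execute(draft), may_execute(unsigned), may_execute(signed))
```

Blocking unknown actions by default is a deliberate choice in this sketch: anything not explicitly placed in a zone is treated as needing review.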

Governance as Infrastructure

California became the 31st state on September 9, 1850. So, in the early stage of the gold rush (1848–50) there was effectively no formal mining law or state apparatus in the gold fields. Miners who traveled to the territory found themselves in a high-value, high-uncertainty environment with valuable “claims,” unclear ownership boundaries, and no trusted framework for enforcement.

Many organizations today find themselves in a similar “pre-law” phase around AI data and model use. AI systems are powerful and valuable, but existing IP and trade secret frameworks feel poorly mapped to how models train on, store, and regenerate information. In that vacuum, a significant share of companies have responded by banning or sharply restricting employee use of generative AI. They fear that confidential data will leak, that model providers will appropriate trade secrets, or that AI outputs will create ownership and infringement problems. A 2024 survey by Cisco revealed that 27% of companies had banned the use of generative AI and 69% of companies believed it could hurt their rights to intellectual property. (endnote #3)

Unlike what is depicted in American folklore and the entertainment industry, most miners in the 19th century did not want lawlessness or vigilantism. To the contrary, faced with legal vacuums, miners readily organized into “mining districts,” drafted local codes, and agreed on rules defining how to establish and maintain a claim.

Those district codes typically did three critical things: first, they tied title to discovery; second, they limited the size and duration of claims; and third, they required ongoing “development” work for a claim to remain valid. Over time, a patchwork of local rules influenced national law, culminating in the General Mining Act of 1872.

Current business leaders can take a page from the gold prospectors in the 1800s. When leading people through uncharted territories of AI, leaders should not assume their staff will automatically pursue a path of lawlessness. Most people welcome clear boundaries and enforcement policies. People inside organizations are willing to pan for gold but they don’t want to be punished by other prospectors or overseers for doing so.

Corporate governance for AI is typically steeped in defensiveness. One AI policy sent by a CEO in February 2026 said, “AI will never be used to record, transcribe or capture content within a group meeting.” Of course leaders must urge caution, but they should not stifle AI experimentation either.

The best AI governance standards set guidelines for use, but they also encourage experimentation. They reward discovery and encourage collaboration.

Contrary to what many leaders believe, AI governance should be far more than a checklist of defensive rules. “Don’t do this with AI.” “Don’t do that with AI.” Yes, AI governance includes guidelines and structural supports that prevent the mine from collapsing, but well-structured governance is much more. For example, AI governance should include authority mapping, which is an explicit definition of what AI is not allowed to decide. If an AI cannot sign a contract, the workflow must be built with a human-in-command checkpoint before the signature. A task that is 90% automated but 100% ungoverned is a liability, not an asset.

AI governance also includes traceability of origin: in 2026, every piece of “AI gold” (data/output) must have a digital receipt. You must know which model produced it, which data trained it, and which human verified it.
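A “digital receipt” could be as simple as a small record attached to each output. The sketch below is one possible shape under stated assumptions: the `OutputReceipt` fields, the `issue_receipt` helper, and the placeholder model and data identifiers are hypothetical, not an established standard.

```python
from dataclasses import dataclass, asdict
from datetime import datetime, timezone
from typing import Optional
import hashlib
import json


@dataclass(frozen=True)
class OutputReceipt:
    """Hypothetical provenance record for one AI output."""
    output_sha256: str          # fingerprint of the exact output text
    model_id: str               # which model produced it
    training_data_ref: str      # pointer to the data lineage, if known
    verified_by: Optional[str]  # which human verified it (None if unverified)
    created_at: str             # UTC timestamp, ISO 8601


def issue_receipt(output_text: str, model_id: str, training_data_ref: str,
                  verified_by: Optional[str] = None) -> OutputReceipt:
    """Build a receipt whose hash ties it to one specific output."""
    digest = hashlib.sha256(output_text.encode("utf-8")).hexdigest()
    return OutputReceipt(
        output_sha256=digest,
        model_id=model_id,
        training_data_ref=training_data_ref,
        verified_by=verified_by,
        created_at=datetime.now(timezone.utc).isoformat(),
    )


receipt = issue_receipt(
    "Q3 churn summary",
    model_id="example-model-v1",            # placeholder, not a real model
    training_data_ref="vendor-model-card",  # placeholder reference
    verified_by="analyst@example.com",
)
print(json.dumps(asdict(receipt), indent=2))
```

Hashing the output means the receipt no longer matches if the text is edited after verification, which is what lets an auditor detect later changes.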

The Unit Cost Mandate (The P&L Test)

In the Gold Rush, enthusiasm did not equal profitability. A miner could work 14 hours a day, pull gravel from the river, and still go bankrupt. The only test that mattered was yield per ton. If two tons of rock produced less than an ounce of gold, the operation would fail.

AI projects are no different. If an AI initiative does not reduce unit cost or increase revenue per employee, it is not transformation. It is theater. Leaders must insist on measuring the fully loaded cost of AI, including compute, licenses, and most critically, the coordination tax. If humans spend more time supervising outputs than they would performing the work directly, the economics collapse.
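The fully loaded cost can be sketched as a simple per-unit calculation. Every figure below is invented for illustration, and the function name and parameters are assumptions; supervision hours stand in for the coordination tax.

```python
def ai_unit_cost(compute_cost: float, license_cost: float,
                 supervision_hours: float, hourly_rate: float,
                 units_completed: int) -> float:
    """Fully loaded cost per unit of work for an AI-assisted process.

    Supervision time stands in for the coordination tax: the human hours
    spent aligning, checking, and correcting AI output.
    """
    if units_completed <= 0:
        raise ValueError("units_completed must be positive")
    coordination_cost = supervision_hours * hourly_rate
    total = compute_cost + license_cost + coordination_cost
    return total / units_completed


# All figures below are invented for illustration.
manual_unit_cost = 6.00  # cost per transaction before AI
assisted = ai_unit_cost(compute_cost=400.0, license_cost=600.0,
                        supervision_hours=80.0, hourly_rate=50.0,
                        units_completed=1000)
print(f"AI-assisted unit cost: ${assisted:.2f} vs manual ${manual_unit_cost:.2f}")
```

In this invented case, supervision accounts for most of the total cost. Doubling the supervision hours would push the AI-assisted unit cost above the manual one, which is the collapse described above.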

However, finding the gold is only half the battle; the true “industrialist” knows what to do with it. This requires a reinvestment pact. In 1850, the miners who merely spent their gold on higher-priced eggs remained prospectors. Those who reinvested their yields into better machinery, land rights, and infrastructure became the barons of the new economy. Similarly, the efficiency gains from AI must not simply sit as “dormant capital” on the balance sheet. To avoid the risk of de-skilling (where a team’s expertise atrophies because the machine is doing the heavy lifting) leaders must proactively reinvest “saved time” into higher-order human capabilities: innovation, complex problem-solving, and deep client relationships.

(Relevant article: AI Is Redefining Expertise.)

The P&L test is the modern streak test. Admittedly it is far more difficult to test an AI project’s profitability than it is to rub a stone across a plate, but testing AI against reality is no less important. Does cost per transaction decline? Does throughput increase without a proportional increase in oversight? And most importantly, is that captured capacity being redirected toward the next “vein” of value? If you find efficiency but fail to reinvest it, your organization isn’t growing; it’s just getting smaller, faster.

In 1852, miners who ignored yield per ton ran out of cash. In 2026, enterprises that ignore the unit economics—and the necessity of reinvesting those gains—will run out of relevance.

Industrialized Verification (The Refinery)

Gold pulled from the earth is not ready for market. It must be crushed, washed, smelted, and refined. In the 19th century, the refinery, not the shovel, determined the ultimate profitability of the claim.

Enterprises fail with AI when they generate faster than they can refine. In fact, verification cost is the ultimate binding constraint of the AI era. If the time and capital required to fact-check, audit, and secure an AI’s output exceed the cost of simply doing the work manually, your “gold mine” is a money pit. To strike real wealth, you must make verification cheaper than the work itself.

Verification must be industrialized. This begins with verification cascades. Instead of a human reading every line, smaller, specialized auditor models review the output of primary generator models. These auditors check structure, citations, consistency, and rule compliance before a human ever touches the file. Properly designed, 80–90% of routine verification can be automated, collapsing the cost of quality control.

Next comes human exception management. This breaks the cycle of the phantom authority, where bored humans rubber-stamp every output until a catastrophe occurs. Instead, the cascade flags only high-risk exceptions for human inspection. Human attention shifts from passive, low-value monitoring to active, high-stakes judgment.

Finally, truth loop integration closes the system. Every verified error is fed back into the prompt library, retrieval layer, or policy constraints. The mine improves each time it encounters rock. In this model, error is not hidden or feared; it is harvested as a training signal to further lower the cost of the next ton of ore.
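The three stages above (cascade, exception management, truth loop) can be sketched together. This is a toy illustration under loud assumptions: the two auditors are simple rule functions standing in for the “smaller, specialized auditor models,” and every name here is hypothetical.

```python
from dataclasses import dataclass, field
from typing import Callable, List, Tuple

# An auditor takes the output text and returns (passed, reason_if_failed).
Auditor = Callable[[str], Tuple[bool, str]]


def has_citation(text: str) -> Tuple[bool, str]:
    # Toy rule: a citation looks like "[1]". A real auditor might be a small model.
    return ("[" in text and "]" in text, "missing citation")


def within_length(text: str) -> Tuple[bool, str]:
    return (len(text) <= 500, "exceeds length limit")


@dataclass
class CascadeResult:
    auto_approved: List[str] = field(default_factory=list)
    escalated: List[Tuple[str, List[str]]] = field(default_factory=list)
    error_log: List[str] = field(default_factory=list)  # input to the truth loop


def run_cascade(outputs: List[str], auditors: List[Auditor]) -> CascadeResult:
    result = CascadeResult()
    for text in outputs:
        # Collect the reason from every auditor that fails this output.
        failures = []
        for audit in auditors:
            passed, reason = audit(text)
            if not passed:
                failures.append(reason)
        if failures:
            # Exception management: only flagged items reach a human.
            result.escalated.append((text, failures))
            # For brevity every auditor failure is logged; a fuller design
            # would log only the errors a human reviewer confirms.
            result.error_log.extend(failures)
        else:
            result.auto_approved.append(text)
    return result


outputs = ["Revenue rose 4% [1].", "Revenue rose sharply."]
result = run_cascade(outputs, [has_citation, within_length])
print(len(result.auto_approved), "auto-approved;", len(result.escalated), "escalated")
```

The error log is the hook for the truth loop: recurring failure reasons point to the prompts, retrieval sources, or policy rules that need adjusting.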

In the 1850s, raw ore was worthless without refining capacity. Today, raw AI output is equally crude. Competitive advantage belongs to organizations that build refineries, not just generators.

Conclusion

The California Gold Rush separated dreamers from industrialists. Surface gold rewarded speed. Deep gold rewarded systems.

The same pattern is unfolding in AI.

Most organizations are still panning for flakes. They launch pilots, celebrate engagement metrics, and mistake activity for value. But AI success requires more than access to powerful models. It requires discipline.

Find the seam where probabilistic output meets deterministic authority. Build governance as infrastructure so the mine does not collapse. Enforce the unit cost mandate so every initiative passes the P&L streak test. And above all, construct industrialized verification so one human can confidently manage the work of one hundred agents.

At Seampoint, we do not sell shovels. We design industrial mining rigs.

The difference between AI theater and AI gold is not intelligence. It is architecture.

In 1850, the winners were those who could tell Au from FeS2. In 2026, the winners will be those who can tell signal from glitter, yield from noise, and value capture from spectacle.

The gold is there. But only for those willing to mine it properly.

Endnotes

1. MIT: “95% of enterprise GenAI pilots show zero measurable financial return.” https://www.legal.io/articles/5719519/MIT-Report-Finds-95-of-AI-Pilots-Fail-to-Deliver-ROI-Exposing-GenAI-Divide

2. PwC: “56% of CEOs say AI has not yet increased revenue or reduced costs.” https://futurism.com/artificial-intelligence/ceos-ai-returns

3. Cisco survey on banning generative AI and privacy risks: https://www.infosecurity-magazine.com/news/banning-generative-ai-privacy-risks/
