The Hard Lessons of AI in the Call Center

Jeff Whatcott · February 20, 2026

Every large company is circling the same question right now: How quickly can we replace our call center agents with AI? They see the demos, read the headlines about “digital workers,” and start doing the math on headcount reduction. Chatbots are getting better. Labor costs keep climbing. Plug in the AI, unplug the humans, watch the margins expand.

But people who’ve actually built these systems at scale describe something different. In a recent conversation on the Kleiner Perkins GRIT podcast, Ping Wu — who spent fourteen years at Google, co-founded Google’s Contact Center AI platform, and now runs Cresta, whose AI platform is deployed across all 9,000 agents at United Airlines — put it bluntly: “I do not see automation and the call center as a separate thing, but rather one system.”

That distinction matters more than any model capability. Organizations that treat AI as a replacement for humans will spend millions automating their own dysfunction. Organizations that treat AI and humans as one integrated system — where each handles the work it’s structurally best at — will capture enormous value. And the design logic that makes this work extends far beyond call centers to every process where AI and human effort intersect.

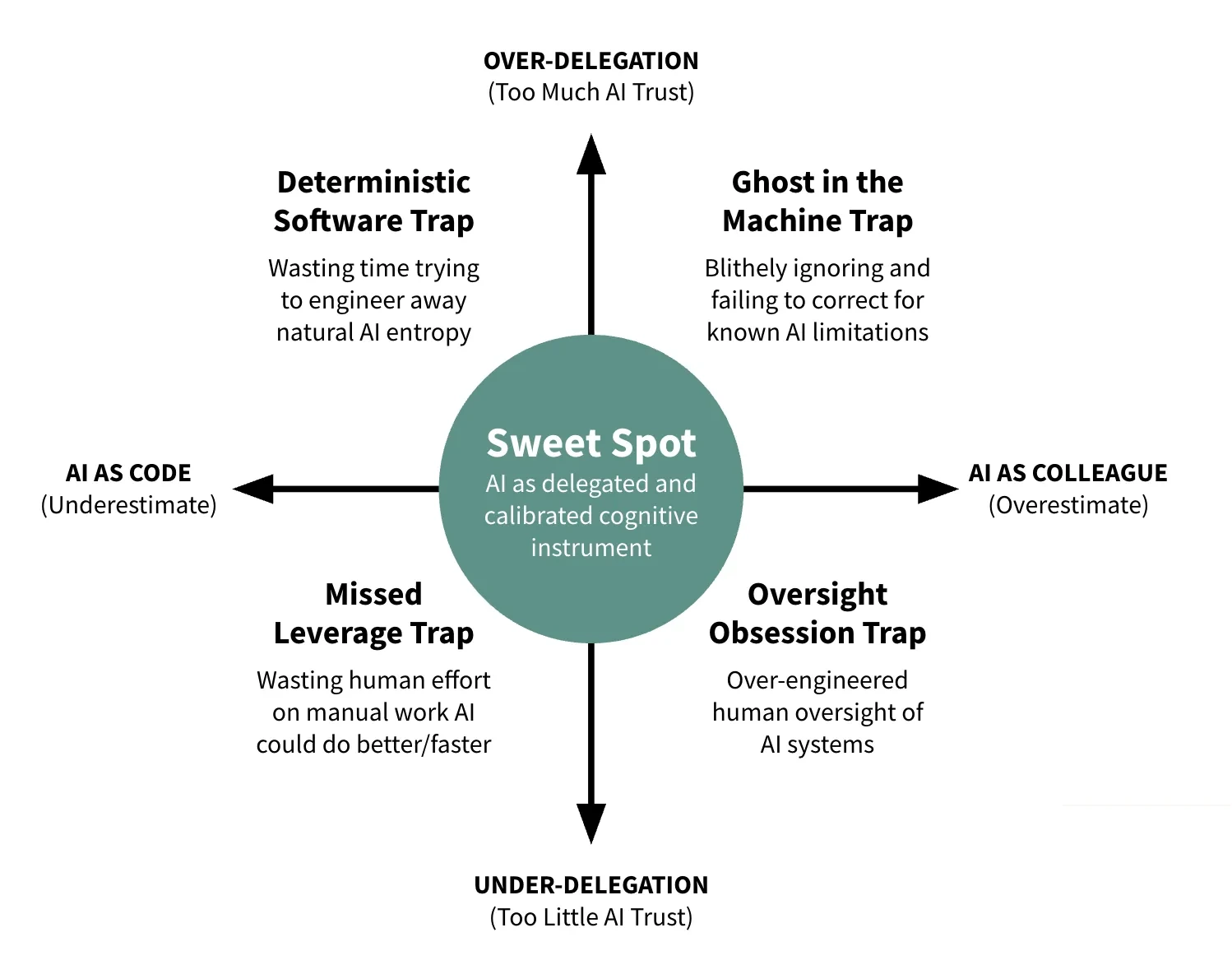

The most common mistake starts with a reasonable-sounding premise: we have thousands of interactions per month, AI can handle conversations, therefore we should automate as many as possible. The problem is that this treats the boundary between AI work and human work as an obstacle — how do we push more volume to the bot? — when it’s actually a design surface. The organizations getting this wrong aren’t failing at technology. They’re failing at boundary design.

Wu breaks this down with a clarity that most vendor pitches obscure. Customer interactions fall into three fundamentally different categories, and each demands a different AI strategy. Collapsing them into a single automation metric is where the damage starts.

The first category is interactions that shouldn’t exist at all. They happen because a billing statement or form is confusing, a product has a known defect, or a process generates unnecessary friction. United Airlines discovered this through their “insight to action” team — a group that uses Cresta’s platform to surface *why* people call in the first place. When the root cause is a failed process upstream, the right response isn’t a better bot. It’s fixing the process so the call never happens.

Consider the math. If a confusing billing statement is driving thousands of calls per month, that’s a product design failure — not a chatbot opportunity. AI helped you diagnose it. Fix the statement, and those calls disappear. Not because you handled them faster, but because you eliminated the reason they existed. No automation investment required. No ongoing bot maintenance. The return is pure operational improvement, and it compounds: every eliminated failure point reduces volume permanently.

The second category is interactions where nobody wants a conversation. Password resets. Package tracking. Flight seat changes. As Wu puts it, “neither party want to talk to each other.” Automate these completely. The consequence of error is low, the task is straightforward, and speed is the only metric that matters. Customers prefer instant resolution to waiting on hold. Agents prefer handling work that actually requires their attention. Full automation here isn’t controversial — it’s what both sides want.

The third category is where the replacement logic breaks down entirely. Your house flooded and you’re calling your insurance company. Your healthcare claim was denied and you don’t understand why. Your flight was canceled and you’re stranded with two kids in an unfamiliar city. In these moments, an automated response isn’t efficient — it’s insulting. The customer needs to feel heard by another human being. There is genuine business value in that connection: loyalty, retention, brand perception that no containment metric captures.

For this category, the right AI strategy is augmentation — giving human agents capabilities they couldn’t have alone. Surfacing the right policy document instantly instead of making the agent search through a knowledge base while the customer waits. Pre-filling forms so three minutes of data entry becomes three seconds. Summarizing a complex case history so the agent walks into the conversation already understanding what happened and what’s been tried. The human focuses entirely on empathy and judgment. The AI handles everything that competes for that attention.

These three categories (eliminate, automate, augment) are structurally different problems requiring structurally different solutions. They are not a spectrum. Organizations that ask “how much can we automate?” collapse all three into a single metric: containment rate, the percentage of interactions handled without a human, where higher is assumed to be better. This occasionally produces impressive pilot numbers. It also produces the disasters you read about: UnitedHealth’s automated claims denial allegedly optimizing for rejection rates, and Air Canada’s chatbot inventing a refund policy that a Canadian tribunal held the airline liable for.

Organizations that ask “which category does this interaction fall into?” design boundaries where AI and humans each do what they’re best at. The first question optimizes for cost. The second optimizes for outcomes. The difference shows up in whether your AI investment creates sustainable value or generates lawsuits.
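To make the “which category?” question concrete, here is a minimal Python sketch of the triage. It is our illustration, not Cresta’s implementation: the `Interaction` fields, the `choose_strategy` function, and the rule order are all assumptions chosen to mirror the three categories described above.

```python
from dataclasses import dataclass
from enum import Enum


class Strategy(Enum):
    ELIMINATE = "eliminate"  # fix the upstream cause so the contact never happens
    AUTOMATE = "automate"    # resolve fully without a human
    AUGMENT = "augment"      # keep the human, give them AI assistance


@dataclass
class Interaction:
    # Hypothetical labels an operations team might assign to a contact reason.
    reason: str
    caused_by_upstream_defect: bool  # confusing statement, known defect, broken process
    customer_needs_human: bool       # flooded house, denied claim, stranded traveler
    low_error_cost: bool             # a mistake is cheap and easy to reverse


def choose_strategy(interaction: Interaction) -> Strategy:
    """Assign one of the three categories. The rule order is an assumption."""
    if interaction.caused_by_upstream_defect:
        return Strategy.ELIMINATE
    if interaction.low_error_cost and not interaction.customer_needs_human:
        return Strategy.AUTOMATE
    # Default to augmentation whenever the case is ambiguous or high stakes.
    return Strategy.AUGMENT


if __name__ == "__main__":
    examples = [
        Interaction("confusing billing statement", True, False, True),
        Interaction("password reset", False, False, True),
        Interaction("denied healthcare claim", False, True, False),
    ]
    for item in examples:
        print(f"{item.reason}: {choose_strategy(item).value}")
```

The one deliberate design choice is the fallback: when a contact reason does not clearly belong to the first two categories, the sketch sends it to a human with assistance rather than to a bot.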

So why haven’t more organizations made this shift? The comfortable explanation is that models aren’t good enough yet. Wu points to a more consequential bottleneck: “Most of the Fortune 500, agents have to deal with multiple systems, sometimes nine to 10 different systems. A lot of those systems are homegrown, do not have APIs.”

You can deploy the most capable frontier model available, but if your claims processing system is a 30-year-old mainframe that agents interact with through a graphical interface designed for humans, the AI has no hands. It can reason brilliantly and act on nothing. Wu contrasts this with a simple e-commerce operation built on Shopify, where everything runs through modern APIs. “We can probably automate 100%, to be very frank.”

The implication is significant and under-appreciated: every system you expose through an API creates a new design surface where you can architect how automated and human work combine. Infrastructure doesn’t just determine *how much* you can automate — it determines *what kinds of boundaries you can design*. An organization with clean APIs between its order, payment, and fulfillment systems can design a boundary where AI handles the entire transaction flow while humans focus exclusively on exception resolution. An organization where those systems don’t talk to each other can’t design that boundary at all, regardless of how capable the model is.

Infrastructure determines what boundaries are possible. Model capability determines how well you execute within them. Most organizations are investing heavily in the second while ignoring the first. They buy the best model and point it at a system it can’t touch.

This matters enormously for mid-market organizations, and the news is mostly good. You probably have legacy systems, but almost certainly fewer than a Fortune 500 company with nine or ten siloed platforms accumulated over decades of acquisitions. Your modernization decisions don’t require eighteen months of procurement review. A mid-market company can decide in January to expose its order management system through a structured API and have it operational by March. Every process you move from screen-scraping to structured data becomes a candidate for intelligent boundary design.

The organizations capturing value fastest aren’t the ones with the best models. They’re the ones with the cleanest infrastructure. At mid-market scale, getting to clean infrastructure is a months-long project, not a years-long one. That’s a genuine competitive advantage over enterprises paralyzed by their own complexity.

There’s a transformation hiding inside Wu’s framework that deserves more attention than front-line automation: what happens to management when AI can observe everything.

In a traditional contact center, QA teams manually review 1-2% of interactions. At enterprise scale, hundreds of people may be dedicated to this function, and they’re still flying blind on the other 98% or more of what’s happening. A manager might spend Tuesday morning listening to six recorded calls from the previous week, scoring them on a rubric, and filing the results. By the time a pattern shows up (an agent consistently mishandling a particular policy, a new product generating widespread confusion), weeks have passed. The damage is done. The coaching opportunity is stale.

When AI monitors every interaction in real time, the math changes structurally. A manager becomes something like an air traffic controller — scanning a dashboard of live interactions, receiving alerts when the system flags a critical situation, arriving early enough to change outcomes rather than document failures after the fact.
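A rough calculation shows why sampling misses patterns. The sketch below is illustrative only: the call volume (400 per month) and the agent’s error rate (5%) are invented for the example, and it assumes reviewed calls are chosen at random and errors occur independently.

```python
def detection_probability(calls_handled: int, sample_rate: float, error_rate: float) -> float:
    """Chance that at least one reviewed call contains the recurring mistake.

    Assumes random sampling and independent errors (simplifying assumptions).
    """
    reviewed = round(calls_handled * sample_rate)
    return 1.0 - (1.0 - error_rate) ** reviewed


if __name__ == "__main__":
    # Hypothetical agent: 400 calls a month, mishandles a policy on 5% of them.
    sampled = detection_probability(400, 0.02, 0.05)  # 2% manual QA sample
    full = detection_probability(400, 1.00, 0.05)     # every call monitored
    print(f"2% sample:     {sampled:.1%} chance the pattern surfaces this month")
    print(f"full coverage: {full:.1%} chance the pattern surfaces this month")
```

Under these assumptions a 2% sample reviews eight calls and has roughly a one-in-three chance of catching the problem in a given month, while full coverage makes detection all but certain.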

This amounts to a redesign of what the management system is. The old system was designed around human limitations — hence the 1-2% sampling rate. The new system should be designed around what humans are actually best at: coaching, judgment, and intervention at critical moments. Some management tasks are diagnostic — identifying what’s going wrong across thousands of interactions. Some are transactional — logging scores, documenting reviews, generating compliance reports. Some require human judgment that no model can replicate: coaching an agent through a difficult customer interaction in real time, making a policy exception for an unusual situation, recognizing that a high performer is burning out before they quit. AI is structurally better at the first two categories. Humans are structurally better at the third. Design the boundary between them.

For mid-market operators, this shift matters more than automating front-line interactions. You don’t have hundreds of dedicated QA staff. You have managers wearing multiple hats — handling escalations, coaching agents, reviewing calls when they find time, running reports nobody reads. The QA function doesn’t get a dedicated team; it gets whatever attention is left over after everything urgent gets handled.

When AI handles continuous monitoring and surfaces only the interactions that need human attention, those managers get their real jobs back. They can coach instead of hunting for problems. They can spot a struggling agent before performance craters. They can focus on the new hire who needs guidance rather than retrospectively scoring last week’s calls. In a labor market where hiring and training contact center agents is already expensive, the retention impact alone changes the economics. Keeping one good agent who would have quit because nobody noticed they were struggling is worth more than automating fifty password resets.

The deepest insight in Wu’s operating model is what happens when you treat augmentation as a diagnostic instrument rather than a transitional phase.

When you deploy AI alongside human agents, you can observe something you’d otherwise never see: where AI fails and how humans resolve those same situations. That human performance data becomes training signal for improving the next version of the AI. Wu describes this explicitly — the same Cresta platform that assists human agents also powers autonomous agents, and the data flows between both. Human interactions reveal which conversation flows are ready for automation, how to stress-test AI agents with realistic scenarios, and where current models fall short in ways that matter.

This creates a compounding advantage that pure-replacement strategies can never access. If you replace all your agents with bots on day one, you lose the human performance data that tells you where the bots are failing. You discover the failures through customer complaints, social media eruptions, and churn. By then, the damage is real and the diagnosis is expensive.

Organizations that start with augmentation build an increasingly detailed map of their own operation. They learn which interactions genuinely require human judgment — not in theory, but from observing thousands of real conversations. They learn where their infrastructure supports full automation and where it doesn’t. They learn where current models fall short in ways specific to their customer base. That map improves continuously, because the boundary between automation and augmentation shifts as the system learns. What required a human last quarter may not require one next quarter, and the data will show you exactly when that threshold has been crossed.

The reason to start with augmentation is strategic. It generates ground truth about where your actual boundaries are. Without it, you’re designing boundaries from vendor demos and intuition. With it, you’re designing from evidence. The evidence keeps improving.

This is also why Wu pushes back on the question everyone asks: “How many humans will still remain?” The question assumes linear progression toward full automation — a steady march from 9,000 agents to 4,000 to zero. The better question is a design question: what’s the right allocation between elimination, automation, and augmentation for your specific operation, given your current infrastructure and customer base? That allocation evolves as your infrastructure improves, as models advance, and as the feedback loop between human and AI performance generates better data about where each belongs.

Wu identifies three dimensions that determine how far any organization can push automation: conversation complexity, IT infrastructure readiness, and customer demographics. Most organizations fixate on the first and ignore the other two.

If your conversations are moderately complex but your infrastructure is modern and your customers are digitally comfortable, you can move aggressively on automation. If your conversations are simple but your infrastructure is a patchwork of systems without APIs, your first investment should be integration, not AI. If your customer base skews older and values human interaction, augmentation will deliver more value than automation regardless of how capable the models become.
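The three scenarios above can be written down as a small decision sketch. This is our reading of the diagnostic, not a tool Wu describes: the `Operation` fields, the complexity scale, the precedence of the checks, and the fallback for complex conversations are all assumptions.

```python
from dataclasses import dataclass


@dataclass
class Operation:
    # Hypothetical self-assessment along the three dimensions.
    conversation_complexity: str          # "simple", "moderate", or "complex"
    systems_have_apis: bool               # IT infrastructure readiness
    customers_prefer_human_contact: bool  # customer demographics


def first_investment(op: Operation) -> str:
    """Suggest where to invest first. The order of the checks is an assumption."""
    if op.customers_prefer_human_contact:
        # Customers who value human interaction: augment the agents.
        return "augmentation"
    if not op.systems_have_apis:
        # Without APIs the AI cannot act, so integration comes before AI.
        return "integration"
    if op.conversation_complexity in ("simple", "moderate"):
        return "automation"
    # Complex conversations on modern infrastructure: keep humans in the loop.
    return "augmentation"


if __name__ == "__main__":
    print(first_investment(Operation("moderate", True, False)))
    print(first_investment(Operation("simple", False, False)))
    print(first_investment(Operation("simple", True, True)))
```

A real assessment would score each dimension on more than two or three levels; the point of the sketch is only that the answer depends on all three inputs, not on conversation complexity alone.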

This diagnostic framework aligns with what we found when we analyzed nearly 19,000 work tasks across 148 million U.S. workers: the limiting factor on AI deployment is rarely model capability. It’s the governance and infrastructure constraints that determine where organizations can safely and sustainably deploy.

Most organizations discover they’ve been investing in the wrong dimension — automating conversations when their real constraint is infrastructure, or chasing modernization when their customer base actually wants human interaction. Correct diagnosis precedes correct investment.

Wu’s company reflects the discipline his framework demands. Cresta’s operating principle used to be “stay lean.” They crossed out “stay.” Just “lean.” The number of tools you’ve acquired doesn’t matter. What matters is whether each investment does the right kind of work in the right category.

That three-part question — eliminate, automate, or augment? — applies to every business process where AI and human work intersect. Customer service, claims processing, compliance review, financial analysis, legal research. The categories don’t change. The boundaries do, and they’re specific to your operation.

Which of your interactions shouldn’t exist at all? Which ones does nobody actually want? Where does human judgment create value that no model will replicate? And what does your infrastructure actually support today — not what a vendor demo suggested was possible?

Getting those answers right matters more than getting the technology right. The best AI deployed against the wrong category doesn’t just fail to help. It actively damages the thing you’re trying to protect.

Ping Wu’s comments are drawn from his appearance on the GRIT podcast with Joubin Mirzadegan of Kleiner Perkins. The framework extensions — infrastructure as design surface, management transformation, augmentation as diagnostic instrument, and mid-market applications — are our analysis. Our research on work task automation across 19,000 tasks and 148 million U.S. workers is available at The Distillation of Work.
