The Amplification Mindset Changes What AI Is For

Jeff Whatcott · March 30, 2026

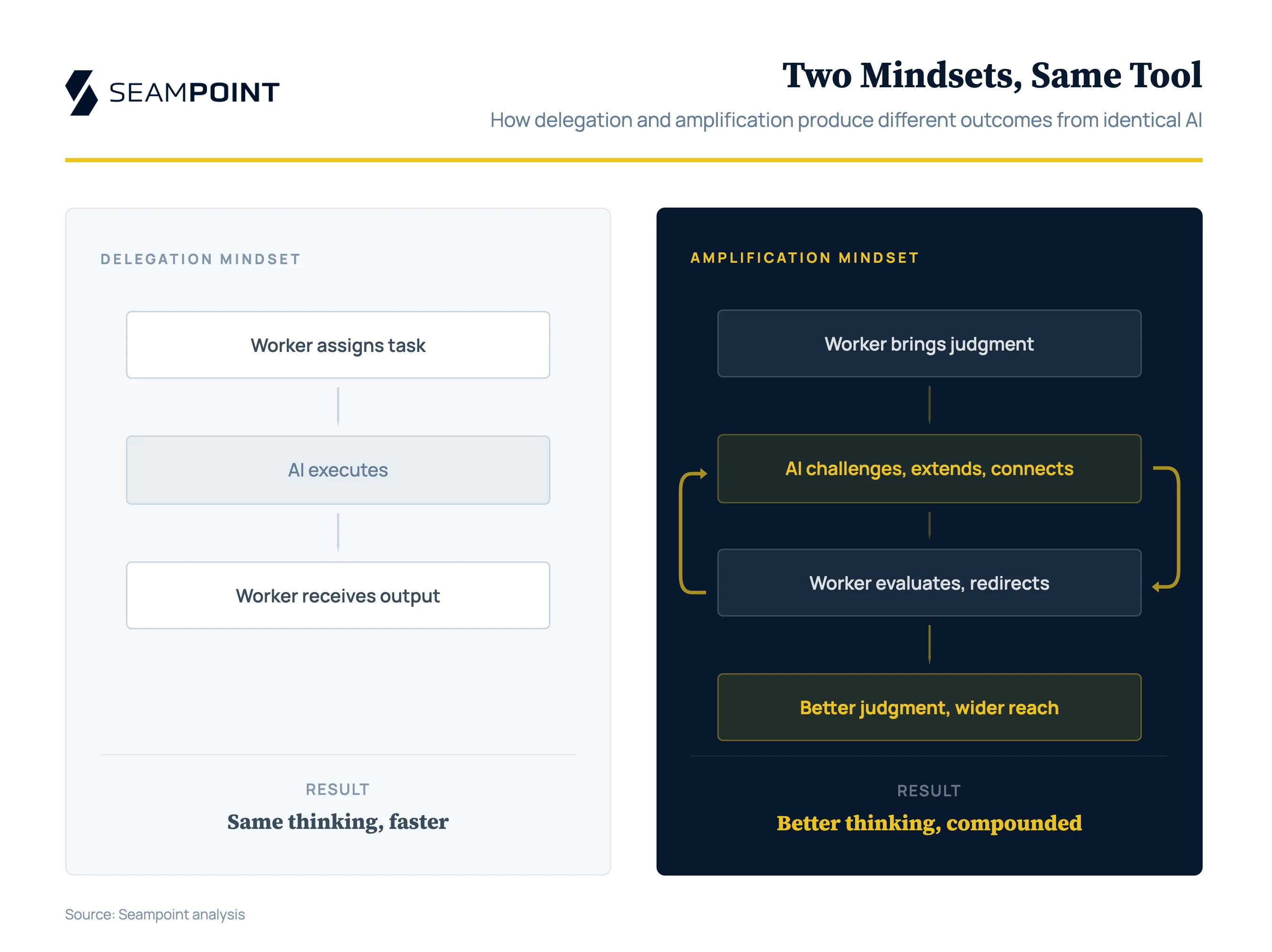

I keep hearing executives describe AI the same way: a very fast, very cheap junior employee. You hand the machine a task. The machine does the task. You receive the output. The interesting questions, in this framing, are all about trust, supervision, and credit. Did the human “really” do the work? Does she deserve praise for the output? Is she getting dumber because she’s no longer doing the task herself?

These are the wrong questions. They emerge from a model in which AI and the human are separate actors passing work products back and forth across a clean boundary. The human thinks, then hands off; the machine executes, then hands back. The philosophical literature on AI and human enhancement is almost entirely built on this model, and it leads reliably to two conclusions: either AI is a crutch that will atrophy our capabilities, or it’s a tool we can’t take credit for using. Neither conclusion is useful, because the model is incomplete.

Some tasks genuinely are delegation. The accounts payable clerk matching invoices to purchase orders is performing a high-volume reconciliation task that happens to be housed inside a human because, until recently, there was no alternative machine to do it. The customer support rep answering her 200th password-reset ticket this month is not sharpening her communication skills. She is absorbing repetitive friction that could be eliminated entirely. The marketing coordinator resizing ad creative for fifteen different platform specs is learning the specifications, not marketing. When AI takes over these tasks, the correct response is: good. Hand them off. They were never the real work.

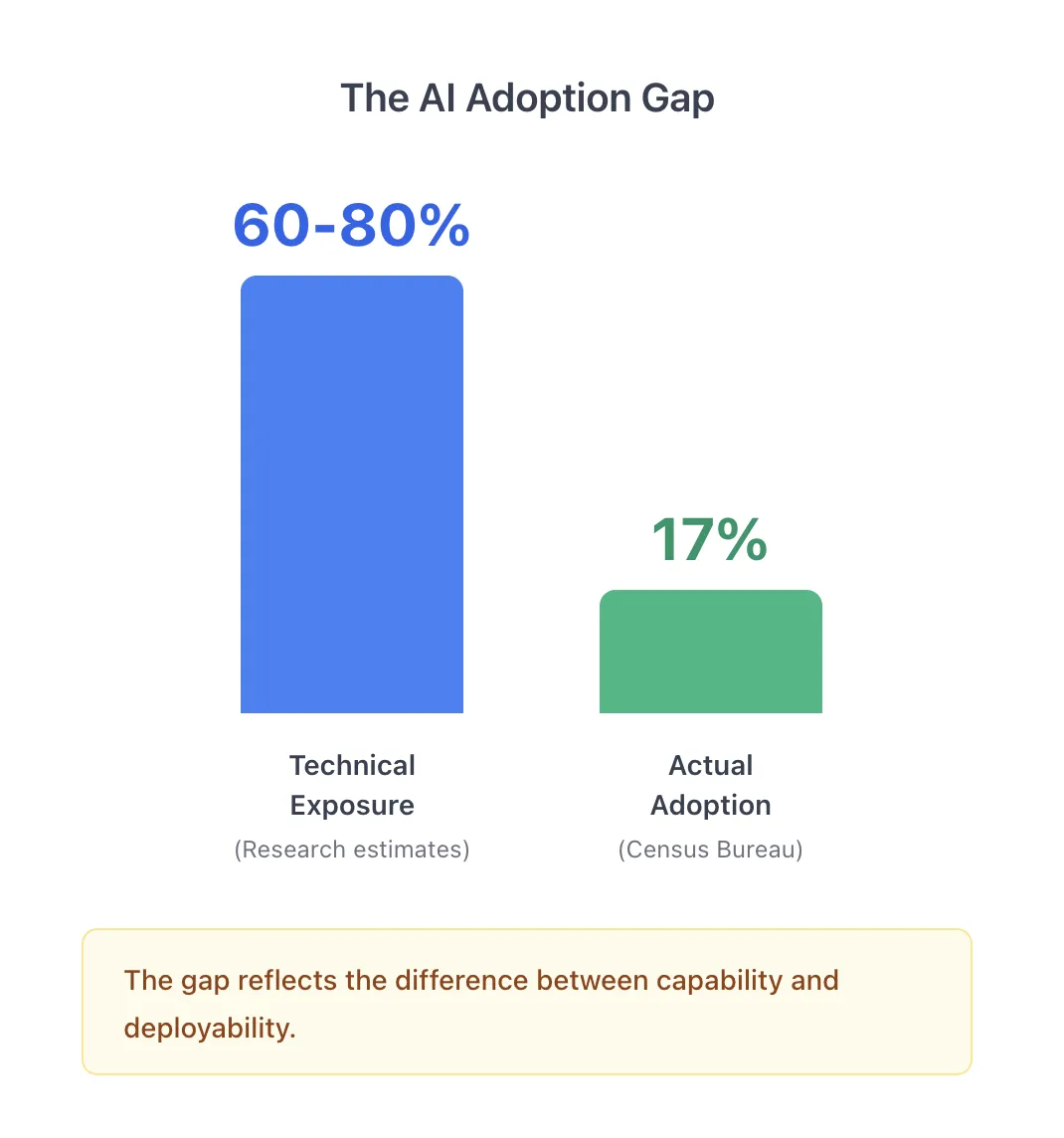

But delegation is the obvious move. Everyone sees it. The overlooked opportunity, the one that separates organizations that use AI well from those that merely use it, is amplification.

An amplifier increases the power of an input signal without changing its character. A microphone doesn’t replace the singer’s voice. It makes the voice carry farther, reach more people, fill a larger room. AI is a more creative amplifier than a Marshall stack — it generates possibilities, surfaces connections, pushes back. But the singer still has to be good. She has to recognize which of those possibilities are worth pursuing and which are confident nonsense. A bad voice amplified is just a louder bad voice. But a good voice amplified can do things that a good voice alone cannot.

This is what happens when a skilled professional uses AI well. The input is human judgment: domain knowledge, contextual awareness, ethical reasoning, the ability to read a room or sense when a client’s stated problem isn’t the real one. The AI amplifies the reach and speed of that judgment. A senior lawyer who understands how to frame a legal argument can now test that argument against dozens of jurisdictional variations in an afternoon. A strategist who sees a market pattern can now pressure-test it against data she could never have assembled manually. An engineer who understands system architecture can now implement and iterate on designs at a pace that would have required a team.

In none of these cases has the human delegated her judgment. She has extended its range. The quality of the output is still bounded by the quality of the input: the AI multiplies the consequences of her thinking, not the thinking itself.

The question “does the person deserve credit?” is malformed. It treats the AI-assisted output as a product to be attributed, when it’s actually a performance to be evaluated. Can this person reliably produce good outcomes in this configuration? If yes, she’s capable. If no, the amplifier just made her limitations louder.

To see how far the delegation model leads serious thinkers astray, consider the philosopher Sven Nyholm’s 2023 paper, “Artificial Intelligence and Human Enhancement.” Nyholm examines the AlphaGo matches and asks whether the DeepMind employee who physically moved the stones on the board, carrying out AlphaGo’s recommended moves against world champion Lee Sedol, was “cognitively enhanced.” After careful analysis, he concludes that AI offers, at best, “artificial cognitive enhancements” that enable people to behave as if they have improved capabilities without truly possessing them. Real enhancement, in his framework, requires that the human demonstrate “special effort,” “particular talent,” or “significant sacrifice.”

The paper is philosophically careful. It is also a case study in what happens when you apply the delegation model to a phenomenon it cannot describe. Nyholm’s entire analysis depends on treating the human and the AI as separate entities whose contributions can be isolated and individually assessed. But this framing only makes sense if the relevant unit of analysis is the individual human, stripped of her tools. By that logic, the lawyer using Westlaw isn’t really doing legal research. The architect using CAD isn’t really designing buildings. The surgeon using a robotic system isn’t really operating. At some point, the insistence on evaluating the unassisted human becomes an exercise in measuring a capability that no longer exists in the wild.

The amplification model dissolves Nyholm’s question rather than answering it. The DeepMind employee moving stones was a mechanical relay. He’s not interesting as a case of enhancement. But a senior lawyer using AI to pressure-test arguments across jurisdictions is doing something that requires skill, judgment, and expertise. The amplifier framework distinguishes between these cases. The praiseworthiness framework cannot.

Consider two identical twins assigned the same research paper. One rides a bicycle to the library and arrives in five minutes. She goes directly to the librarian, explains what she’s researching, and is quickly connected with the authoritative sources on her subject. She photocopies the relevant pages, puts them in her backpack, and rides home. But she doesn’t start writing immediately. On the ride back, something the librarian said made her wonder whether her original research question was the right one. She sits down with the sources and asks herself: Am I framing this correctly? What if the more interesting question is adjacent to the one I started with? She spends twenty minutes reconsidering her angle before writing a word.

Her sister, meanwhile, is only now arriving at the library on foot. The walking twin skips the librarian and starts browsing the stacks, trying to remember how the books are organized. She eventually finds some promising material, makes her copies, and walks home. By the time she sits down to write, she’s tired and behind schedule. She writes the paper she planned to write, because there’s no time to reconsider.

When they hand in their papers, the cycling twin gets an A-minus and the walking twin gets a B. Does the cyclist deserve the better grade?

Nobody would argue that walking to the library developed superior research capabilities. Nobody would claim that wandering the stacks without expert guidance is a better methodology than consulting the librarian. The bicycle and the librarian eliminated friction between the student and the intellectual work. But notice what the cycling twin did with the reclaimed time: she thought better. She used the margin the bicycle created to challenge her own framing, something the walking twin never had the bandwidth to attempt. Her existing capability carried farther because she wasn’t burning half her time on logistics. She used that margin not just for more output, but for better judgment.

The cycling twin’s bicycle is pure delegation. Getting to the library faster is not intellectually interesting. But what she did with the reclaimed time is amplification, and that’s the part most people miss. The bicycle didn’t make her smarter. It gave her room to use the intelligence she already had.

Academic commentary on AI and human capability would struggle with this example. Frameworks that evaluate enhancement in terms of “effort,” “talent displayed,” or “praiseworthiness” would score the walking twin higher on process, even though her output and her learning were both worse. The framework rewards visible struggle as a proxy for genuine engagement, and in doing so, mistakes friction for pedagogy.

This is the deepest error in the delegation-only model: it assumes that the tasks AI displaces were developing capability. I’ve written before about the irreducible human capabilities that survive refactoring: metacognitive awareness, causal reasoning, social navigation, adaptive execution. Those capabilities were never built by the mechanical work AI is now absorbing. They were built despite it, in the margins, when professionals had enough slack to reflect on what they were actually learning. The amplification framing makes this explicit. When AI eliminates the mechanical layer, the question is whether we replace incidental training with intentional training, or whether we just declare the training opportunity lost and move on. The amplifier doesn’t just make the professional faster. It makes the professional’s development faster, by stripping away the noise that was never contributing to the signal.

The typical knowledge worker spends roughly fifty to sixty percent of her day on coordination work: preparing for meetings, synthesizing information for colleagues, drafting communications, following up on decisions, searching for documents, framing problems for others to act on. This work is sovereign. The worker decides how to do it. No formal process dictates it. No governance layer reviews it. And the worker’s mindset alone determines whether AI stays a delegation tool or becomes an amplification instrument.

A delegation-minded worker asks AI to summarize the quarterly data. An amplification-minded worker asks AI to find the pattern in the data that contradicts her current hypothesis. The delegation approach produces the same quality of thinking, faster. The amplification approach produces better thinking, by using AI as a cognitive sparring partner that has no attachment to prior conclusions, no defensiveness about last quarter’s strategy, no unconscious avoidance of uncomfortable possibilities. The AI hasn’t lived through the quarter. It reads the data without baggage. That’s a feature, not a bug, as long as the worker knows to exploit it.

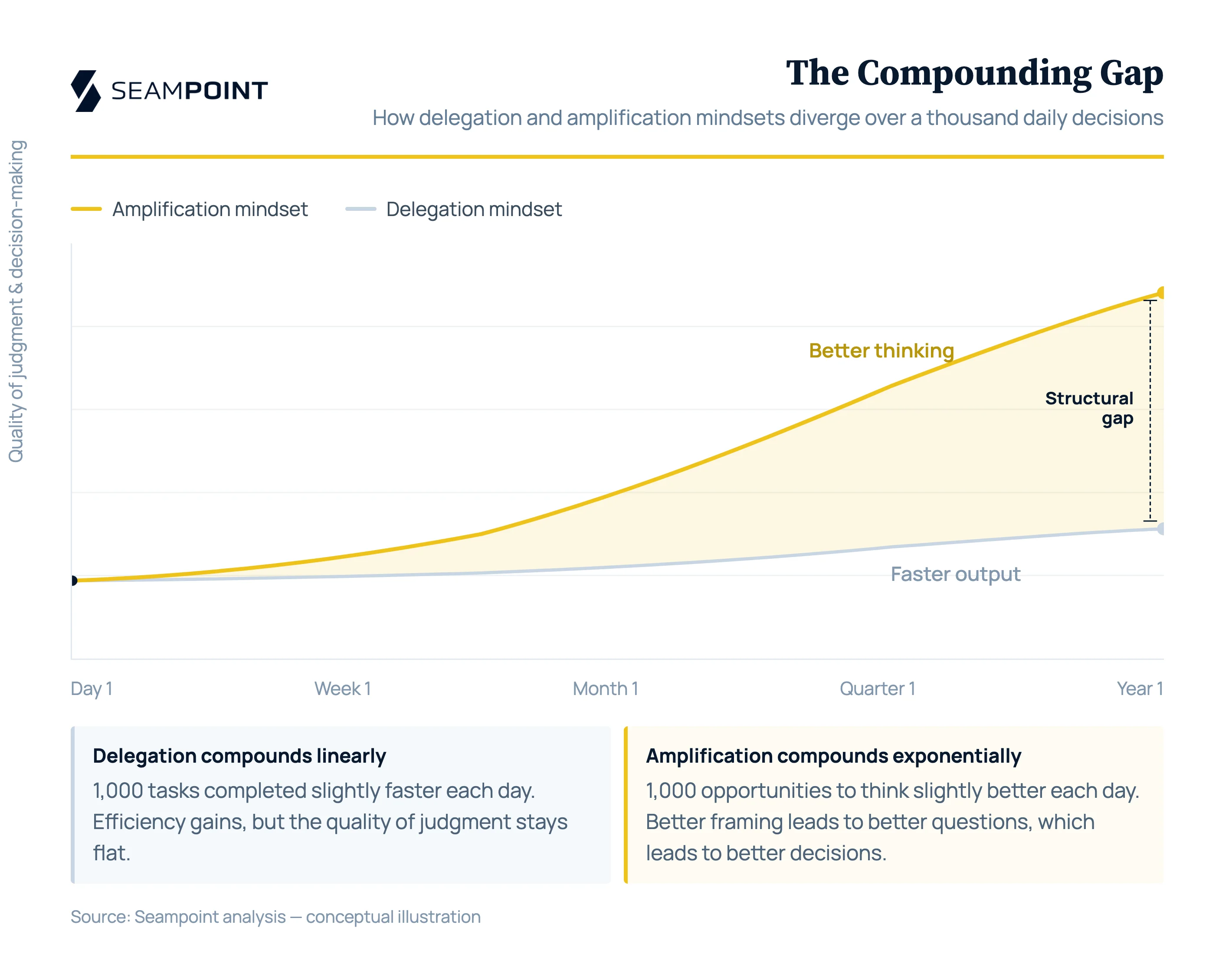

A worker makes hundreds of these micro-decisions daily: how to frame an email, how to prepare for a conversation, how to interpret ambiguous feedback, whether to raise an issue or let it slide. None of these decisions are individually high-stakes. But in aggregate, they determine the quality of the worker’s judgment, the clarity of the team’s communication, and the speed at which the organization surfaces and solves problems. A delegation mindset across a thousand daily decisions means a thousand tasks completed slightly faster. An amplification mindset means a thousand opportunities to think slightly better. Over a year, the gap between these two workers is not incremental. It is structural.

No governance layer exists to catch the missed upside. When core work is poorly designed, when AI is deployed without appropriate oversight in a high-stakes process, there are mechanisms to detect the failure. Bad outputs surface. Quality metrics flag problems. But when a worker fails to use AI as an amplifier for sovereign coordination work, nothing happens. No error occurs. The meeting just proceeds with an unexamined agenda. The email just goes out with the first framing that came to mind. The data summary just confirms what the worker already believed. The cost is invisible: opportunities for better thinking that were never pursued, because the worker’s mental model of AI stopped at “hand things off.”

None of this means amplification is automatic. Amplifiers are sensitive to input quality, and bad input amplified produces bad output at scale. A professional who lacks domain judgment and uses AI to generate work product she can’t evaluate is not being amplified. She’s being exposed. The AI will produce confident, plausible, wrong outputs, and she won’t catch them because she doesn’t know what right looks like. The real risk of AI in professional work is deployment ahead of sufficient skill development. The problem is putting someone behind the microphone who can’t sing.

If AI amplifies capability, then the returns on investing in foundational capability go up, not down. Every dollar spent developing a professional’s judgment, contextual awareness, and domain expertise now yields more output, more reach, more impact, because the amplifier is waiting. But the training itself needs to change. Training programs that teach workers to hand tasks off safely are building delegation competence when they should be building amplification instinct: the reflexive habit of using AI to challenge assumptions, explore adjacent possibilities, escape cognitive ruts, and arrive at better judgment than the worker would have reached alone. The skill floor for amplification is higher than the skill floor for delegation. But the ceiling is incomparably higher too.

The delegation model persists because it’s psychologically comfortable. If AI is a delegate, then the human is still the boss. She assigns work, reviews output, approves or rejects. The power relationship is clear. The amplification model is more demanding. If AI amplifies your capability, then your capability is on display. You can’t hide behind the process. You can’t point to the hours you spent walking to the library as evidence of your diligence. Your output either demonstrates judgment or it doesn’t, and the amplifier makes the difference between good judgment and bad judgment visible at a scale that was previously easy to obscure.

Nobody asks whether the singer “deserves credit” for the performance because she used a microphone. The microphone is part of the performance. The question is whether the performance is good. The professionals and organizations that internalize this will build training systems designed to maximize the quality of the human input. The ones that cling to delegation thinking will build oversight systems designed to minimize the risk of the machine’s output. Both matter. But only one of them develops human capability. The other manages machine liability.

Delegation will handle the invoice matching, the ticket routing, the status reporting, the format conversions. Those were logistical tasks dressed up as intellectual ones, and they persisted because there was no cheaper alternative. Now there is. But the larger question is whether we’ll use the reclaimed capacity for the work that actually requires human intelligence, or whether we’ll mistake the elimination of noise for the elimination of signal.

The amplifier is here. The question is whether you have something worth amplifying, and whether you’ll use it to think better or just to finish faster.
