The Backstory Behind "The Distillation of Work"

Jeff Whatcott · January 15, 2026

Last summer I spent months researching what differentiates humans from AI. What can people do that models consistently struggle with? Where do human judgment and embodied experience actually matter? The answers, gleaned from deep dives into the neuroscience literature, were more interesting than I expected.

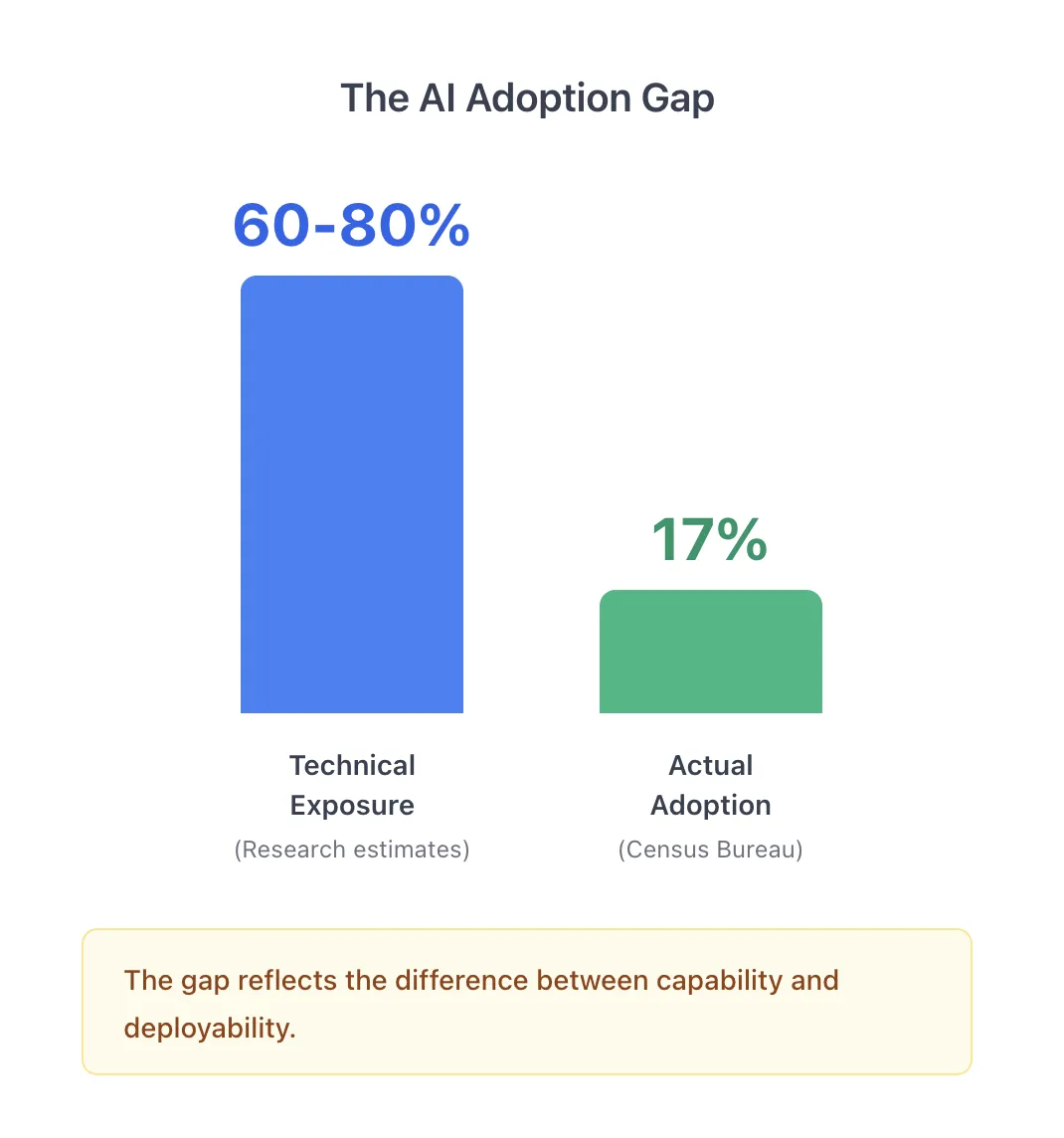

Then autumn arrived, and with it a tsunami of headlines about AI job displacement. I saw study after study finding that 60-80% of work tasks overlap with AI capabilities, including two new ones in the past 45 days alone. I read countless predictions of massive workforce disruption. The tone was urgent, sometimes alarming.

But something about these studies and media narratives didn’t fit. My research on human distinctiveness suggested AI would struggle with many tasks that are core to most jobs. The headlines said otherwise.

Then I started noticing something else: the cascade of high-profile AI deployment failures. Lawsuits. Retractions. And quietly, the rise of AI exclusions in professional liability insurance. Insurers were telling us something the research wasn’t.

That’s when it clicked. The research was asking what AI can theoretically do. Organizations were asking what AI can be trusted to do. Those are different questions. And the gap between them matters. A lot.

The Census Bureau reports that only 17% of U.S. businesses are using AI in any business function. Not 60-80%. Not even close. That gap, between what AI can do and what organizations really deploy, is where this project started.

Two Different Questions

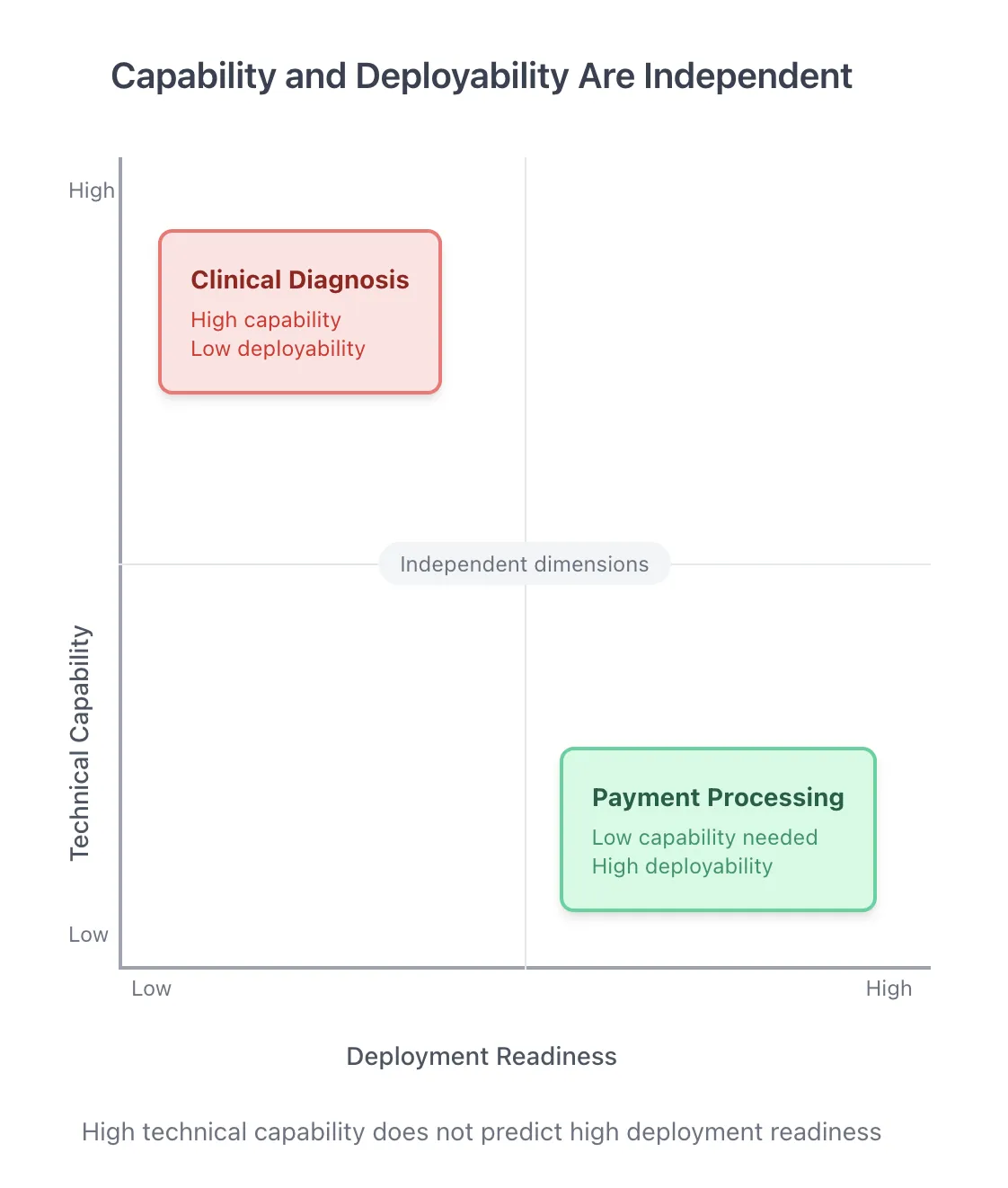

Technical capability asks whether an AI system can perform a task. Deployment readiness asks whether an organization can responsibly hand that task over, given liability structures, verification requirements, regulatory constraints, and the physical realities of the work.

These aren’t the same dimension. They’re not even correlated in predictable ways.

Consider clinical diagnosis. AI systems can match or exceed physician accuracy for many conditions. The technical capability is impressive. But malpractice liability doesn’t transfer to software. No hospital general counsel is signing off on autonomous AI diagnosis anytime soon, because the liability architecture normally doesn’t permit it. It’s worth noting that Utah’s AI Sandbox is experimenting with limited regulatory immunity for low-risk cases, like Doctronic’s AI prescription renewals, but these are deliberate carve-outs, not the general rule.

Now consider payment processing. It requires minimal intelligence. But verification is trivial (did the money move?), errors are usually reversible, and no one needs to authorize each transaction. Organizations automated this decades ago and keep pushing the boundaries.

High capability, low deployability. Low capability, high deployability. The two dimensions are independent.

What Stops Deployment

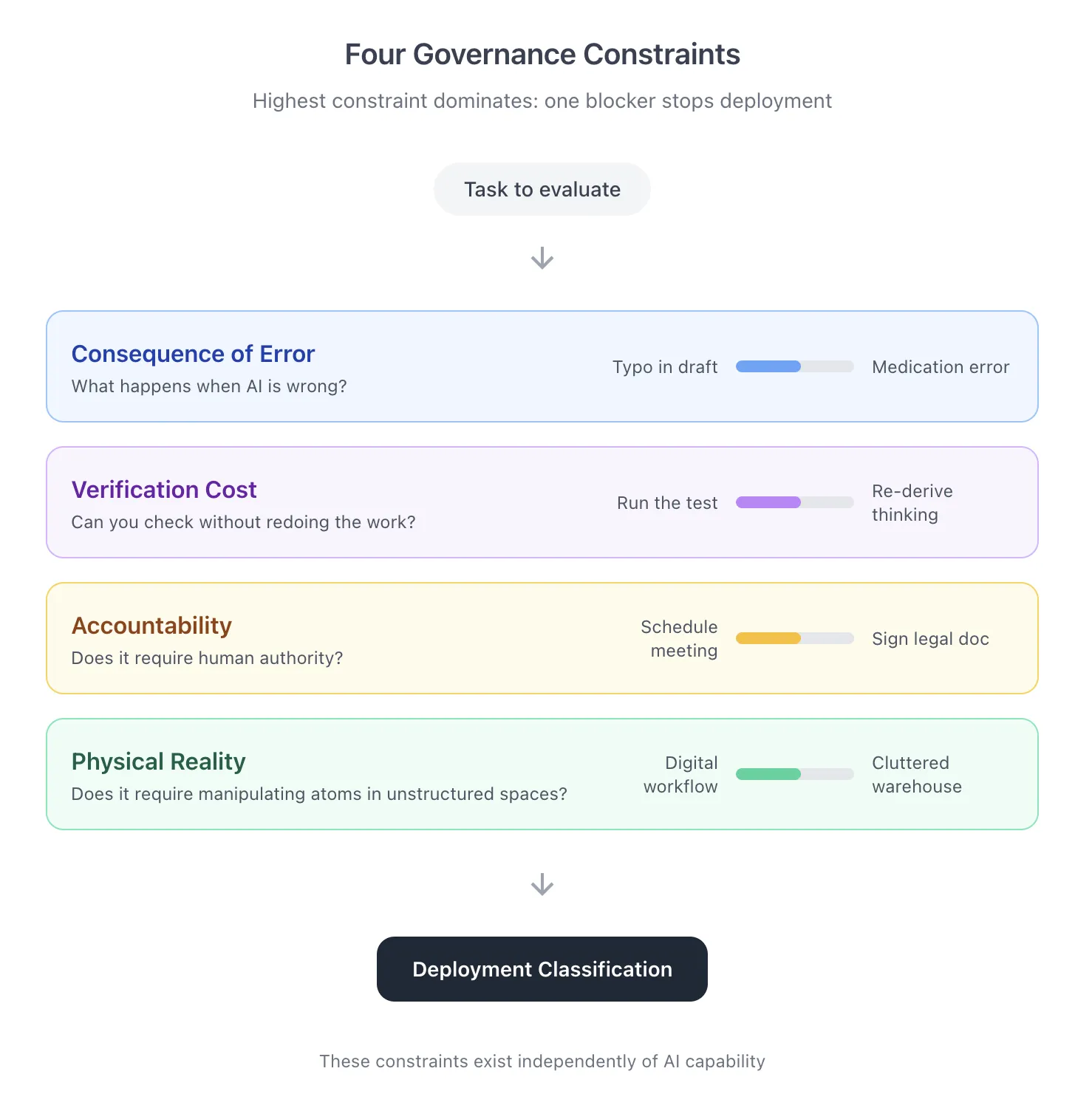

Once we started cataloging what prevented deployment in practice (in actual organizational decisions, not in theory), four patterns kept emerging:

Consequence of error. What happens when the AI is wrong? A typo in a draft email is annoying. A medication error is catastrophic. The stakes shape what organizations will delegate and how, if at all.

Verification cost. Can you check the output without redoing all the work? This turns out to be the sleeper constraint. If verifying the AI’s work costs as much as doing it yourself, the economic case for delegation collapses, no matter how capable the system.

Accountability requirements. Does someone need to authorize this? Sign off on it? Be professionally responsible? Some decisions require a human in the loop because the decision requires human authority.

Physical reality. Does the task require manipulating atoms in unstructured environments? Robots on fenced-off factory floors are mature. Robots in cluttered warehouses are not.

The insight is that these constraints exist independently of AI capability. They’re features of organizational governance, legal structures, and physical environments. They don’t change just because the model gets better.

The Verification Cost Discovery

We built a framework to evaluate these four constraints across nearly 19,000 tasks spanning 148 million U.S. workers. We weighted all four constraints equally and let the highest constraint dominate: if any single dimension prevents deployment, the task stays with humans regardless of how permissive the other three are.
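The "highest constraint dominates" rule can be sketched in a few lines. This is a minimal illustration, not the white paper's implementation: the 0-3 rating scale, the `ConstraintScores` class, and the example ratings are all assumptions made up for this sketch.

```python
from dataclasses import dataclass, asdict


@dataclass(frozen=True)
class ConstraintScores:
    """Hypothetical 0-3 ratings; higher means more resistance to delegation."""
    consequence_of_error: int
    verification_cost: int
    accountability: int
    physical_reality: int


def resistance(scores: ConstraintScores) -> int:
    """Equal weights, highest constraint dominates: take the max, not the mean."""
    return max(asdict(scores).values())


def binding_constraints(scores: ConstraintScores) -> list[str]:
    """Names of every constraint tied for the highest rating."""
    top = resistance(scores)
    return [name for name, value in asdict(scores).items() if value == top]


# Illustrative ratings only, loosely following the two examples in the text.
payment_processing = ConstraintScores(1, 0, 0, 0)
clinical_diagnosis = ConstraintScores(3, 2, 3, 0)

print(resistance(payment_processing), binding_constraints(payment_processing))
# 1 ['consequence_of_error']
print(resistance(clinical_diagnosis), binding_constraints(clinical_diagnosis))
# 3 ['consequence_of_error', 'accountability']
```

Using the maximum rather than an average is what lets a single blocking dimension keep a task with humans even when the other three are permissive.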

What emerged surprised us. We didn’t design the framework to prioritize any constraint. But verification cost turned out to predict resistance to deployment more strongly than anything else.

Of the tasks we classified as immediately deployable, 90% have trivial verification costs: you can check the output easily and cheaply. Of the high-resistance tasks, 73% have verification costs that approach or exceed the cost of just doing the work yourself.

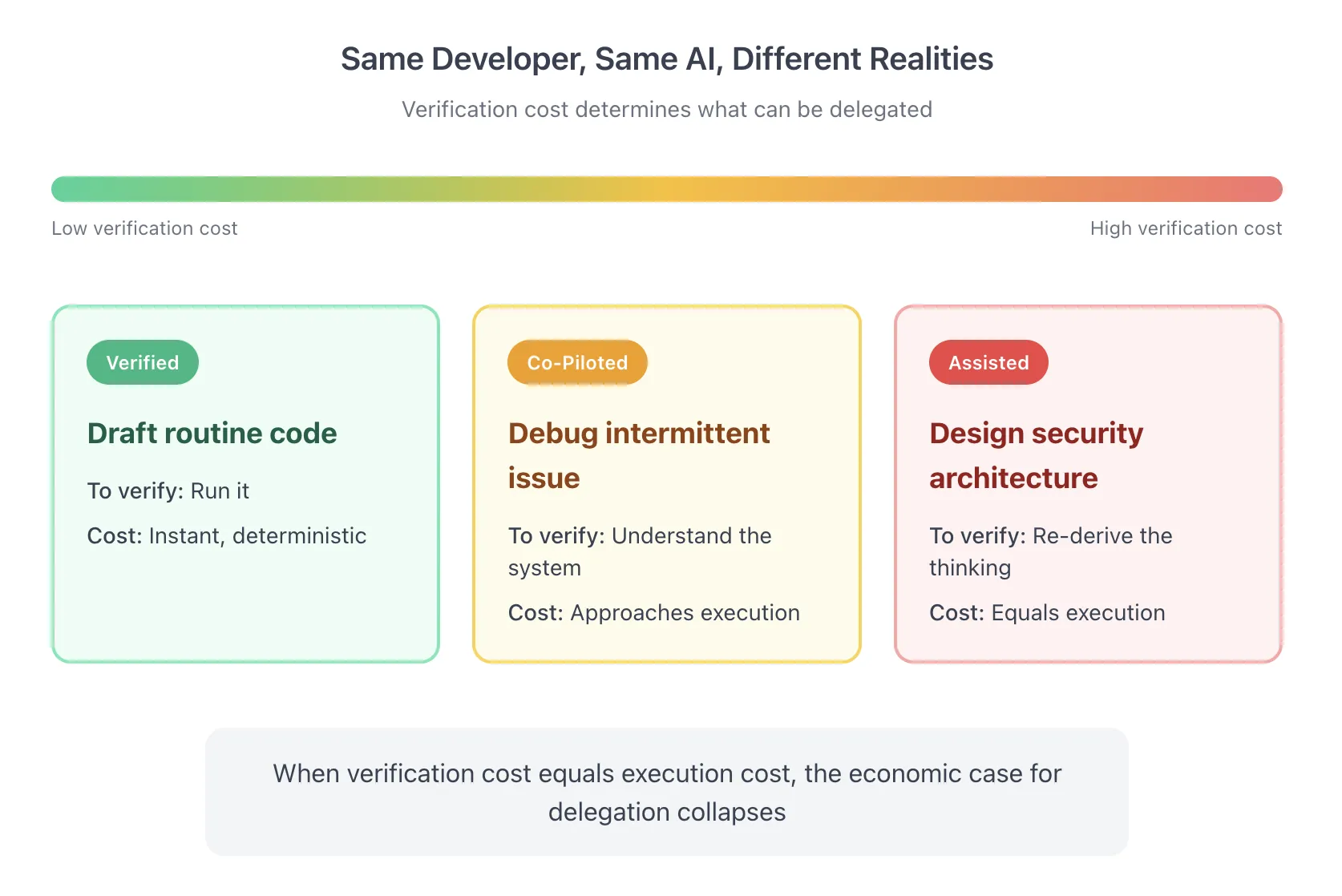

This makes intuitive sense once you see it. Think about a software developer:

Writing a unit test for an existing function. The AI generates the test code. The developer runs it: either it passes or it fails. Verification is instant and deterministic. This is happening everywhere, today.

Debugging an intermittent production issue. The AI suggests a cause for sporadic payment timeouts. But to verify the suggestion, the developer needs to actually understand race conditions, thread pools, and network behavior. Verification requires expertise and approaches execution cost. AI-assisted, not AI-delegated.

Designing security architecture for sensitive data. The AI produces a proposal. But to verify it, the architect must think through every threat model, every compliance requirement, every failure mode. Verification equals re-derivation. No engineering director signs off without redoing the thinking.

Same developer. Same AI capabilities. Completely different deployment realities, driven by verification economics.
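One rough way to make the verification economics concrete is to compare the time it takes to check an output with the time it takes to produce it. The sketch below is only an illustration: the hour estimates, the `delegate_below` and `redo_at` thresholds, and the three mode labels are assumptions, not values from our framework.

```python
def verification_ratio(verify_hours: float, do_hours: float) -> float:
    """Cost of checking the AI's output relative to doing the work yourself."""
    if do_hours <= 0:
        raise ValueError("do_hours must be positive")
    return verify_hours / do_hours


def deployment_mode(verify_hours: float, do_hours: float,
                    delegate_below: float = 0.2, redo_at: float = 1.0) -> str:
    """Classify a task by how close verification comes to re-doing the work.

    Both thresholds are hypothetical cut-offs chosen for this example.
    """
    ratio = verification_ratio(verify_hours, do_hours)
    if ratio < delegate_below:
        return "AI-delegated"
    if ratio < redo_at:
        return "AI-assisted"
    return "human-led"


# Made-up hour estimates for the three developer tasks described above.
tasks = {
    "unit test for existing function": (0.05, 1.0),
    "intermittent production bug": (3.0, 4.0),
    "security architecture": (40.0, 40.0),
}

for name, (verify_hours, do_hours) in tasks.items():
    print(f"{name}: {deployment_mode(verify_hours, do_hours)}")
# unit test for existing function: AI-delegated
# intermittent production bug: AI-assisted
# security architecture: human-led
```

Once the ratio reaches 1.0, checking the output costs as much as producing it, which is the point where the economic case for delegation collapses.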

The Coordination Overhead Gap

One thing we noticed early: the standard occupational databases systematically under-count “work about work.” Meetings, status updates, documentation, email, searching for information. Microsoft and Asana have both found that 58-60% of work time goes to coordination activities, but the task databases don’t reflect this.

So we enriched the data. We added thousands of coordination tasks and reclassified existing ones, calculating how much of each occupation’s work is coordination. The range: 5% to 75% depending on the role.

Why does this matter? Coordination overhead is often governance-safe. Scheduling a meeting, drafting a status update, searching internal documentation: these tasks have low consequence of error, cheap verification, and no accountability constraints. Even when core work can’t be delegated (a physician can’t delegate diagnosis), the coordination surrounding it often can (insurance authorization, appointment scheduling, documentation).

This unlocks opportunity that pure task-delegation models miss entirely.
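To see why the coordination layer matters, consider a toy calculation for a physician's week. The hours below are invented for illustration, and the `Task` structure is a hypothetical stand-in for our enriched task data, not its actual schema.

```python
from typing import NamedTuple


class Task(NamedTuple):
    name: str
    weekly_hours: float
    is_coordination: bool
    delegable: bool  # assumed governance-safe to hand to AI


def share(tasks: list[Task], predicate) -> float:
    """Fraction of total weekly hours spent on tasks matching the predicate."""
    total = sum(t.weekly_hours for t in tasks)
    if total == 0:
        return 0.0
    return sum(t.weekly_hours for t in tasks if predicate(t)) / total


# Hypothetical week; the task names follow the physician example in the text.
physician_week = [
    Task("diagnosis", 24.0, is_coordination=False, delegable=False),
    Task("insurance authorization", 4.0, is_coordination=True, delegable=True),
    Task("appointment scheduling", 2.0, is_coordination=True, delegable=True),
    Task("documentation", 10.0, is_coordination=True, delegable=True),
]

coordination_share = share(physician_week, lambda t: t.is_coordination)
core_delegable_share = share(physician_week,
                             lambda t: t.delegable and not t.is_coordination)
overall_delegable_share = share(physician_week, lambda t: t.delegable)

print(f"coordination: {coordination_share:.0%}")
print(f"delegable core work: {core_delegable_share:.0%}")
print(f"delegable overall: {overall_delegable_share:.0%}")
```

In this made-up week none of the core work is delegable, yet 40% of total hours still are, which is the opportunity a model that looks only at core tasks would miss.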

What the Numbers Show

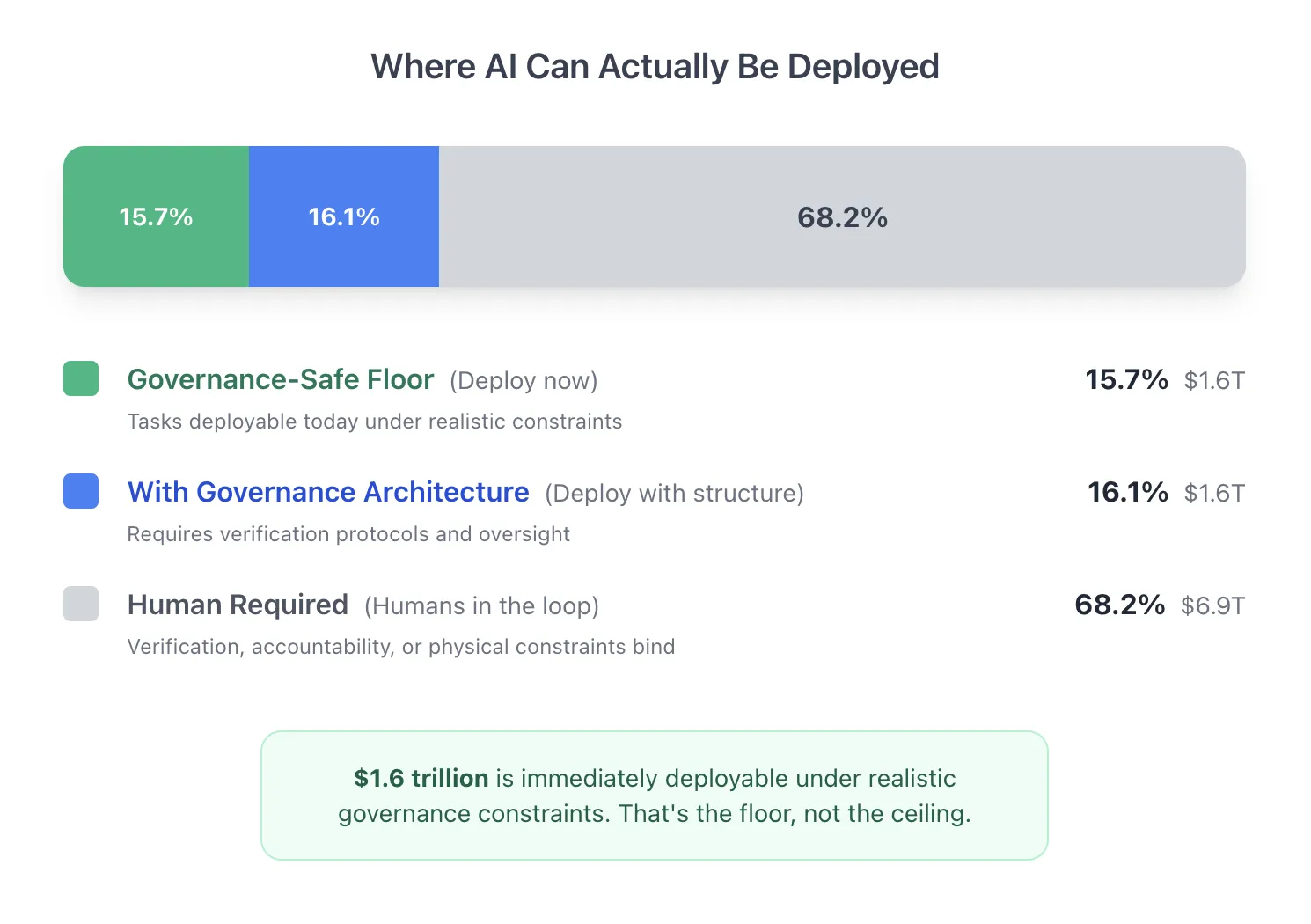

Yesterday we published The Distillation of Work, our new white paper. The headline finding: only 15.7% of work, representing about $1.6 trillion in annual wages, sits in what we call the “governance-safe floor.” These are tasks where organizations can deploy AI today under realistic liability and verification constraints.

That number is realistic, not disappointing. And $1.6 trillion is an enormous opportunity.

Another 16.1% of work can shift toward AI with proper governance architecture: clear verification protocols, appropriate human oversight, well-designed workflows. The binding constraint is organizational readiness, not capability.

The rest requires humans in the loop or on the loop because verification costs are too high, accountability can’t transfer due to regulatory or consequence barriers, the physical environment doesn’t permit automation, or some combination of these.
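As a back-of-the-envelope check on how the three segments add up, the arithmetic can be sketched as follows. One assumption is flagged in the code: the implied total wage base is derived here by treating the 15.7% as a share of wages, and it is not a figure quoted from the white paper.

```python
# Figures quoted in the text above.
safe_floor_pct = 15.7        # governance-safe floor, percent of work
safe_floor_wages_tn = 1.6    # annual wages for that floor, in trillions of USD
with_governance_pct = 16.1   # can shift with proper governance architecture

# Whatever is left stays with humans in or on the loop.
remaining_pct = 100.0 - safe_floor_pct - with_governance_pct

# ASSUMPTION: the percentages are wage-weighted, so the total wage base can be
# backed out from the first pair of numbers. This is a derived estimate only.
implied_wage_base_tn = safe_floor_wages_tn / (safe_floor_pct / 100.0)

print(f"remaining with humans: {remaining_pct:.1f}%")
# remaining with humans: 68.2%
print(f"implied wage base: ${implied_wage_base_tn:.1f} trillion")
# implied wage base: $10.2 trillion
```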

Distillation

Here’s what this means for how work changes:

The tasks that are most eligible to shift to AI are disproportionately routine, verifiable, and low-stakes. The tasks that most need to remain with humans are disproportionately judgment-intensive, high-stakes, and hard to verify.

We call this job distillation. We expect that roles will concentrate around the work that actually requires human judgment, oversight, and accountability. The drudgery work evaporates and is picked up by properly governed AI agents.

For individual workers, we believe this will place a premium on verification skill: the ability to evaluate AI output, to catch errors, and to make judgment calls when the answer isn’t obvious. For organizations, it means the winners will be those who figure out how to structure workflows so AI handles the verifiable pieces while humans focus on what only humans can do.

Where This Goes Next

The framework we built is more than just a national estimate. It’s a measurement system that can scale down to every level.

We can run this analysis by industry, showing where governance-safe deployment concentrates (spoiler: it’s not evenly distributed). We can run it by occupation, by state, by firm size. We can identify where regulatory innovation like Utah’s AI Sandbox is shifting what’s deployable. We can help individual organizations assess their own task portfolios, identifying where they’re leaving safe deployment on the table and where they’re taking on risk they shouldn’t.

If you want to understand where AI opportunity actually concentrates in your context, the realistic deployment frontier rather than the theoretical maximum, that’s what this methodology enables.

And the workforce perspective is just one facet. The same governance constraints apply wherever AI meets organizational process. More on that to come.

Read the Full White Paper

We’ve published the complete white paper with full methodology, validation metrics, and detailed findings. It’s available at seampoint.com/white-paper/.

Special thanks to my colleagues Alan Berrey and Tim Robinson for their pivotal contributions to both the research and the writing.

Seampoint helps organizations identify and capture AI opportunity under realistic governance constraints. If you’re interested in applying this framework to your specific context, reach out on our contact page.
